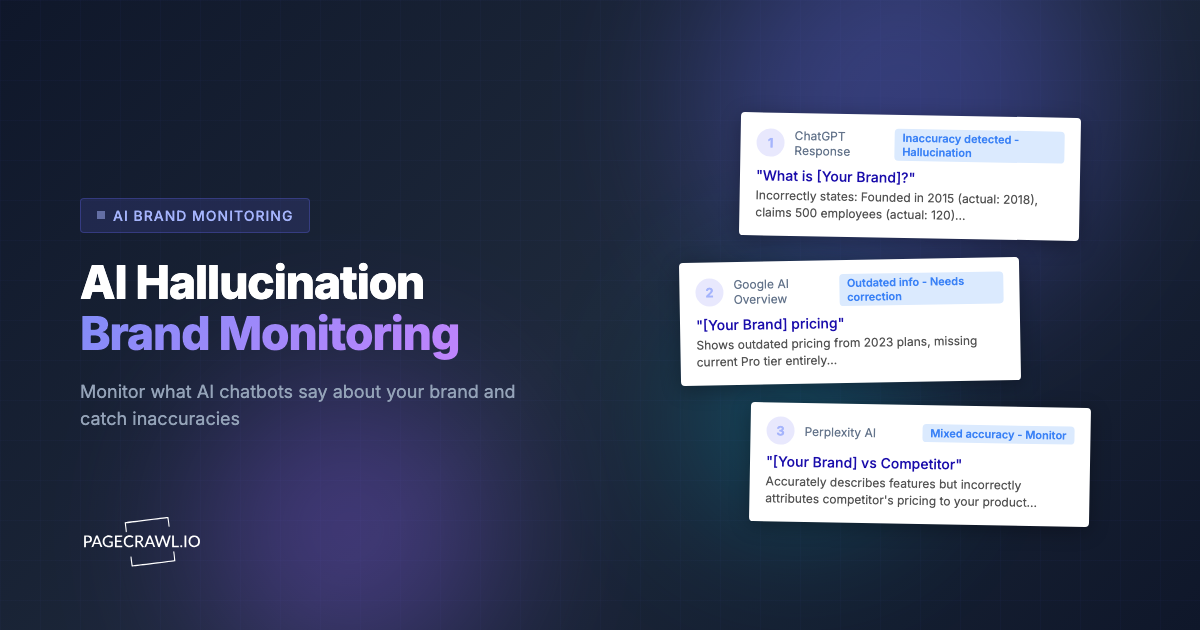

A Fortune 500 company discovered that ChatGPT was telling users their product had been discontinued. It had not been discontinued. The AI had confused the company's product rebrand with a discontinuation, and this incorrect answer had been circulating for months before anyone on the marketing team thought to ask ChatGPT about their own brand.

This is not an isolated incident. AI hallucinations about brands are widespread, persistent, and increasingly consequential. When a potential customer asks an AI assistant about your product, they receive an answer that sounds authoritative and confident, even when it is completely fabricated. Wrong pricing, phantom features, confused competitor comparisons, outdated company descriptions: these are not edge cases. They are the norm for most brands that have not actively addressed AI accuracy.

This guide covers how to systematically monitor what AI platforms say about your brand across ChatGPT, Gemini, Perplexity, Claude, Google AI Overviews, and other AI systems, and what to do when the information is wrong.

The AI Hallucination Problem for Brands

What AI Gets Wrong About Brands

AI hallucinations about brands fall into several categories, each with different consequences:

Fabricated features: AI systems confidently describe features your product does not have. A monitoring tool might be described as offering "real-time collaboration" when it does not. A SaaS product might be credited with an integration that does not exist. Users who sign up expecting these phantom features become frustrated customers.

Wrong pricing: AI models are trained on historical data that includes old pricing pages, blog posts mentioning prices, and comparison articles. If you raised your prices last year, AI might still quote the old price. Worse, it might fabricate a pricing tier that never existed, like a "free forever" plan you never offered.

Competitor confusion: In crowded categories, AI systems frequently confuse which company offers which feature. Your competitor's unique selling point gets attributed to you, or your differentiator gets credited to them. This undermines the positioning you have spent years building.

Outdated descriptions: AI training data has a cutoff date. Everything published after that date is invisible to the model unless it retrieves live web results. Company pivots, product evolution, leadership changes, and market repositioning go unrecognized.

Fabricated company details: AI generates plausible-sounding but false facts about companies, including wrong founding dates, incorrect headquarters locations, fabricated funding amounts, and invented partnerships.

Why This Problem Is Getting Worse

Several trends are accelerating the AI hallucination problem for brands:

More AI platforms means more surfaces for errors. It is not just ChatGPT anymore. Perplexity, Google AI Overviews, Bing Copilot, Claude, Gemini, Meta AI, and dozens of specialized AI assistants all generate answers about brands. Each platform has different training data, different retrieval systems, and different error patterns. An answer that is correct on Perplexity might be fabricated on ChatGPT.

More users trust AI answers. A growing percentage of knowledge workers now use AI as their primary research tool for product evaluations and purchasing decisions. When AI provides a wrong answer, users often accept it without verification. The authoritative tone of AI responses reduces the likelihood that users will double-check by visiting your website.

AI answers influence other AI systems. When one AI system generates incorrect information about your brand, that answer can be scraped, republished, and fed into the training data of other AI systems. A single hallucination can propagate across the entire AI ecosystem, becoming increasingly difficult to correct.

AI is becoming the first touchpoint. For many potential customers, the first time they encounter your brand is through an AI-generated answer, not through your website, not through a Google search result, and not through a referral. That AI-generated first impression shapes their entire perception of your product.

What to Monitor

Effective AI brand monitoring requires covering multiple platforms, each with different characteristics.

Perplexity

Perplexity is a search-focused AI that generates answers with citations. Because it retrieves live web results, its answers change frequently and can be monitored through standard web monitoring.

Why monitor it: Perplexity is growing rapidly as an alternative to Google search. Its answers include source citations, which means users can verify claims, but most do not. Incorrect Perplexity answers carry extra weight because the citations create an illusion of thoroughness.

What to track: Search for your brand name, your brand vs. competitors, your product category, and problem-solution queries your product addresses. Each search generates a unique URL that can be monitored.

Google AI Overviews

Google AI Overviews (formerly SGE) appear at the top of search results for many queries. These AI-generated summaries are seen by millions of users and often reduce click-through to the underlying search results.

Why monitor it: Google AI Overviews are the highest-visibility AI-generated content about your brand. They appear before organic search results, which means users may never see your own website's description if the AI Overview answers their question (correctly or incorrectly).

What to track: Your brand name, your product category, your brand vs. competitor comparisons, and common questions about your product. Each Google search URL can be monitored.

ChatGPT

ChatGPT is the most widely used AI assistant. Its answers are generated from training data and, increasingly, from web retrieval. ChatGPT does not have persistent URLs for conversations, which makes it harder to monitor through traditional web monitoring.

Why monitor it: ChatGPT's user base is enormous. Incorrect answers reach millions of people. Because conversations are private, you cannot see what ChatGPT tells individual users about your brand, only what it tells you when you ask.

What to track: Brand queries, product comparison queries, and problem-solution queries. Monitoring requires either periodic manual checking or API-based automated querying.
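
The API-based approach can be sketched roughly as follows. This is a minimal illustration, not a specific vendor integration: `ask` is a hypothetical callable that wraps whatever chat API client you use, and `fake_ask` and the brand name "Acme Monitor" are stand-ins so the example runs on its own.

```python
from typing import Callable


def audit_answers(
    ask: Callable[[str], str],
    queries: list[str],
    red_flags: list[str],
) -> dict[str, list[str]]:
    """Ask each query and return the red-flag phrases found in each answer."""
    findings: dict[str, list[str]] = {}
    for query in queries:
        answer = ask(query).lower()
        hits = [phrase for phrase in red_flags if phrase.lower() in answer]
        if hits:
            findings[query] = hits
    return findings


def fake_ask(query: str) -> str:
    # Hypothetical stand-in for a real API call; swap in your own client wrapper.
    return "Acme Monitor was discontinued in 2023."


report = audit_answers(
    fake_ask,
    ["What is Acme Monitor?"],
    ["discontinued", "free forever", "real-time collaboration"],
)
print(report)
```

Simple phrase matching only catches errors you already know to look for, so it complements manual review rather than replacing it.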

Bing Copilot

Bing Copilot integrates AI answers directly into Microsoft's search engine. Its answers are visible to users of Bing, Microsoft Edge, and Windows search.

Why monitor it: Bing Copilot reaches users across Microsoft's ecosystem. Its answers often differ from ChatGPT's despite both using OpenAI technology, because Bing Copilot has access to live web search results.

What to track: The same brand and category queries you track on other platforms. Bing search URLs with AI responses can be monitored.

Claude (Anthropic)

Claude is used by a growing number of businesses and developers. Its answers about brands tend to be more cautious (explicitly noting uncertainty), but hallucinations still occur.

What to track: Brand name queries and product comparisons, particularly for B2B brands where Claude's business user base is concentrated.

Gemini (Google)

Gemini is Google's standalone AI assistant, separate from AI Overviews in search. It has its own interface and generates different answers than Google AI Overviews for the same queries.

What to track: Brand queries through the Gemini web interface. Gemini conversation URLs can be monitored for changes when Google updates the model.

Setting Up AI Monitoring with PageCrawl

PageCrawl can monitor any AI platform that generates accessible web pages. Here is how to set up comprehensive monitoring.

Monitoring Perplexity

Perplexity is the easiest AI platform to monitor because each search has a persistent URL.

- Go to Perplexity.ai and search for your brand name

- Copy the URL from the results page

- Create a PageCrawl monitor with this URL

- Set tracking mode to "Content Only" to focus on the AI response text

- Set check frequency to daily

- Set AI focus to: "Track changes in how this AI response describes [your brand]. Alert me about changes to features described, pricing mentioned, competitive comparisons, and overall sentiment."

Repeat for your most important brand-related queries, such as:

- "[Your brand] review"

- "[Your brand] pricing"

- "[Your brand] vs [top competitor]"

- "Best [your category] tools"

- "[Your category] comparison"

- "[Top competitor] alternatives"
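
If you track several competitors, the query patterns above can be expanded programmatically. The sketch below is one possible way to do it; the brand, category, and competitor names are placeholders, and `build_queries` is a helper invented for this illustration.

```python
# Query patterns taken from the list above.
TEMPLATES = [
    "{brand} review",
    "{brand} pricing",
    "{brand} vs {competitor}",
    "Best {category} tools",
    "{category} comparison",
    "{competitor} alternatives",
]


def build_queries(brand: str, category: str, competitors: list[str]) -> list[str]:
    """Expand each template, repeating competitor templates once per competitor."""
    queries = []
    for template in TEMPLATES:
        if "{competitor}" in template:
            for competitor in competitors:
                queries.append(
                    template.format(brand=brand, category=category, competitor=competitor)
                )
        else:
            queries.append(template.format(brand=brand, category=category))
    return queries


for query in build_queries("Acme", "uptime monitoring", ["Globex", "Initech"]):
    print(query)
```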

Monitoring Google AI Overviews

Google AI Overviews appear for many search queries and can be monitored through the search results URL.

- Search Google for your target keyword

- If an AI Overview appears, copy the search URL

- Create a PageCrawl monitor with the URL

- Use CSS selector targeting to focus on the AI Overview section specifically, or use content-only mode

- Set check frequency to daily or every 2 days

- Set AI focus to: "Track changes in the AI Overview content. Alert me about changes in how my brand is described, which competitors are mentioned, and what claims are made."

For detailed guidance on using CSS selectors to target specific page elements, see our CSS selector guide.

Monitoring Bing Copilot

Bing Copilot responses can be monitored similarly to Google AI Overviews.

- Search Bing for your brand or category query

- Copy the URL when a Copilot response is displayed

- Create a PageCrawl monitor for the URL

- Use content-only tracking mode

- Set check frequency to daily

Building a Multi-Platform Dashboard

For comprehensive AI monitoring, create monitors across multiple platforms for the same query:

| Query | Perplexity | Google AI | Bing Copilot |
|---|---|---|---|
| "[Brand] review" | Monitor 1 | Monitor 2 | Monitor 3 |
| "[Brand] pricing" | Monitor 4 | Monitor 5 | Monitor 6 |
| "[Brand] vs [Competitor]" | Monitor 7 | Monitor 8 | Monitor 9 |
| "Best [category] tools" | Monitor 10 | Monitor 11 | Monitor 12 |

This matrix gives you cross-platform visibility. When one platform's answer changes, you can check whether other platforms also updated, suggesting a broader shift in AI understanding of your brand.

Organize these monitors using folders and tags in PageCrawl. Create a folder per platform and tag by query type (brand, category, comparison).
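
A query-by-platform matrix like this can be generated rather than maintained by hand. The sketch below covers only Google and Bing, using their standard `?q=` search URLs; Perplexity is left out because its result URLs are copied from the results page as described earlier. The folder and tag names are illustrative assumptions, and a search URL does not guarantee that an AI answer appears for that query.

```python
from itertools import product
from urllib.parse import urlencode

# Platform label -> search endpoint (standard public search URLs).
SEARCH_BASES = {
    "google-ai": "https://www.google.com/search",
    "bing-copilot": "https://www.bing.com/search",
}


def monitor_matrix(queries: dict[str, str]) -> list[dict[str, str]]:
    """Build one monitor row per (query, platform) pair.

    `queries` maps each query string to a tag such as "brand" or "comparison".
    """
    rows = []
    for (query, tag), (platform, base) in product(queries.items(), SEARCH_BASES.items()):
        rows.append(
            {
                "query": query,
                "platform": platform,
                "folder": platform,  # one folder per platform
                "tag": tag,          # tag by query type
                "url": f"{base}?{urlencode({'q': query})}",
            }
        )
    return rows


for row in monitor_matrix({"Acme pricing": "brand", "Acme vs Globex": "comparison"}):
    print(row["platform"], row["tag"], row["url"])
```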

Building a Multi-Platform AI Monitoring System

Prioritize by Business Impact

Not all AI queries about your brand are equally important. Prioritize monitoring based on business impact:

Highest priority (monitor daily):

- Direct brand name queries ("What is [brand]?")

- Pricing queries ("[Brand] pricing")

- Comparison queries against your top 3 competitors

Medium priority (monitor every 2-3 days):

- Category queries ("Best [category] tools")

- Problem-solution queries

- "[Brand] review" queries

Lower priority (monitor weekly):

- Niche use case queries

- Long-tail comparison queries

- Historical or background queries
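
To see what a tiered schedule costs in checks, a rough estimate like the one below can help. It assumes a 30-day month and treats "every 2-3 days" as every 3 days; both are simplifying assumptions, and the monitor counts are made up for illustration.

```python
# Days between checks for each priority tier (medium tier rounded to 3 days).
CHECK_INTERVAL_DAYS = {"high": 1, "medium": 3, "low": 7}


def monthly_checks(monitor_counts: dict[str, int], days_in_month: int = 30) -> int:
    """Estimate total checks per month for a given number of monitors per tier."""
    total = 0
    for tier, count in monitor_counts.items():
        checks_per_monitor = days_in_month // CHECK_INTERVAL_DAYS[tier]
        total += count * checks_per_monitor
    return total


# 9 daily, 9 every-3-days, and 6 weekly monitors.
print(monthly_checks({"high": 9, "medium": 9, "low": 6}))  # 270 + 90 + 24 = 384
```

By the same arithmetic, six daily monitors use about 180 checks a month.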

Establish Baselines

Before you can detect changes, you need to know what AI currently says about you. For each monitored query:

- Record the current AI response

- Note which facts are correct, which are wrong, and which are missing

- Score the response on accuracy, completeness, and sentiment

- Set this as your baseline

PageCrawl automatically captures the initial state when you create a monitor, giving you a built-in baseline.
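
If you also keep your own scored baselines, a small record type is enough. The sketch below is one possible shape, not a PageCrawl data format; the field names and the 1-5 scale for completeness are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Baseline:
    query: str
    platform: str
    captured_on: date
    response_text: str
    accuracy: int       # 1-5, higher is better
    completeness: int   # 1-5 (assumed scale)
    sentiment: str      # "positive", "neutral", or "negative"
    correct_facts: list[str] = field(default_factory=list)
    wrong_facts: list[str] = field(default_factory=list)
    missing_facts: list[str] = field(default_factory=list)

    def needs_correction(self) -> bool:
        """True when the baseline contains wrong or missing facts."""
        return bool(self.wrong_facts or self.missing_facts)


baseline = Baseline(
    query="Acme pricing",
    platform="perplexity",
    captured_on=date(2025, 1, 15),
    response_text="Acme offers a free forever plan.",
    accuracy=2,
    completeness=3,
    sentiment="neutral",
    wrong_facts=["free forever plan"],
)
print(baseline.needs_correction())
```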

Set Up Cross-Platform Alerts

Route all AI monitoring alerts to a single channel so your team has a unified view. A dedicated Slack channel or email address works well. To integrate AI brand monitoring with your broader strategy, see our guide on online reputation monitoring, which covers review sites, social media mentions, and news coverage alongside AI monitoring.

Responding to AI Hallucinations

Detecting inaccuracies is only half the battle. Here is how to respond effectively.

Content Strategy: Feed AI Correct Information

AI systems learn from web content. The most effective long-term strategy for correcting AI hallucinations is publishing clear, structured, authoritative content on your own website.

Structured data and schema markup: Implement Organization, Product, and FAQ schema on your website. AI systems increasingly use structured data to ground their answers.

FAQ pages: Create comprehensive FAQ pages that directly answer the questions AI users ask. "What is [your brand]?", "[Your brand] pricing", "[Your brand] features." Write these in a Q&A format that AI systems can easily extract.

Comparison pages: Publish your own comparison pages ("[Your brand] vs [Competitor]") with accurate, up-to-date information. When AI systems encounter conflicting information, your authoritative source carries significant weight.

Regular content updates: AI systems that retrieve live web content (Perplexity, Google AI Overviews, Bing Copilot) use your latest published content. Keep pricing pages, feature pages, and about pages current.
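
As an illustration of the structured data step, the sketch below generates schema.org `FAQPage` and `Organization` JSON-LD from plain Python data. The brand name, URL, and answers are placeholders; the output would go inside a `<script type="application/ld+json">` tag on the relevant page.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def organization_jsonld(name: str, url: str, description: str) -> dict:
    """Build minimal schema.org Organization markup."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
    }


faq = faq_jsonld(
    [
        ("What is Acme Monitor?", "Acme Monitor is a website uptime monitoring tool."),
        ("Does Acme Monitor have a free plan?", "No. Plans start with a 14-day trial."),
    ]
)
print(json.dumps(faq, indent=2))
```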

Direct Correction Requests

Some AI platforms accept feedback:

- Perplexity: Use the feedback mechanism on individual answers

- Google AI Overviews: Use Google's feedback tools in search

- ChatGPT: Report incorrect information through the interface

These correction mechanisms have varying effectiveness, but submitting corrections creates a signal that can influence future answers.

Monitor for SEO Impact

AI hallucinations can indirectly affect your SEO. When AI platforms describe your product incorrectly, users who then search for those phantom features find nothing on your site, increasing bounce rates. Monitor your SEO metrics alongside AI monitoring to detect these secondary effects.

Monitor Review Boards and Comparison Sites

AI systems draw heavily on third-party review boards (G2, Capterra, TrustRadius, Product Hunt) and comparison sites when generating brand descriptions. Inaccurate or outdated information on these platforms often becomes the source of AI hallucinations. PageCrawl can monitor your brand's profile pages across review boards, alerting you when ratings change, new reviews appear, or your product description is modified. Catching an inaccurate review board listing early and correcting it at the source is often more effective than trying to fix the downstream AI answer directly.

Metrics to Track

Inclusion Rate

How often does your brand appear in AI responses for relevant category queries? Track the percentage of category and problem-solution queries where AI mentions your brand. If your inclusion rate drops, it may indicate a competitor is gaining AI visibility at your expense.
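
Inclusion rate is a simple percentage. A minimal sketch, assuming you have collected one response text per query and that a case-insensitive substring match is a good enough test for "mentions your brand":

```python
def inclusion_rate(responses: dict[str, str], brand: str) -> float:
    """Percentage of query responses that mention the brand at least once."""
    if not responses:
        return 0.0
    mentioned = sum(1 for text in responses.values() if brand.lower() in text.lower())
    return 100.0 * mentioned / len(responses)


responses = {
    "Best uptime monitoring tools": "Popular options include Globex and Acme.",
    "uptime monitoring comparison": "Globex and Initech lead the category.",
    "How do I detect website outages?": "Tools such as ACME can alert you.",
    "Globex alternatives": "Consider Initech.",
}
print(inclusion_rate(responses, "Acme"))  # 2 of 4 responses -> 50.0
```

Substring matching can overcount when the brand name is also a common word, so a stricter matcher may be needed for such names.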

Accuracy Score

For each AI response that mentions your brand, score it on factual accuracy:

- 5 (Perfect): All facts correct, up-to-date, fair representation

- 4 (Minor issues): Mostly correct with small omissions or outdated details

- 3 (Significant issues): Some correct, some wrong, missing key information

- 2 (Mostly wrong): More inaccuracies than accurate statements

- 1 (Harmful): Fundamentally misleading, fabricated claims, or confusion with another brand

Track this score over time across platforms. Improvements in accuracy score after you publish corrective content indicate your content strategy is working.
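
One way to track the score over time is to average it per platform and period. The sketch below assumes scores are recorded as `(platform, period, score)` tuples; the period labels and values are illustrative.

```python
from collections import defaultdict
from statistics import mean


def average_scores(scores: list[tuple[str, str, int]]) -> dict[tuple[str, str], float]:
    """Average the 1-5 accuracy scores for each (platform, period) pair."""
    grouped: dict[tuple[str, str], list[int]] = defaultdict(list)
    for platform, period, score in scores:
        if not 1 <= score <= 5:
            raise ValueError(f"score must be between 1 and 5, got {score}")
        grouped[(platform, period)].append(score)
    return {key: mean(values) for key, values in grouped.items()}


scores = [
    ("perplexity", "month-1", 2),
    ("perplexity", "month-1", 3),
    ("perplexity", "month-2", 4),
    ("google-ai", "month-1", 3),
]
for key, avg in sorted(average_scores(scores).items()):
    print(key, avg)
```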

Sentiment Analysis

Is the AI's description of your brand positive, neutral, or negative? AI systems can adopt the sentiment of their training data. If negative reviews or critical articles dominate the training data for your brand, AI responses may skew negative even when describing accurate facts.

Competitive Position

In comparison queries, where does AI rank or position your brand relative to competitors? Track whether you are mentioned first, mentioned alongside, mentioned unfavorably, or omitted entirely. Changes in competitive positioning within AI responses can signal broader market perception shifts.
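
Mention order can be approximated from the text of a response. The sketch below labels a brand as "first", "alongside", or "omitted" based on where each name first appears; it cannot detect an unfavorable mention, which still needs human or sentiment review. The brand names are placeholders.

```python
def mention_order(answer: str, brands: list[str]) -> list[str]:
    """Return the brands that appear in the answer, ordered by first mention."""
    lowered = answer.lower()
    positions = {brand: lowered.find(brand.lower()) for brand in brands}
    found = [brand for brand, pos in positions.items() if pos >= 0]
    return sorted(found, key=lambda brand: positions[brand])


def position_label(answer: str, brand: str, competitors: list[str]) -> str:
    """Classify the brand's position as 'first', 'alongside', or 'omitted'."""
    order = mention_order(answer, [brand, *competitors])
    if brand not in order:
        return "omitted"
    return "first" if order[0] == brand else "alongside"


answer = "Globex and Acme are both popular; Initech is cheaper."
print(mention_order(answer, ["Acme", "Globex", "Initech"]))
print(position_label(answer, "Acme", ["Globex", "Initech"]))
```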

Response Consistency

How consistent are AI answers about your brand across platforms and over time? High consistency suggests a stable AI understanding of your brand. High variability suggests conflicting signals in the training data, which is an opportunity to publish clarifying content.
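
A crude consistency score can be computed from text similarity alone. The sketch below averages pairwise `difflib.SequenceMatcher` ratios across platforms; this measures wording overlap, not factual agreement, so treat it as a rough proxy under that assumption.

```python
from difflib import SequenceMatcher
from itertools import combinations


def consistency(answers: dict[str, str]) -> float:
    """Mean pairwise text similarity (0.0 to 1.0) across platform answers."""
    pairs = list(combinations(answers.values(), 2))
    if not pairs:
        return 1.0  # zero or one answer is trivially consistent
    ratios = [SequenceMatcher(None, a.lower(), b.lower()).ratio() for a, b in pairs]
    return sum(ratios) / len(ratios)


answers = {
    "perplexity": "Acme is an uptime monitoring tool with plans from $10 per month.",
    "google-ai": "Acme is an uptime monitoring tool with plans from $10 per month.",
    "bing-copilot": "Acme is a discontinued analytics product.",
}
print(round(consistency(answers), 2))
```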

Advanced Monitoring Strategies

Automated Multi-Query Monitoring

For brands that need to track dozens of queries, use PageCrawl's API and webhook integration to build an automated monitoring pipeline:

- Define your query list (brand, category, comparison, problem-solution queries)

- Create Perplexity and Google AI monitors for each query

- Configure webhooks to send change data to a central database

- Build a dashboard that tracks accuracy, inclusion, and sentiment over time
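
The webhook-to-database step might look something like the sketch below, which stores incoming change events in SQLite. The payload fields (`monitor`, `platform`, `query`, `changed_at`, `summary`) are hypothetical and are not PageCrawl's actual webhook schema; check the webhook documentation and adapt the field names before using anything like this.

```python
import json
import sqlite3


def init_db(conn: sqlite3.Connection) -> None:
    """Create the table that holds one row per detected change."""
    conn.execute(
        """CREATE TABLE IF NOT EXISTS changes (
               monitor TEXT, platform TEXT, query TEXT,
               changed_at TEXT, summary TEXT)"""
    )


def store_change(conn: sqlite3.Connection, raw_body: str) -> None:
    """Parse a webhook body (hypothetical field names) and insert it."""
    payload = json.loads(raw_body)
    conn.execute(
        "INSERT INTO changes VALUES (?, ?, ?, ?, ?)",
        (
            payload["monitor"],
            payload.get("platform"),
            payload.get("query"),
            payload["changed_at"],
            payload.get("summary", ""),
        ),
    )
    conn.commit()


conn = sqlite3.connect(":memory:")  # use a file path for a persistent store
init_db(conn)
store_change(
    conn,
    json.dumps(
        {
            "monitor": "acme-pricing-perplexity",
            "platform": "perplexity",
            "query": "Acme pricing",
            "changed_at": "2025-01-15T09:00:00Z",
            "summary": "Pricing paragraph changed",
        }
    ),
)
print(conn.execute("SELECT COUNT(*) FROM changes").fetchone()[0])
```

A dashboard can then query this table by platform, query type, or date range.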

Monitoring by Geography

AI answers can vary by geography. A user in the UK might get a different AI answer about your brand than a user in the US, especially for localized platforms. If your brand operates in multiple markets, consider monitoring the same queries across regional versions of AI platforms.

Monitoring Competitor AI Presence

Do not just monitor what AI says about you. Monitor what it says about your competitors. Track your top competitors' inclusion rates, accuracy scores, and positioning. When a competitor's AI presence improves, investigate what content they published that caused the shift.

Tracking AI Model Updates

AI platforms periodically update their models. When ChatGPT or Gemini releases a new model version, answers can change dramatically. Monitor for cluster changes (multiple AI monitors triggering simultaneously), which often indicates a model update rather than a gradual drift.
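
Cluster changes can be spotted with a sliding time window over alert timestamps. The sketch below returns the largest set of distinct monitors that triggered within one window; the window length, the threshold you compare against, and the monitor names are all assumptions to tune for your own setup.

```python
from datetime import datetime, timedelta


def largest_burst(events: list[tuple[str, datetime]], window: timedelta) -> set[str]:
    """Return the largest set of distinct monitors that changed within `window`."""
    ordered = sorted(events, key=lambda event: event[1])
    best: set[str] = set()
    start = 0
    for end in range(len(ordered)):
        # Shrink the window from the left until it spans at most `window`.
        while ordered[end][1] - ordered[start][1] > window:
            start += 1
        current = {name for name, _ in ordered[start : end + 1]}
        if len(current) > len(best):
            best = current
    return best


events = [
    ("brand-review", datetime(2025, 1, 15, 10, 0)),
    ("brand-pricing", datetime(2025, 1, 15, 10, 20)),
    ("brand-vs-globex", datetime(2025, 1, 15, 11, 0)),
    ("best-tools", datetime(2025, 1, 17, 9, 0)),
]
burst = largest_burst(events, timedelta(hours=2))
if len(burst) >= 3:
    print("Possible model update:", sorted(burst))
```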

Industry-Specific Considerations

SaaS and Technology Companies

AI hallucinations about SaaS products are common because the category is crowded and features overlap between competitors. Pay special attention to:

- Feature attribution (your features credited to competitors and vice versa)

- Pricing accuracy (AI often quotes outdated pricing)

- Integration claims (AI may fabricate integrations)

- Category classification (AI may miscategorize your product)

Healthcare and Pharmaceutical Companies

AI hallucinations about healthcare brands carry additional risk because they can influence patient decisions. Monitor for:

- Incorrect drug interactions or side effects

- Wrong dosage information

- Fabricated clinical trial results

- Confusion between brand-name and generic products

Financial Services

Financial product descriptions must be accurate for regulatory compliance. Monitor for:

- Incorrect fee structures or rates

- Fabricated product features (like insurance coverage terms)

- Wrong regulatory status claims

- Outdated compliance certifications

E-commerce and Consumer Brands

Consumer brands face AI hallucinations about:

- Product specifications and materials

- Return policies and warranty terms

- Availability and shipping information

- Product safety claims

The Ongoing Nature of AI Monitoring

AI monitoring is not a one-time project. Models update, new platforms emerge, and your brand evolves. Build a sustainable monitoring practice:

- Weekly review: Check all AI monitoring alerts and score new responses

- Monthly analysis: Review trends in accuracy, inclusion, and sentiment

- Quarterly content updates: Publish or update content addressing the most persistent inaccuracies

- Annual audit: Review your full query list and platform coverage

Choosing Your PageCrawl Plan

PageCrawl's Free plan lets you monitor 6 pages with 220 checks per month, which is enough to validate the approach on your most critical pages. Most teams graduate to a paid plan once they see the value.

| Plan | Price | Pages | Checks / month | Frequency |
|---|---|---|---|---|
| Free | $0 | 6 | 220 | every 60 min |
| Standard | $8/mo or $80/yr | 100 | 15,000 | every 15 min |
| Enterprise | $30/mo or $300/yr | 500 | 100,000 | every 5 min |
| Ultimate | $99/mo or $999/yr | 1,000 | 100,000 | every 2 min |

Annual billing saves two months across every paid tier. Enterprise and Ultimate scale up to 100x if you need thousands of pages or multi-team access.

When an AI platform quietly changes how it describes your product, catching that shift within a day lets you publish corrective content before the wrong answer propagates to more users. All plans include the PageCrawl MCP Server, so your team can ask Claude to pull every AI response change mentioning your brand over the last month and surface which platforms shifted their description. That turns your monitoring history into an auditable accuracy log rather than a set of alerts you scan manually. Paid plans unlock write access so AI tools can create monitors and trigger checks through conversation.

Standard at $80/year covers 100 monitors, enough to track your brand across Perplexity, Google AI Overviews, and Bing Copilot for every pricing, comparison, and category query that drives purchase decisions. Checks can run as often as every 15 minutes, within the plan's 15,000 checks per month, so you see the change before your customers do. Enterprise at $300/year scales to 500 monitors with checks as often as every 5 minutes, covering the full matrix of queries, competitor comparisons, and regional AI platform variants that larger brand teams need.

Getting Started

Start with three Perplexity monitors: your brand name, your top category keyword, and your brand vs. top competitor. Set daily check frequency and enable email or Slack notifications. This takes about 10 minutes and immediately reveals how AI represents your brand.

Expand to Google AI Overviews for your same three queries. Then add Bing Copilot. Within an hour, you have cross-platform monitoring for your most important brand queries.

For a deeper dive into monitoring your brand on ChatGPT specifically, including API-based approaches for platforms without persistent URLs, see our guide on monitoring your brand in ChatGPT and AI search.

PageCrawl's free tier includes 6 monitors, enough to cover 2 brand queries across 3 AI platforms. The Standard plan ($80/year) with 100 monitors provides coverage for a comprehensive multi-query, multi-platform monitoring matrix.