You can find all your invoices here.

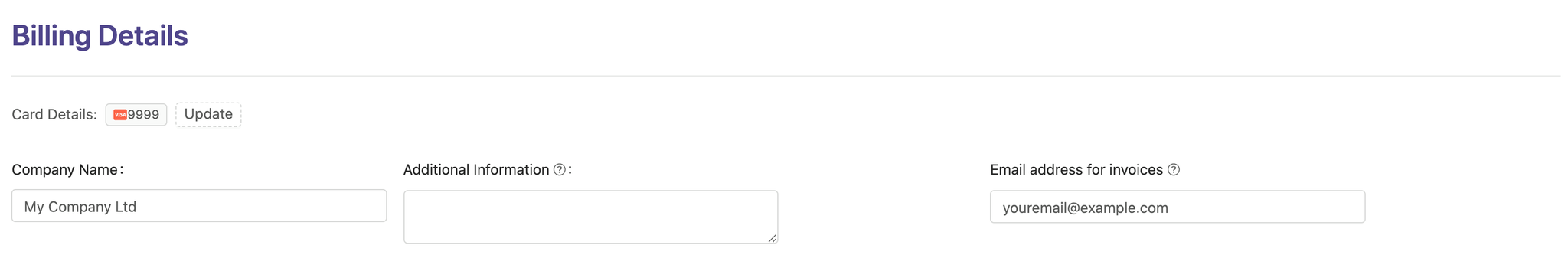

If you wish to receive invoices to your email each month/year, enter your email address in the billing details section:

To change or upgrade your plan, go to your Subscription settings and choose the plan you want to switch to. Upgrades and downgrades are prorated, meaning that the unused time on your current plan is applied as a credit toward the next payment. For example, suppose you subscribed to the $8/mo plan, used it for half a month, and then decided to upgrade to the $30/mo plan. When you upgrade, $4 is credited back, so the remaining half month on the $30/mo plan costs you only $11 ($15 minus the $4 credit).
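The proration arithmetic can be sketched in a few lines of Python. This is only an illustration of the calculation described here, not the billing code itself; the function name and the rounding to cents are assumptions.

```python
def prorated_upgrade_cost(old_price: float, new_price: float,
                          fraction_used: float) -> tuple[float, float]:
    """Return (credit, amount_due) when switching plans mid-period.

    fraction_used is the share of the billing period already consumed,
    e.g. 0.5 for half a month.
    """
    if not 0.0 <= fraction_used <= 1.0:
        raise ValueError("fraction_used must be between 0 and 1")
    remaining = 1.0 - fraction_used
    credit = old_price * remaining         # unused time on the old plan
    new_plan_cost = new_price * remaining  # rest of the period on the new plan
    return round(credit, 2), round(new_plan_cost - credit, 2)


credit, due = prorated_upgrade_cost(old_price=8, new_price=30, fraction_used=0.5)
print(credit, due)  # 4.0 11.0
```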

You can cancel your subscription by going to your Subscription settings and clicking on the red "Downgrade to Free" button. This will open a multi-step confirmation modal where you can optionally provide feedback about why you are canceling. To complete the cancellation, you will need to type "CANCEL" and confirm.

Once confirmed, your subscription will not end immediately. You will retain full access to your paid features until the end of your current billing period (grace period). After that date, your account will automatically downgrade to the Free plan.

Unfortunately, for security reasons and to prevent service abuse, email addresses cannot be changed directly by users.

To change your email address, please contact support at help_me@pagecrawl.io from your originally registered email address. We will verify the information and get back to you as soon as possible.

Email address for 'Free Forever' plan users cannot be changed to prevent service abuse.

Unfortunately, it is not yet possible to pay via PayPal.

We support subscription billing by credit/debit card, Apple Pay, and Google Pay for monthly and annual billing intervals.

We accept all major credit and debit cards for subscriptions.

For Ultimate plans paid annually, we also support:

If you would like to arrange an alternative payment method, please contact support at support@pagecrawl.io.

The most common reasons for a failed transaction include insufficient funds, incorrect card details, and suspicions of fraud.

If a transaction fails, first check that the card details you entered are correct and that there are enough funds in your account to make the purchase.

If the transaction keeps getting declined, try using another card or contact your card issuer. In most cases, your card issuer will be able to remove the block and allow the transaction to go through.

Common reasons for a payment failure:

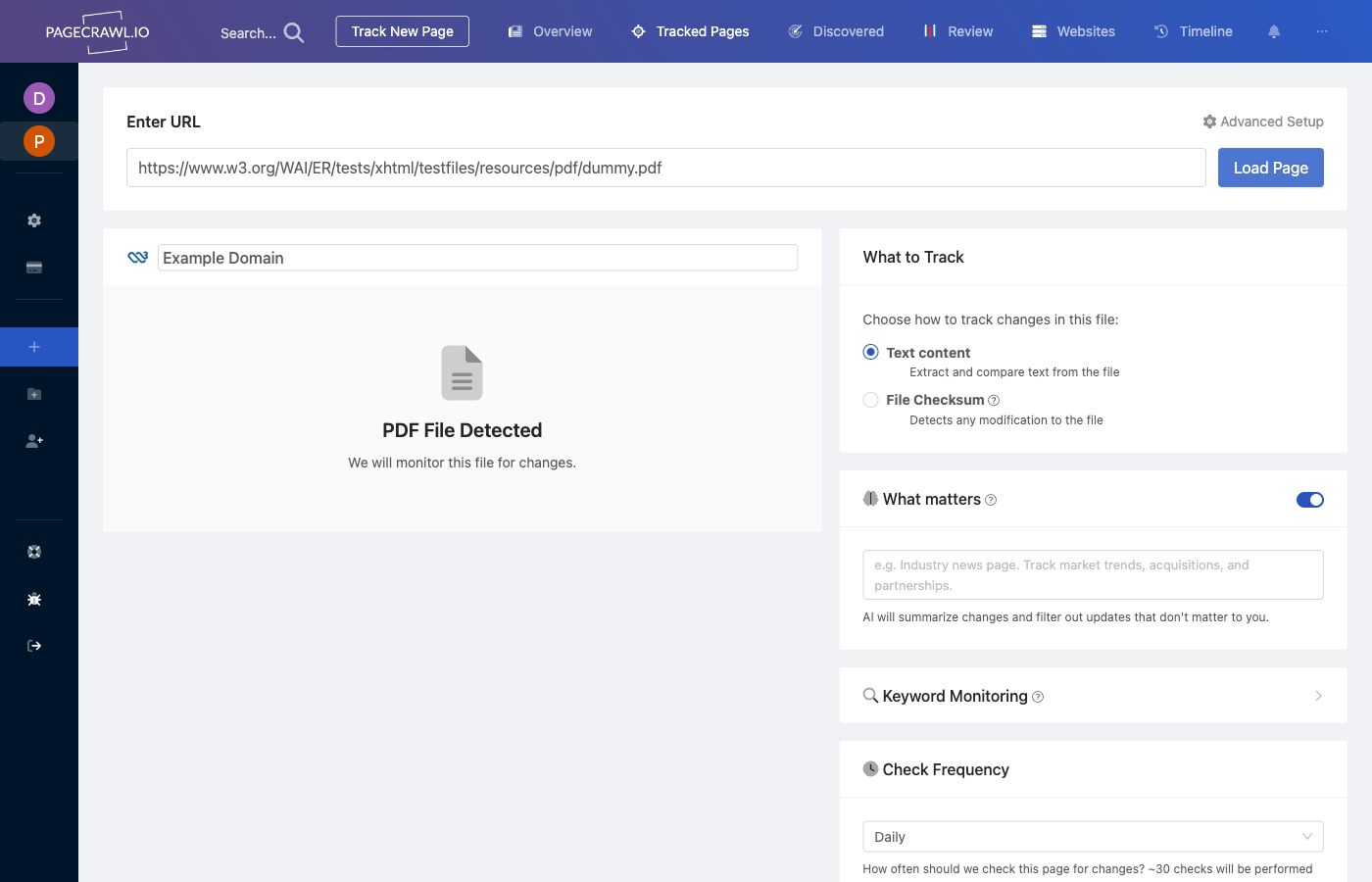

PageCrawl can monitor PDF files hosted online and notify you when the text content changes. It extracts text from the PDF, compares it against the previous version, and highlights exactly what was added, removed, or modified.

PDFs behind login authentication are also supported. Configure an authentication setup first, then select it when adding the PDF to monitor.

| Method | What It Detects | Diff Available |
|---|---|---|
| PDF text tracking | Text content changes (additions, deletions, edits) | Yes, line-by-line diff |
| File checksum | Any modification to the file (including metadata, images) | No, only detects that something changed |

Use PDF text tracking when you need to see exactly what text changed. Use file checksum monitoring when you need to detect any modification, including non-text changes.
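The difference between the two methods can be illustrated with a short Python sketch. It is not PageCrawl's implementation: the choice of SHA-256 for the checksum and of a unified diff for the text comparison are assumptions made for the example.

```python
import difflib
import hashlib


def file_checksum(data: bytes) -> str:
    """Fingerprint the whole file; any byte-level change alters the result."""
    return hashlib.sha256(data).hexdigest()


def text_diff(old_text: str, new_text: str) -> list[str]:
    """Return only the added (+) and removed (-) lines between two versions."""
    diff = difflib.unified_diff(
        old_text.splitlines(), new_text.splitlines(),
        fromfile="previous", tofile="current", lineterm="",
    )
    return [
        line for line in diff
        if line.startswith(("+", "-")) and not line.startswith(("+++", "---"))
    ]


old = "Price: $10\nTerms apply"
new = "Price: $12\nTerms apply"
print(file_checksum(old.encode()) == file_checksum(new.encode()))  # False
print(text_diff(old, new))  # ['-Price: $10', '+Price: $12']
```

The checksum only reports that the two versions differ, while the diff shows which lines changed.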

While SMS messages can be useful for mission-critical applications, we do not include native SMS notifications in our subscription plans, in order to avoid increasing subscription costs.

For personal use, we suggest Telegram Messenger as an alternative to SMS notifications. It is free of charge and only requires an Internet connection on your mobile phone, which you most likely already have and will need anyway to review what has changed on your monitored page.

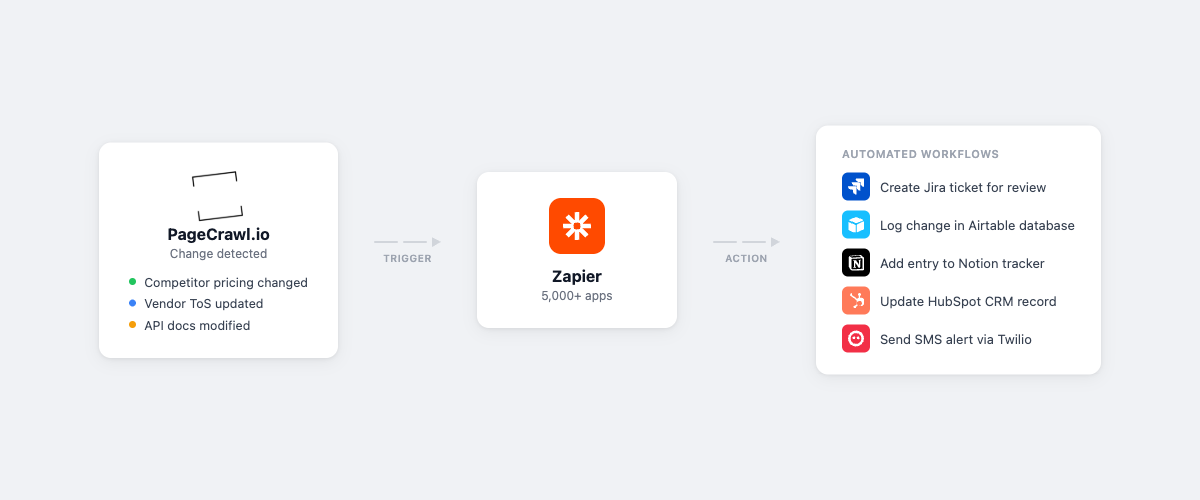

If you really need to receive change notifications by SMS, you can set up the Zapier integration to send SMS messages. Zapier makes it easy to connect our application to over 2000 services (at an additional cost, and there may be a monthly limit on the number of SMS messages).

We have integrations with other notification channels; visit PageCrawl.io Integrations to learn more.

We aim to respond to your inquiries promptly. However, when the number of support requests increases, requests and emails from Enterprise customers are prioritized over those from Standard customers. Enterprise customers can therefore expect faster response times, as well as a higher level of support if they are unable to set up a page the way they want.

For technical support our response times are prioritized according to your subscription plan:

No. We price our services based on the number of pages primarily and you can upgrade your plan if you need to track more pages.

The Standard plan includes 15,000 checks, the Enterprise plan allows for 100,000 checks, and the Ultimate plan also includes 100,000 checks each month. All paid plans can be purchased in multiples if you require more pages checked or more frequent checks.

It all depends on how many pages you want to track and how frequently. Also, adjusting your schedule may reduce the number of checks needed. You may start with the Standard plan and upgrade if you notice that you need more.

A few rules of thumb:

If your estimated number of checks for this period will be over the limit, you will see an alert. You can check your usage statistics to find out your current estimate.
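As a rough aid, the number of checks can be estimated as pages × checks per day × days in the period. The sketch below applies that formula to the monthly limits quoted above; the function names and the 30-day month are assumptions, and the usage statistics in your account remain the authoritative figure.

```python
# Monthly check limits as stated for each paid plan (single subscription).
PLAN_MONTHLY_CHECKS = {"Standard": 15_000, "Enterprise": 100_000, "Ultimate": 100_000}


def estimate_monthly_checks(pages: int, checks_per_day: float, days: int = 30) -> int:
    """Estimate how many checks a schedule consumes in one period."""
    return round(pages * checks_per_day * days)


def plans_that_fit(estimated_checks: int) -> list[str]:
    """List the plans whose monthly limit covers the estimate."""
    return [plan for plan, limit in PLAN_MONTHLY_CHECKS.items()
            if estimated_checks <= limit]


estimate = estimate_monthly_checks(pages=100, checks_per_day=4)
print(estimate, plans_that_fit(estimate))
# 12000 ['Standard', 'Enterprise', 'Ultimate']
```

Checking less often has the same effect as tracking fewer pages: halving `checks_per_day` halves the estimate.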

Deleting your account results in the loss of ALL data associated with it.

To delete your account, go to Account Settings, scroll to the bottom of the page, press Permanently delete my account, and follow the instructions.

PageCrawl.io monitors websites for changes and sends instant notifications through your preferred channels. This guide walks you through connecting PageCrawl.io with Microsoft Teams to receive alerts directly in your Teams channels.

Before starting, ensure you have:

A PageCrawl.io account

→ Sign up here if you don't have one yet

Microsoft 365 For Business subscription

Basic Teams plans don't support external webhooks - you need a Business plan

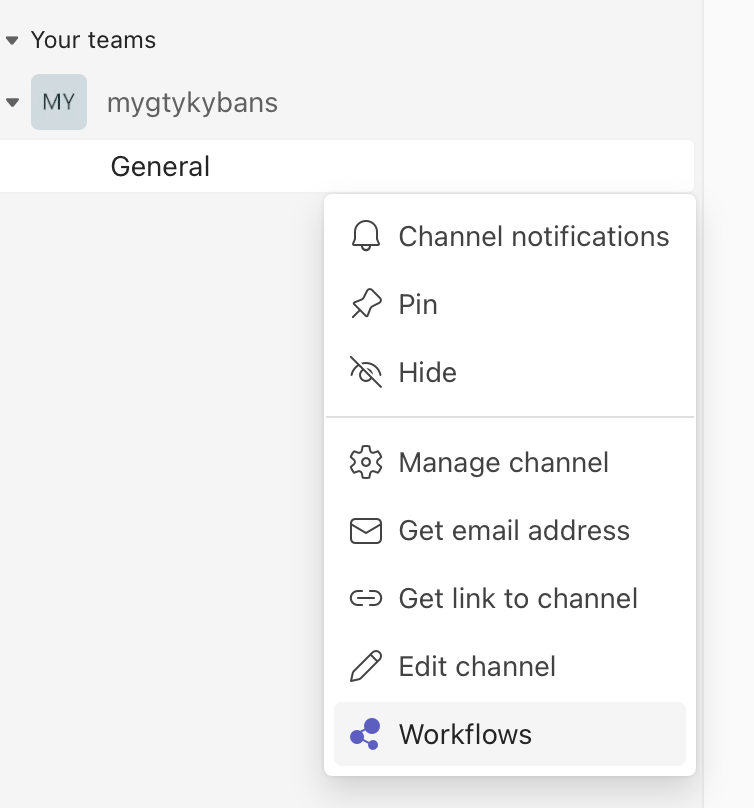

1.1 In your Teams channel, click the Workflows menu

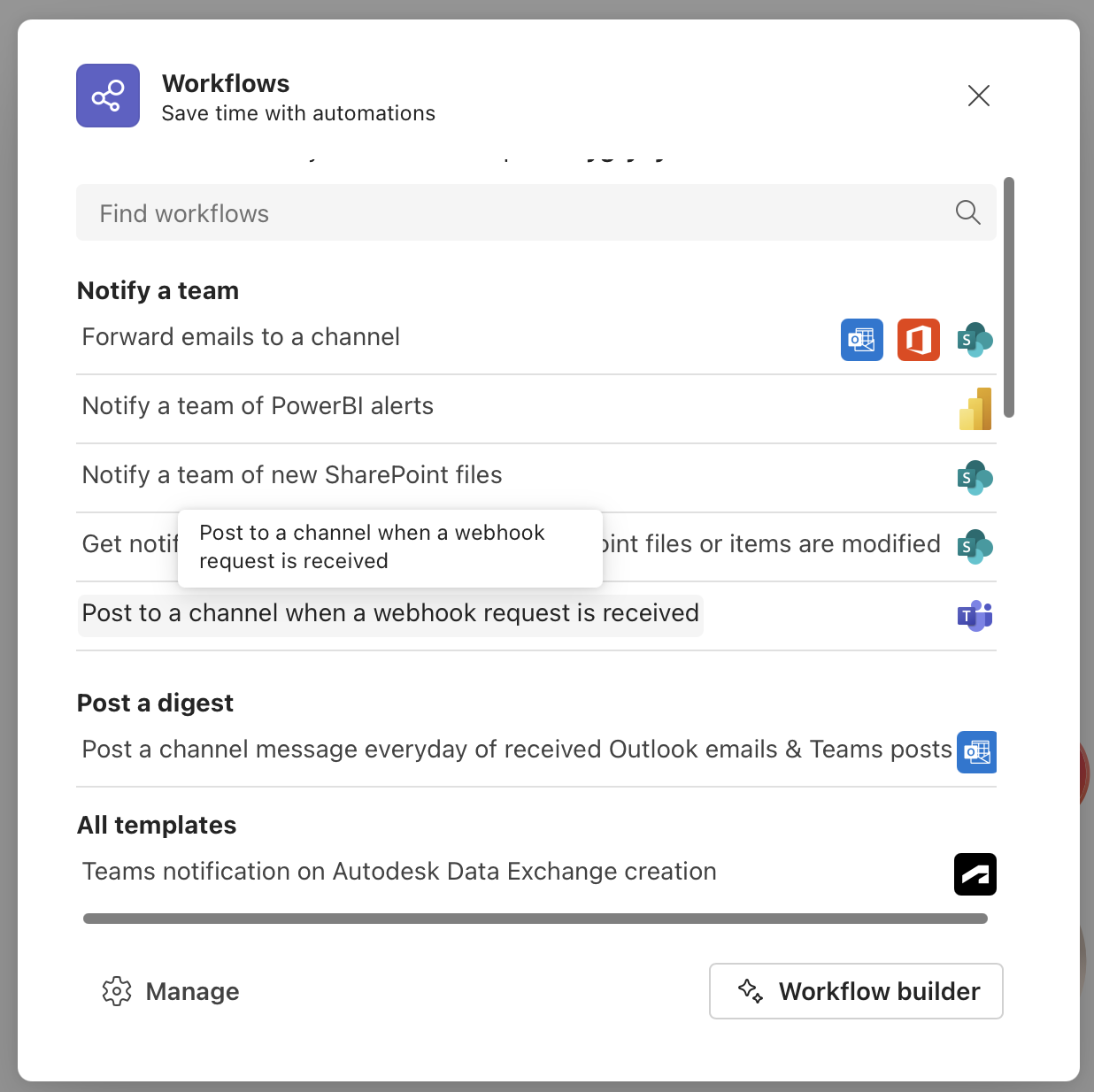

1.2 Select "Post to a channel when a webhook request is received"

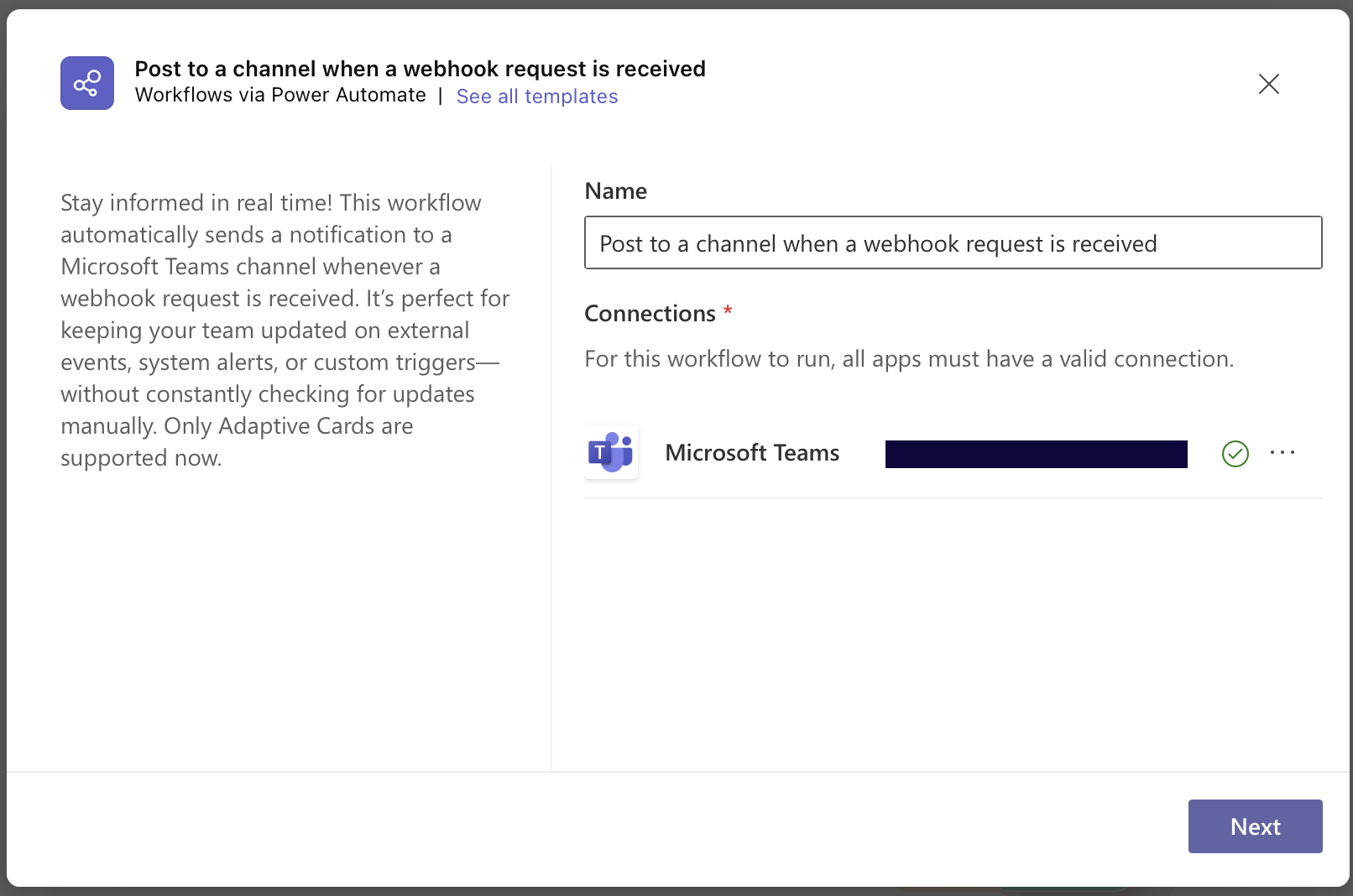

1.3 Click Next and name your workflow. Use a descriptive name like "PageCrawl Website Monitoring".

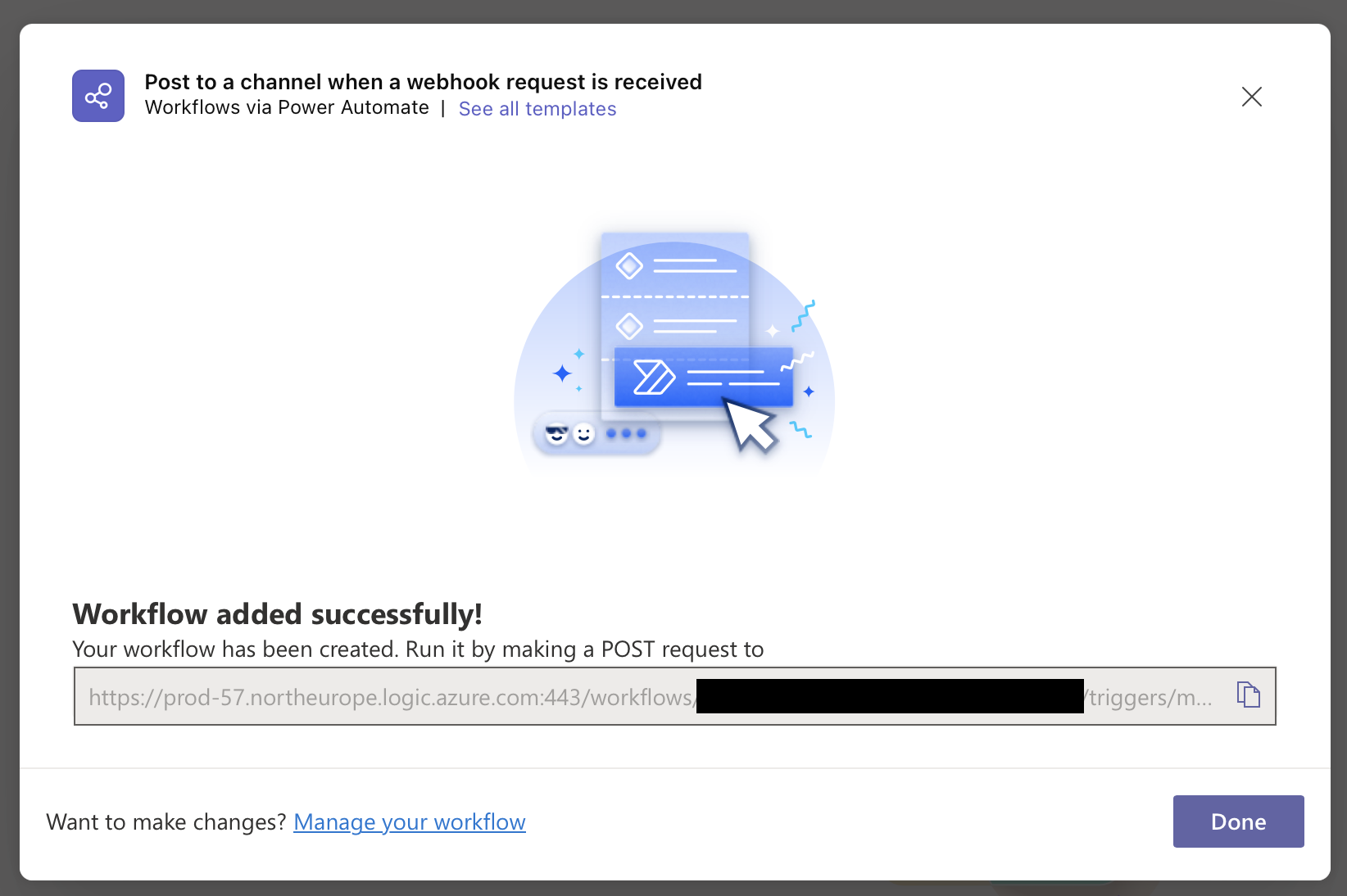

1.4 Copy the generated webhook URL

Choose your notification scope:

Option A: Monitor All Pages

→ Go to Workspace Settings

→ Paste the Teams webhook URL

→ Save changes

Option B: Monitor Specific Pages

→ Open settings for individual pages

→ Add the Teams webhook URL

→ Save changes

Tip: Set a default webhook for all pages, then override for specific ones that need special handling.

Not working? Check that:

We do have more supported notification channels to suit everyone's preferences.

PageCrawl allows you to track changes in websites and get notified instantly via your preferred method. In this article, we will discuss how you can set up PageCrawl to receive notifications in Discord.

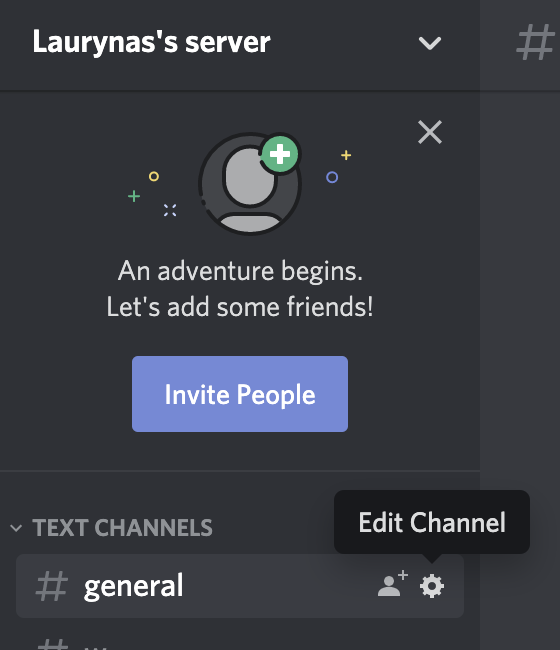

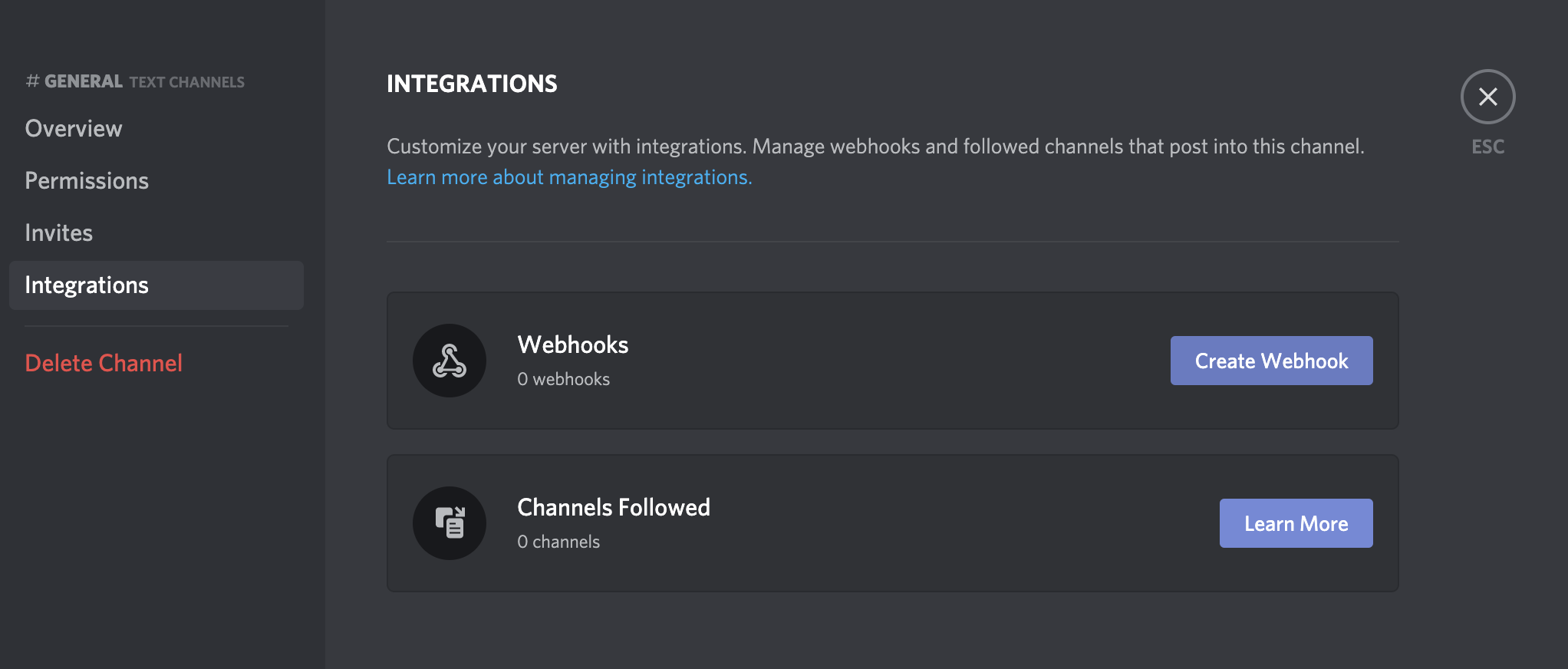

You need a PageCrawl.io account. This works with both Free and Paid accounts. If you don't already have one, go here to register an account.

Follow the steps below to retrieve a Discord Webhook URL.

If you would like to receive notifications for all tracked pages, simply paste the webhook URL into your user notification preferences.

If you only want to be notified in Discord about a single page, set this Webhook URL on that specific page.
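Before pasting the webhook URL into PageCrawl, you may want to confirm that it works. The sketch below posts a plain test message to a Discord webhook using only the Python standard library. It is a generic check, not the request PageCrawl itself sends; the function names and the User-Agent value are assumptions.

```python
import json
import urllib.request


def build_discord_request(webhook_url: str, message: str) -> urllib.request.Request:
    """Prepare a POST request carrying a simple text message."""
    body = json.dumps({"content": message}).encode("utf-8")
    headers = {
        "Content-Type": "application/json",
        # Some services reject the default urllib User-Agent, so set our own.
        "User-Agent": "webhook-test/1.0",
    }
    return urllib.request.Request(webhook_url, data=body, headers=headers, method="POST")


def send_test_message(webhook_url: str, message: str = "Webhook test") -> int:
    """Send the message and return the HTTP status code (2xx means success)."""
    request = build_discord_request(webhook_url, message)
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status
```

Call `send_test_message("<your webhook URL>")` and check that the message appears in the channel you selected.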

What if I can't edit the server? Make sure the server owner has given you permission to edit the channel.

I didn't receive a notification. Please wait for the page to change. We only send a notification when we detect a change.

I receive too many notifications. What can I do? You can set up notification rules to be notified only when, for example, text disappears or a number increases.

We do have more supported notification channels to suit everyone's preferences.

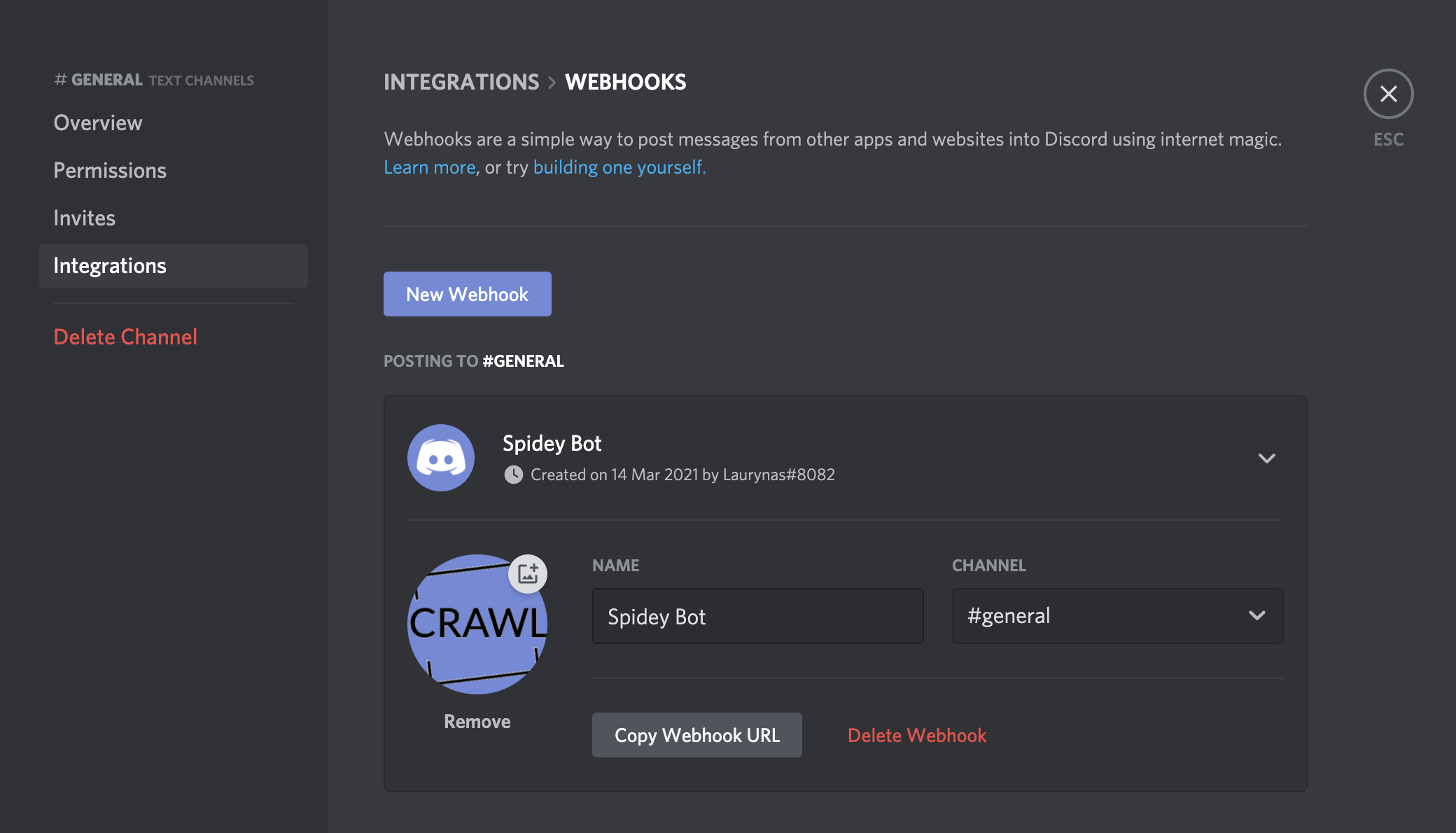

PageCrawl.io allows you to track changes in websites and get notified instantly via your preferred method. In this article, we will discuss how you can set up PageCrawl to receive notifications in Telegram.

You need a PageCrawl.io account. This works with both Free and Paid accounts. If you don't already have one, go here to register an account.

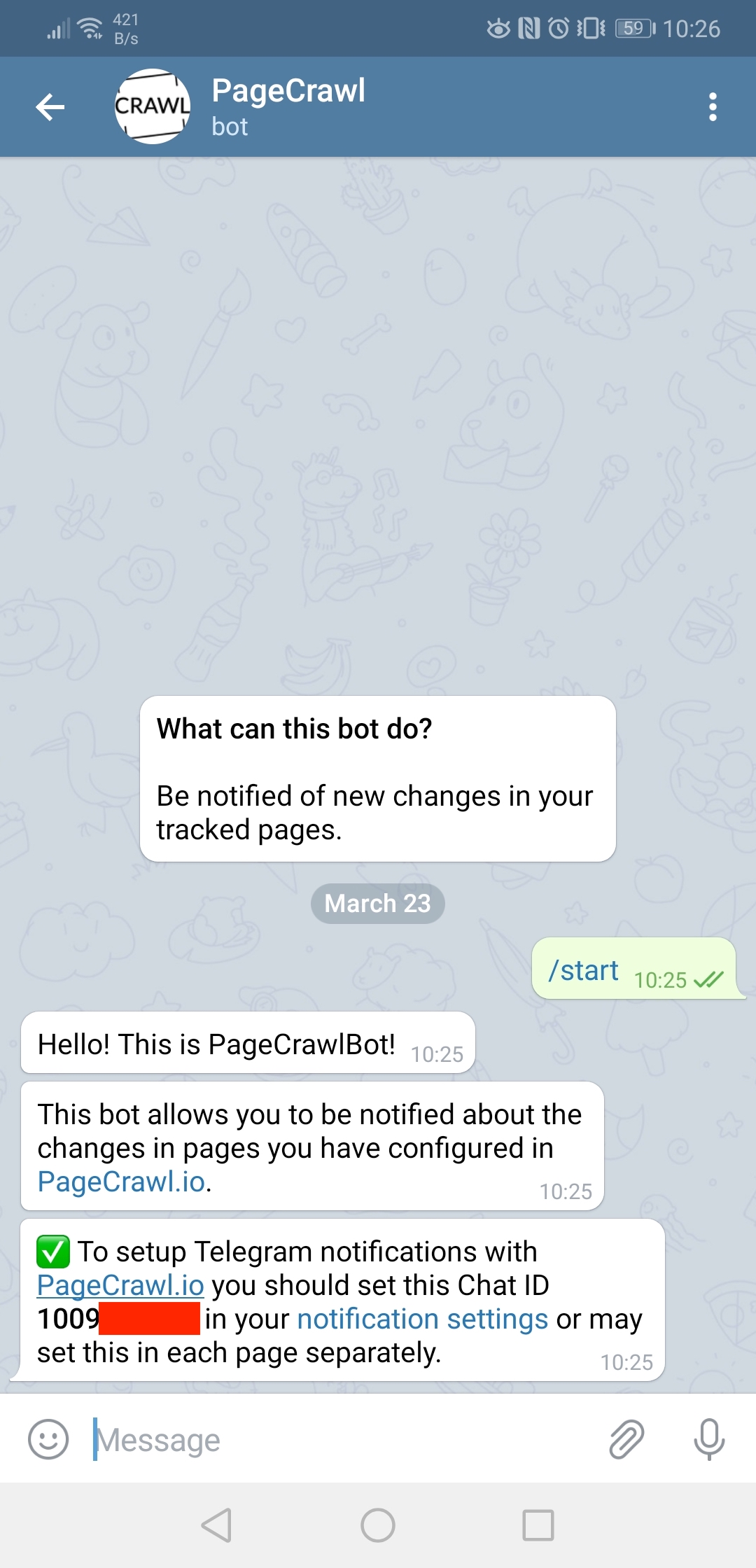

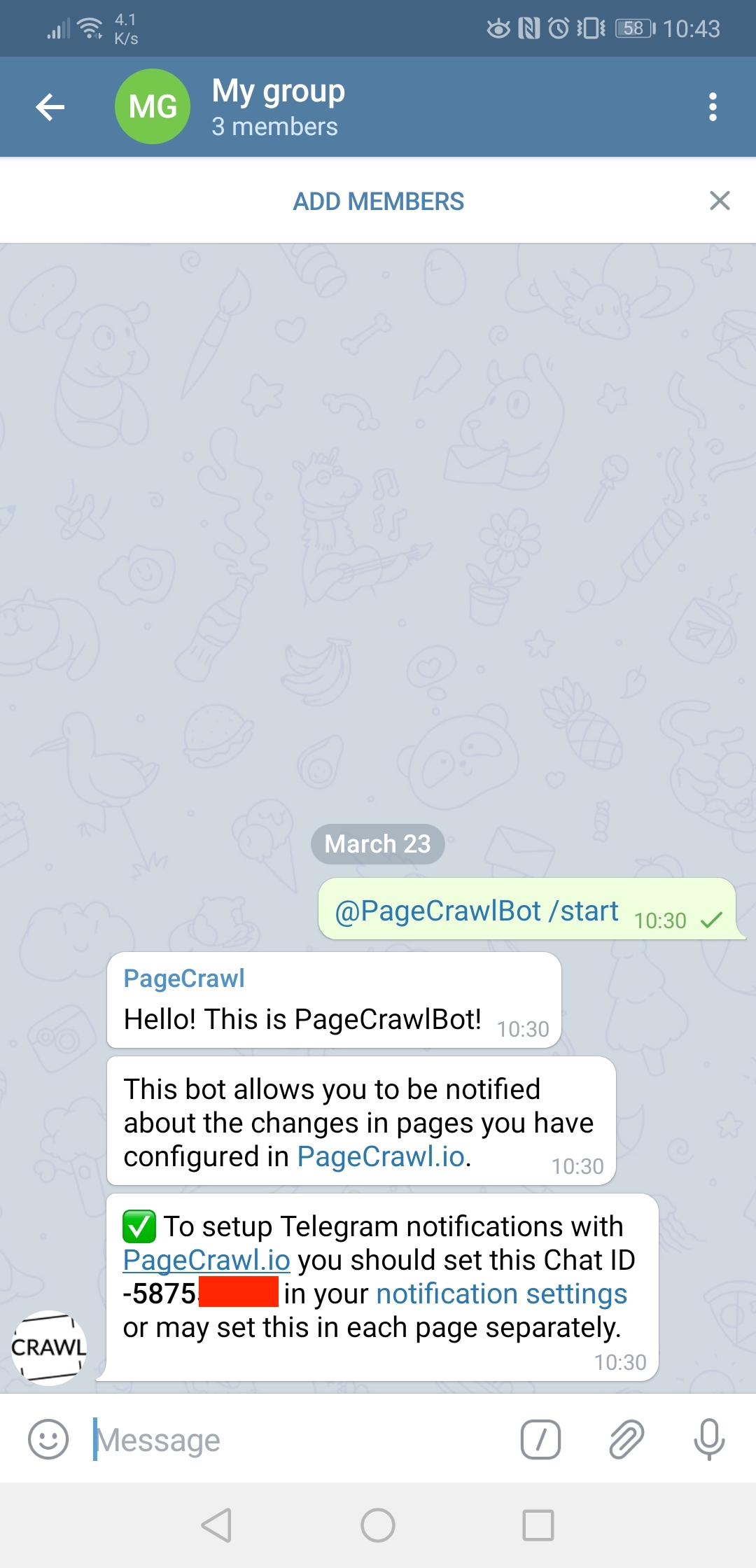

Follow the steps below to retrieve a Telegram Chat ID. You need it to receive notifications in a 1-to-1 chat, a channel, or a group conversation.

Simply begin a conversation with @PageCrawlBot and you will receive instructions on how to configure it.

The instructions for Channels and Groups are identical. To include the bot in a Channel or Group, invite @PageCrawlBot to it. You will likely also need to adjust the bot's permissions so that it can read and send messages. To find out which code to enter in the PageCrawl.io settings, send a /start message to the bot: @PageCrawlBot /start

Keep in mind that Channels and Group conversations have a negative chat ID, while 1-to-1 conversations always have a positive chat ID.
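A common mistake is dropping the leading minus sign when copying a group or channel ID. The helper below is a small, hypothetical sanity check based only on the sign rule described here; it does not contact Telegram.

```python
def parse_chat_id(raw: str) -> int:
    """Convert a pasted chat ID to an integer, keeping any leading minus sign."""
    return int(raw.strip())


def chat_kind(chat_id: int) -> str:
    """Classify a chat ID by its sign."""
    if chat_id == 0:
        raise ValueError("0 is not a valid chat ID")
    return "group or channel" if chat_id < 0 else "1-to-1 chat"


print(chat_kind(parse_chat_id(" -1001234567890 ")))  # group or channel
print(chat_kind(parse_chat_id("123456789")))         # 1-to-1 chat
```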

If you would like to receive notifications for all tracked pages, enter the Chat ID you obtained previously in your user notification preferences.

If you only want to be notified in Telegram about a single page, set this Chat ID on that specific page.

What if I can't edit the server? Make sure the server owner has given you permission to edit the channel.

I didn't receive a notification. Please wait for the page to change. We only send a notification when we detect a change.

I receive too many notifications. What can I do? You can set up notification rules to be notified only when, for example, text disappears or a number increases.

We do have more supported notification channels to suit everyone's preferences.

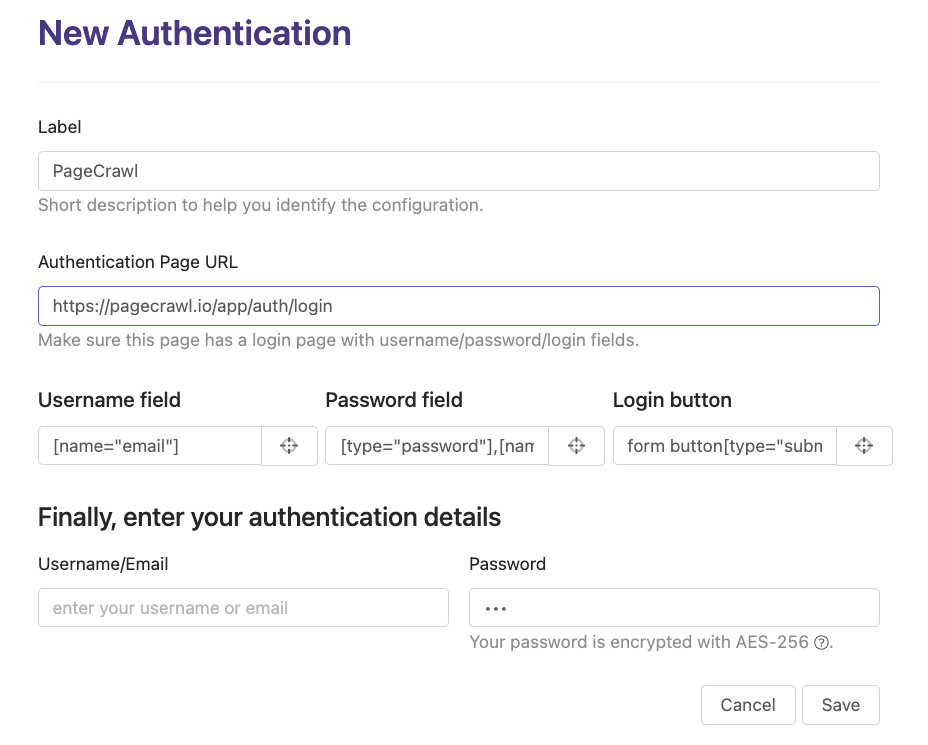

If you're looking to track pages on websites that require login authentication, the answer is yes – it is possible. Please note that this feature is only available on paid plans.

Monitoring password-protected pages is a two-step process:

Before you can monitor password-protected pages, you need to set up an authentication configuration:

You can create multiple authentication configurations for different websites.

Once your authentication is configured:

The system automatically detects and shows only authentication configurations that match the domain of the URL you're monitoring. For example, if you're monitoring https://app.example.com/dashboard, it will show authentication configs set up for example.com.
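One plausible way to picture this matching is a hostname suffix check, sketched below in Python. This is an assumption made for illustration; the actual matching rules may differ, and the function name is hypothetical.

```python
from urllib.parse import urlsplit


def config_matches_url(config_domain: str, page_url: str) -> bool:
    """True if the URL's host is the configured domain or one of its subdomains."""
    host = (urlsplit(page_url).hostname or "").lower()
    domain = config_domain.lower().lstrip(".")
    return host == domain or host.endswith("." + domain)


print(config_matches_url("example.com", "https://app.example.com/dashboard"))  # True
print(config_matches_url("example.com", "https://notexample.com/"))            # False
```

Requiring a dot before the domain prevents `notexample.com` from matching a configuration for `example.com`.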

If you want to track files such as PDFs, Excel spreadsheets, CSVs, or Word documents, you're in luck. These types of files can also be tracked, even if they are behind login authentication. Simply provide the link to the file and select the appropriate authentication configuration.

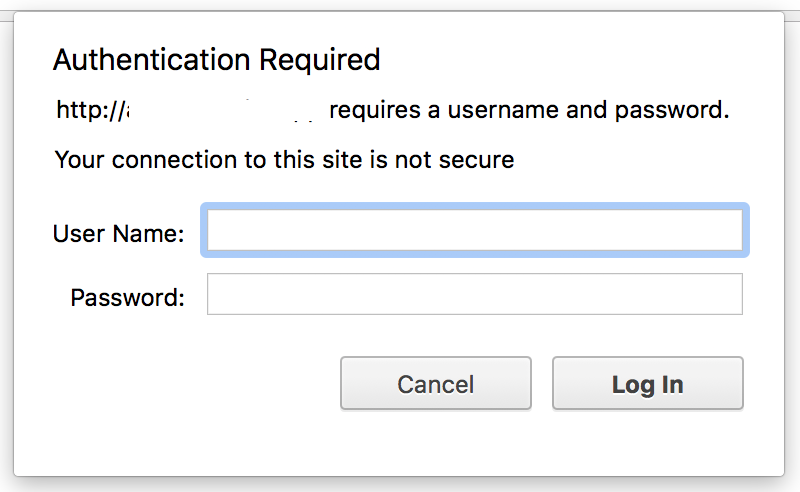

In case the website is using "HTTP Basic Authentication" (the browser popup that asks for credentials), you can enter the credentials under "Advanced Settings" when setting up your monitored page. This is different from form-based login authentication.
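For reference, HTTP Basic Authentication sends the credentials in an Authorization header, encoded as Base64 of `username:password`. The Python sketch below shows how that header is built; the credentials are placeholders, and the helper name is only illustrative.

```python
import base64


def basic_auth_header(username: str, password: str) -> dict[str, str]:
    """Build the Authorization header used by HTTP Basic Authentication."""
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    return {"Authorization": f"Basic {token}"}


print(basic_auth_header("user", "pass"))
# {'Authorization': 'Basic dXNlcjpwYXNz'}
```

Because Base64 is an encoding rather than encryption, Basic Authentication should only be used over HTTPS.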

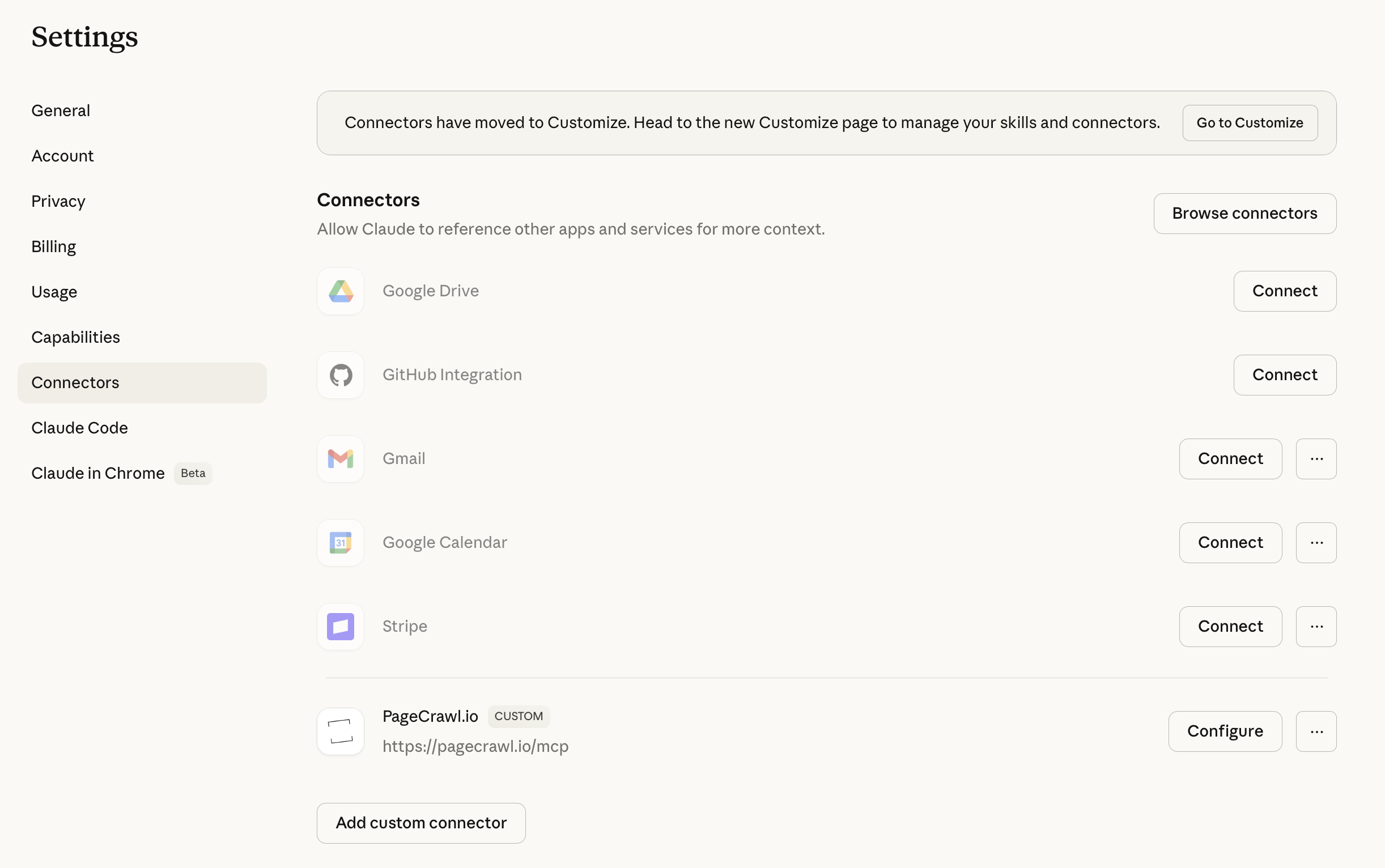

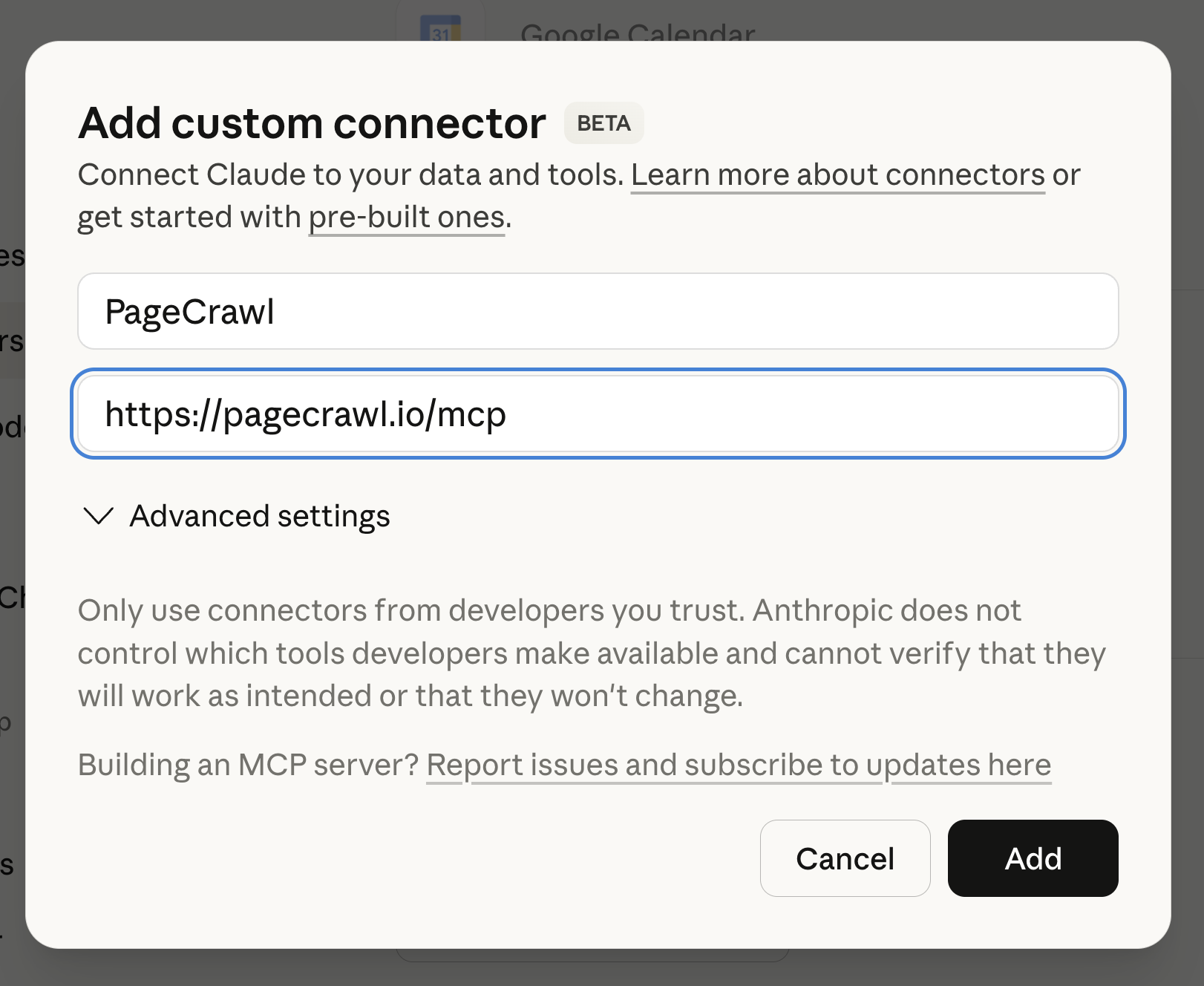

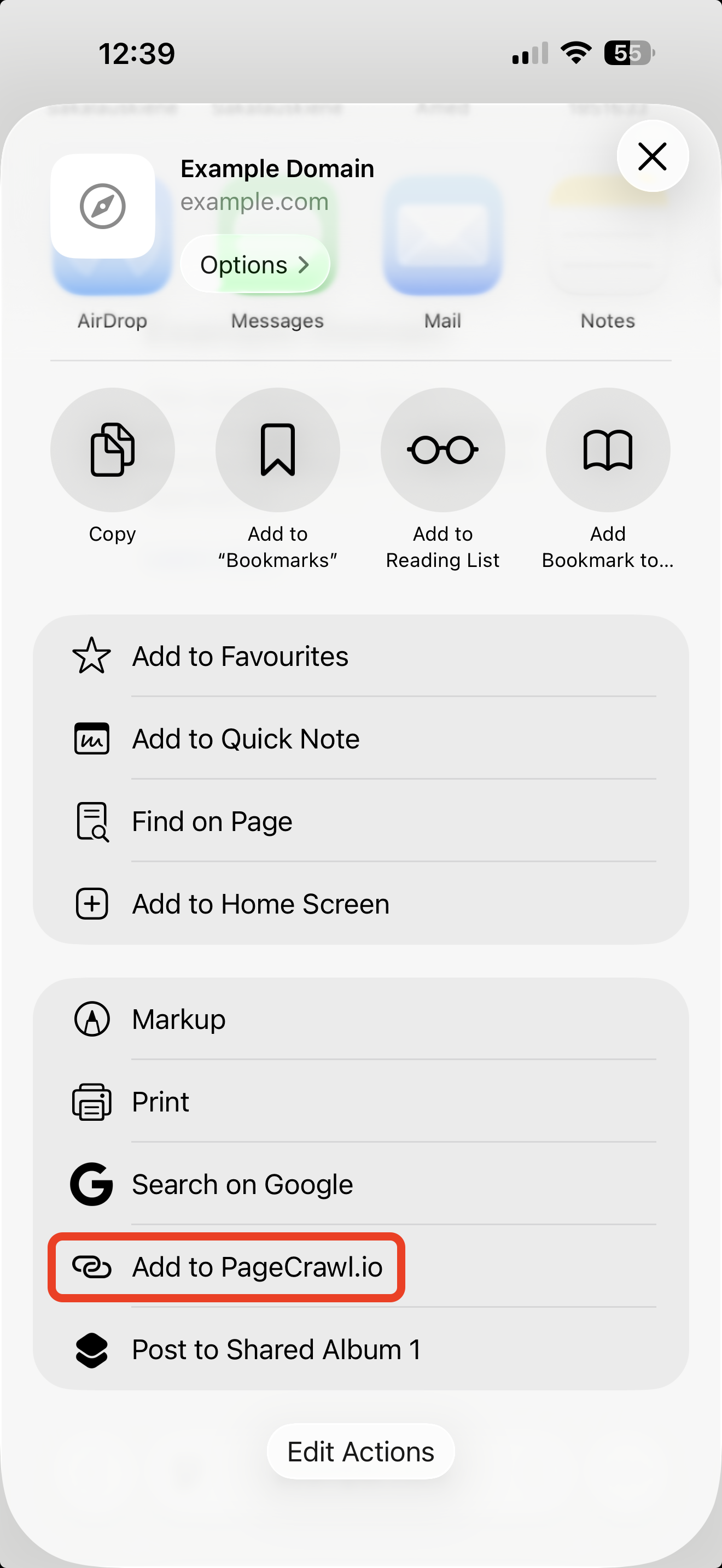

PageCrawl provides three ways to integrate with external systems: a REST API, webhooks, and RSS feeds.

API and webhooks are available on paid plans.

The REST API lets you manage monitors programmatically, including creating pages, retrieving change history, and triggering checks. Find your API key in Settings > API.

See the API & Webhooks guide for endpoints and authentication details. For the full endpoint reference and schemas, see pagecrawl.io/developers.

Webhooks send HTTP POST requests to your endpoint whenever a change is detected or an error occurs. Configure them in Settings > Workspace > Integrations > Webhooks.

See the Webhook Integration guide for setup, payload fields, and example payloads.
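To experiment with webhooks locally, a minimal receiver can be written with the Python standard library. The sketch below assumes only that the request body is a JSON object; it does not assume any particular payload field names, which are documented in the Webhook Integration guide. The port number and function names are arbitrary choices for the example.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def parse_webhook_body(raw: bytes) -> dict:
    """Decode a webhook body and make sure it is a JSON object."""
    payload = json.loads(raw.decode("utf-8"))
    if not isinstance(payload, dict):
        raise ValueError("expected a JSON object")
    return payload


class WebhookHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        try:
            payload = parse_webhook_body(self.rfile.read(length))
        except ValueError:  # also covers invalid JSON and bad encoding
            self.send_response(400)
            self.end_headers()
            return
        print("Received webhook with fields:", sorted(payload))
        self.send_response(204)  # acknowledge without a response body
        self.end_headers()


def serve(port: int = 8000) -> None:
    """Run the receiver until interrupted, e.g. serve(8000)."""
    HTTPServer(("0.0.0.0", port), WebhookHandler).serve_forever()
```

Your endpoint must be reachable from the internet for webhooks to arrive, so a local test usually needs a tunnel or a publicly hosted server.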

Access recent changes in Atom RSS format. Generate a public RSS URL for a single page or for all pages in the workspace.

See the RSS Feeds guide for setup instructions.

PageCrawl can monitor CSV (comma-separated values) files hosted online and notify you when their content changes. It retrieves the file, compares the data against the previous version, and shows exactly what rows or values were added, removed, or modified.

CSV files behind login authentication are supported. Configure an authentication setup first, then select it when adding the file.
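The idea of a row-level comparison can be sketched as follows. This is an illustration only, not PageCrawl's comparison logic; in this simplified version a modified row shows up as one removed row plus one added row.

```python
import csv
import io


def csv_rows(text: str) -> list[tuple[str, ...]]:
    """Parse CSV text into a list of row tuples."""
    return [tuple(row) for row in csv.reader(io.StringIO(text))]


def diff_rows(old_text: str, new_text: str):
    """Return (added, removed) rows between two versions of a CSV file."""
    old_rows, new_rows = csv_rows(old_text), csv_rows(new_text)
    added = [row for row in new_rows if row not in old_rows]
    removed = [row for row in old_rows if row not in new_rows]
    return added, removed


old = "sku,price\nA1,10\nB2,20\n"
new = "sku,price\nA1,12\nB2,20\nC3,5\n"
print(diff_rows(old, new))
# ([('A1', '12'), ('C3', '5')], [('A1', '10')])
```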

PageCrawl can monitor Excel files hosted online and notify you when their content changes. It extracts text and data from the spreadsheet, compares it against the previous version, and shows exactly what was added, removed, or modified.

Supported formats: xls, xlsx, ods

Excel files behind login authentication are supported. Configure an authentication setup first, then select it when adding the file.

PageCrawl can monitor PowerPoint presentations hosted online and notify you when their text content changes. It extracts text from the slides, compares it against the previous version, and shows exactly what was added, removed, or modified.

Supported formats: pptx

PowerPoint files behind login authentication are supported. Configure an authentication setup first, then select it when adding the file.

PageCrawl can monitor Word documents hosted online and notify you when their text content changes. It extracts text from the document, compares it against the previous version, and shows exactly what was added, removed, or modified.

Supported formats: doc, docx, odt

Word files behind login authentication are supported. Configure an authentication setup first, then select it when adding the file.

PageCrawl.io allows you to track changes in websites and get notified instantly via your preferred method. In this article, we will discuss how you can set up PageCrawl to receive notifications in Slack.

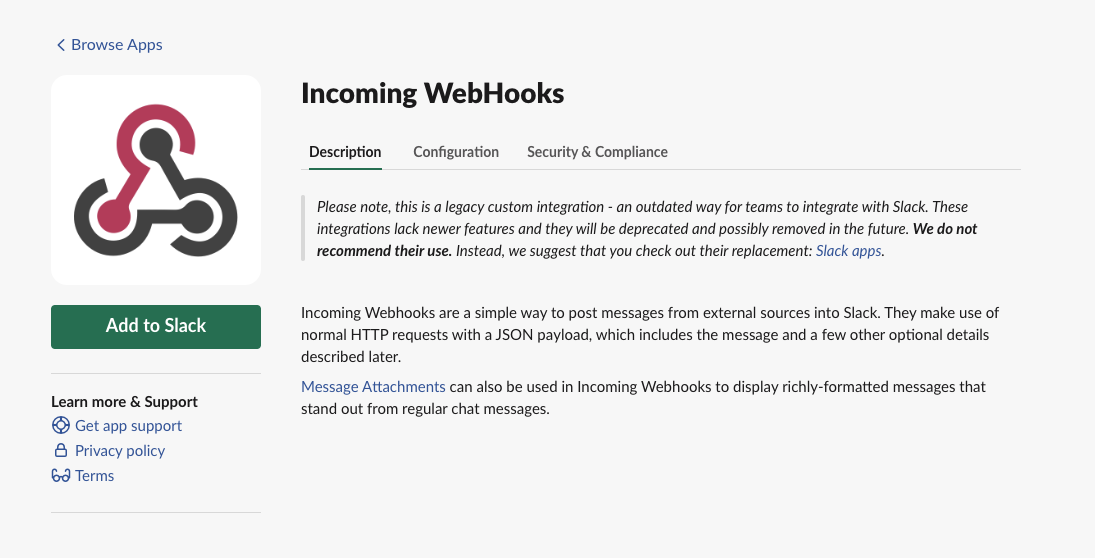

Follow the steps below to create a new Incoming Webhook connector.

Visit https://slack.com/apps/A0F7XDUAZ-incoming-webhooks to enable "Incoming WebHooks" for your workspace.

Please note that this is a legacy custom integration, an outdated way for teams to integrate with Slack. You may create a Slack app instead, but setting up a Slack app takes significantly longer, so we suggest using the legacy integration.

Simply click the "Add to Slack" button. You may be prompted to sign in to your Slack account.

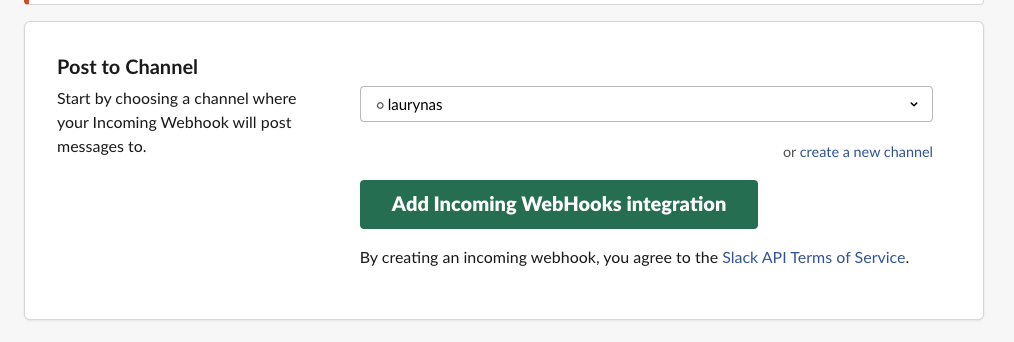

Here you will need to select a Slack channel where the messages from PageCrawl.io bot should be sent to and press "Add Incoming Webhook integration"

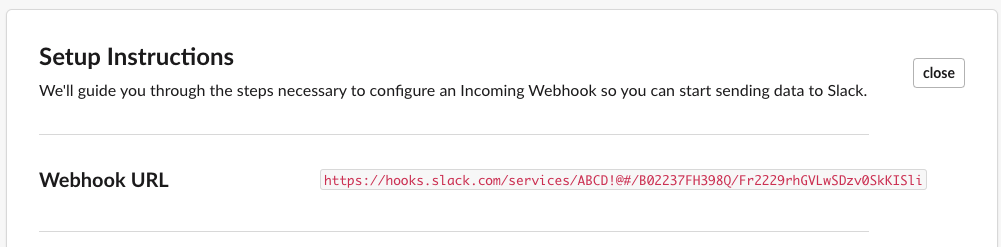

Finally you should receive URL address. Copy it and paste in the notification settings as indicated below.

If you would like to receive notifications for all tracked pages, simply paste webhook URL in user notification preferences.

If you only want a single page to be notified via Slack. Just set this Webhook URL for a specific page.
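To confirm that the Incoming Webhook URL works before relying on it, you can post a test message yourself. The Python sketch below uses only the standard library and sends a plain `text` message; it is a generic check rather than the request PageCrawl sends, and the function names are assumptions.

```python
import json
import urllib.request


def build_slack_request(webhook_url: str, message: str) -> urllib.request.Request:
    """Prepare a POST request with a simple text message for an Incoming Webhook."""
    body = json.dumps({"text": message}).encode("utf-8")
    return urllib.request.Request(
        webhook_url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_test_message(webhook_url: str, message: str = "Webhook test") -> int:
    """Send the message and return the HTTP status code (200 means accepted)."""
    with urllib.request.urlopen(build_slack_request(webhook_url, message), timeout=10) as response:
        return response.status
```

If the message appears in the channel you selected, the URL is ready to be pasted into PageCrawl.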

What if I can't add the app? Make sure you have permission from the Slack workspace owner.

I didn't receive a notification. Please wait for the page to change. We only send a notification when we detect a change.

I receive too many notifications. What can I do? You can set up notification rules to be notified only when, for example, text disappears or a number increases.

We do have more supported notification channels to suit everyone's preferences.

Be notified about website changes via Email

Be notified about website changes via Webhook

Be notified about website changes via Zapier

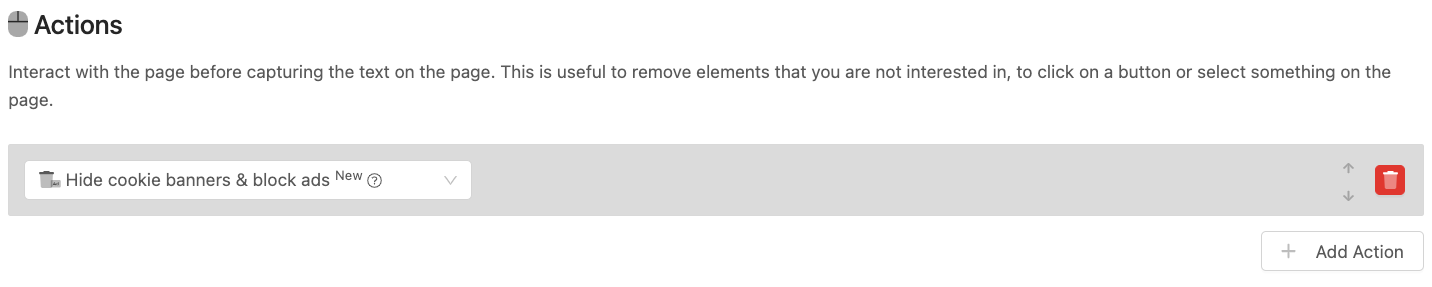

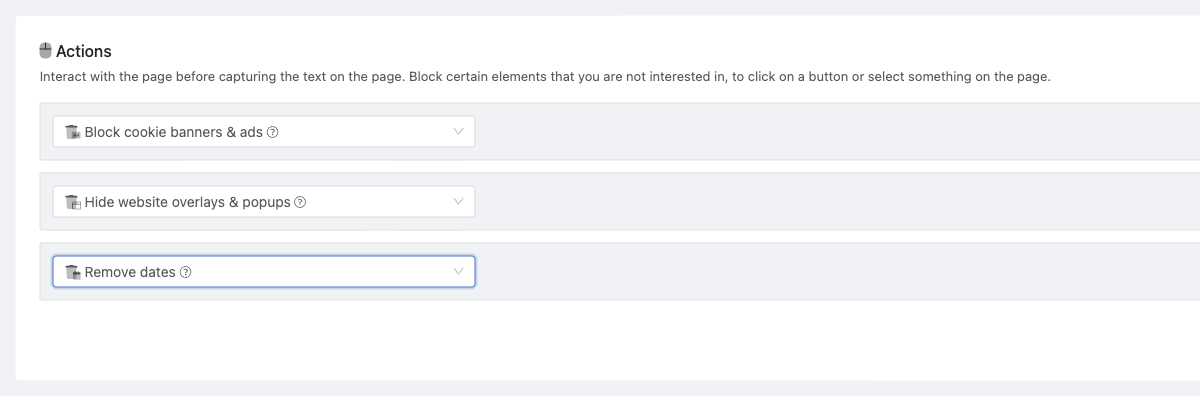

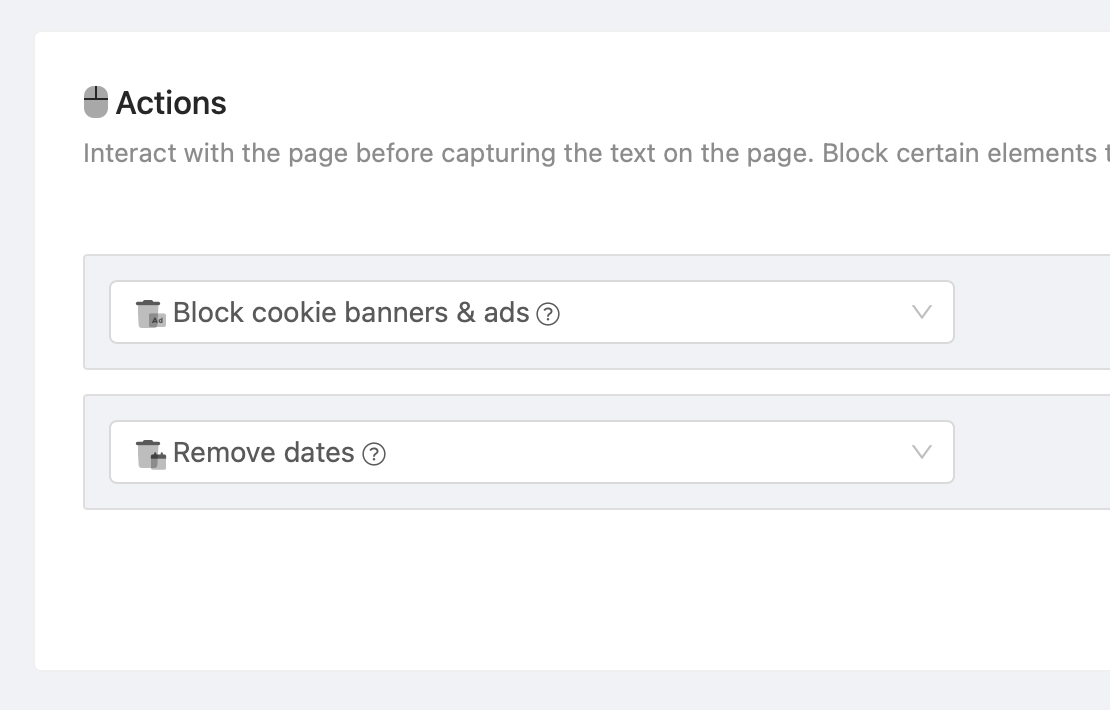

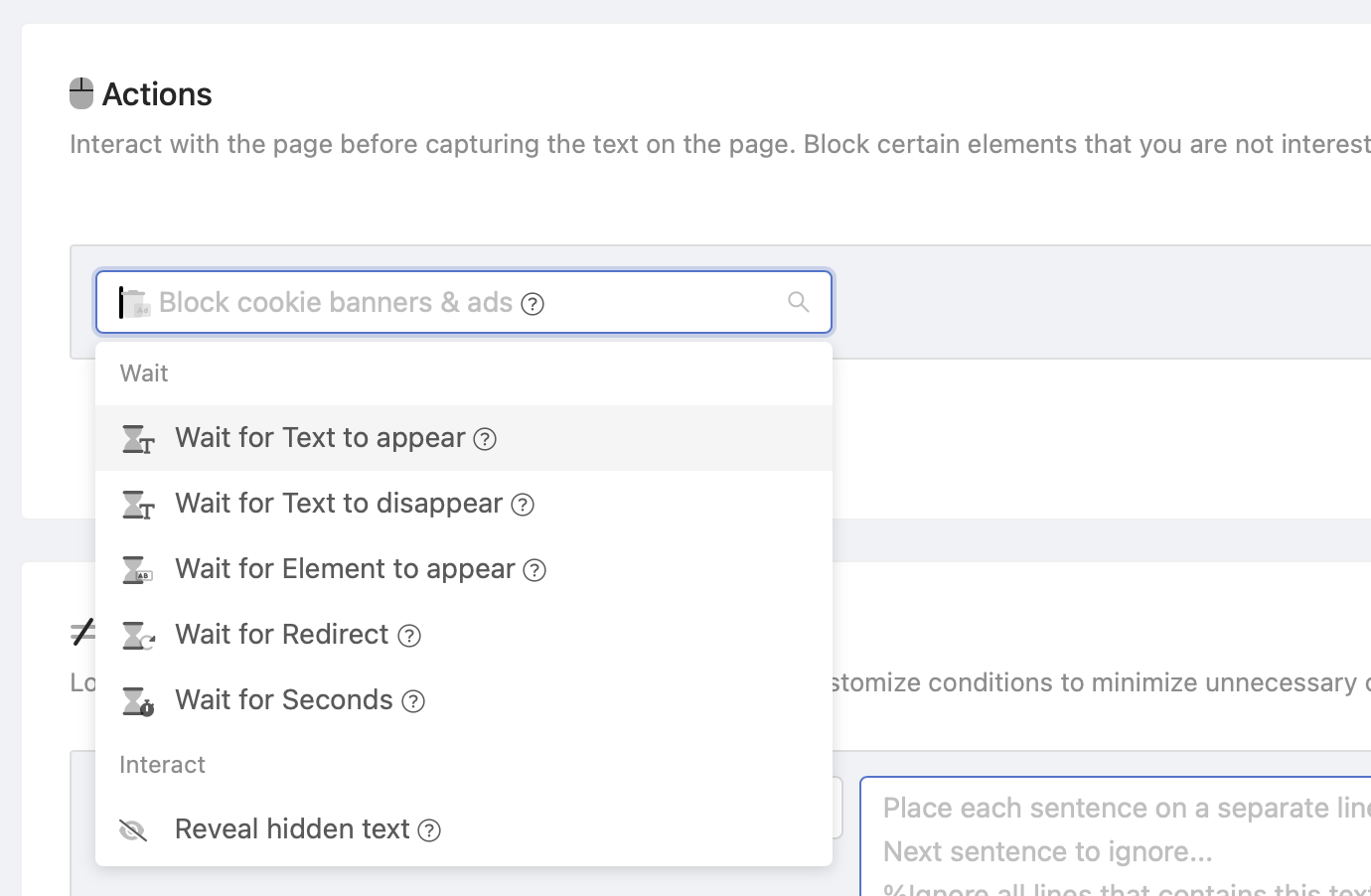

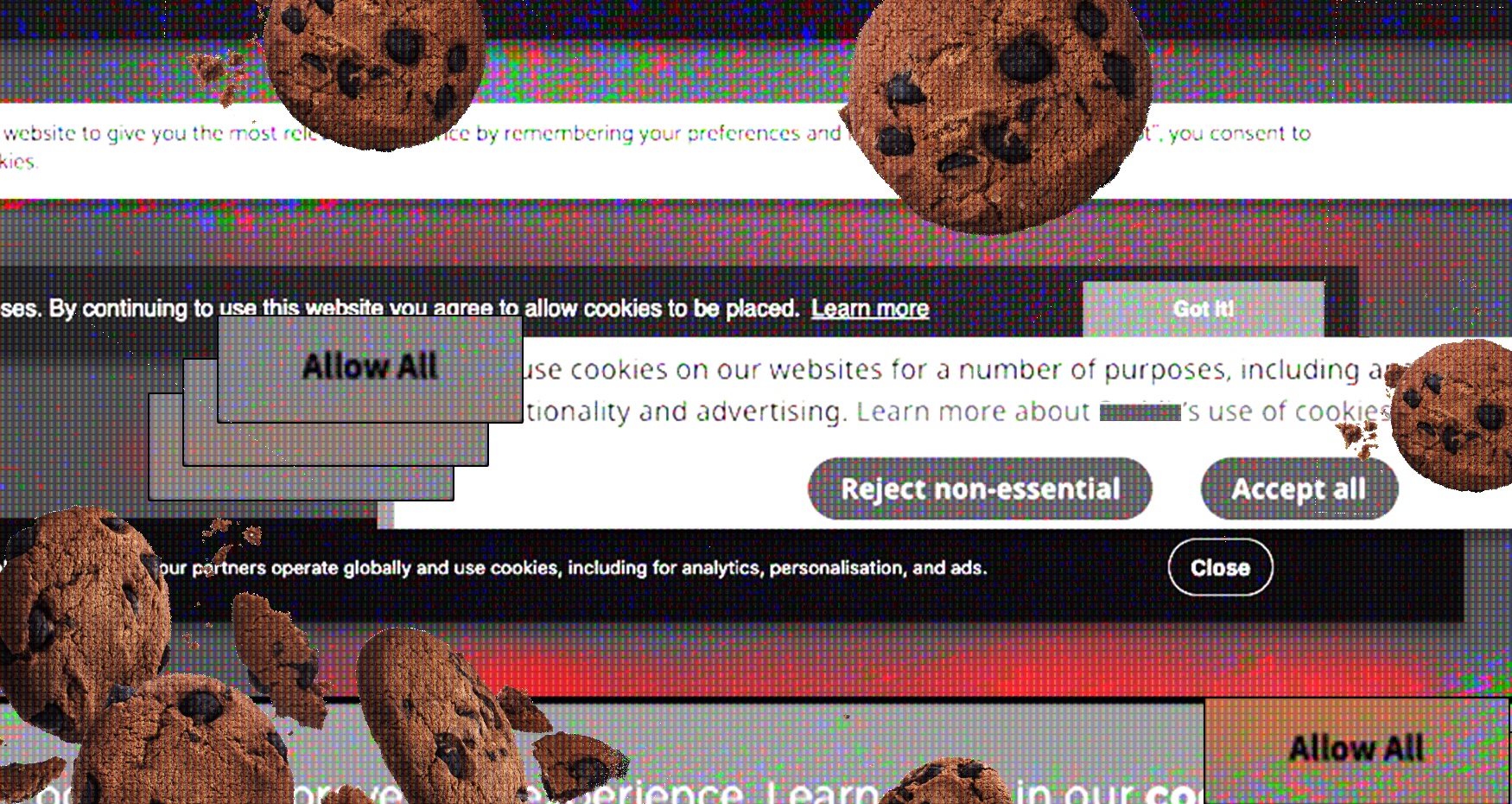

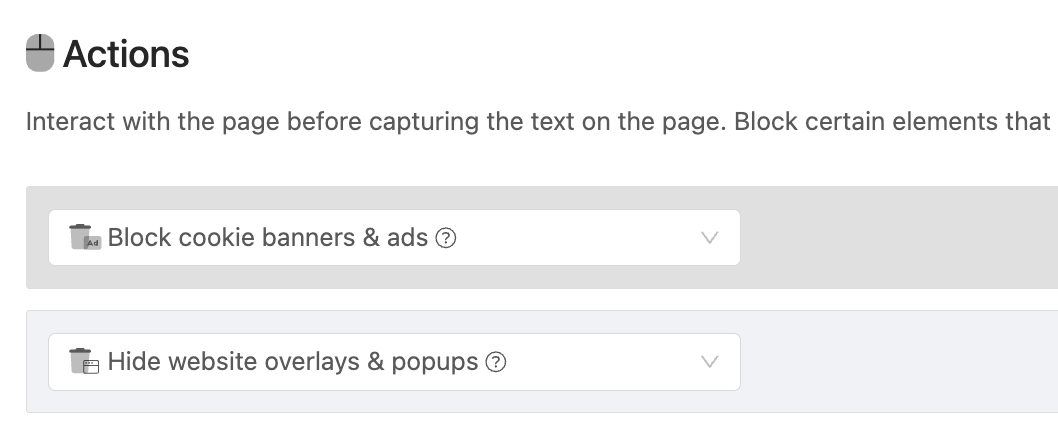

Monitoring tracked pages can sometimes result in frequent false-positive notifications, often stemming from pesky cookie popups. To address this issue and enhance your monitoring experience, we provide the "Block cookie banners & ads" action. This action effectively handles the majority of cookie windows and blocks ads, minimizing unnecessary notifications. Here are some considerations and alternatives to optimize your monitoring experience.

To mitigate false positives, we highly recommend implementing the "Block cookie banners & ads" action on all tracked pages. This action has proven to be remarkably effective, successfully handling approximately 99% of cookie popups and preventing ad content from triggering notifications.

In specific cases, if the tracked page is accessed from a location outside of Europe, cookie popups might not be displayed. As an alternative approach, you can opt to perform checks from a different country to avoid encountering cookie-related notifications.

Please be aware that a deprecated version of the "Block Cookies and Ads" action exists, which targets a narrower range of cookie popups. For optimal performance and to take advantage of the full feature set, we strongly advise updating to the current version. Keep in mind that automatic updates are not applied to prevent triggering unnecessary notifications.

You will often encounter text such as "updated 1 month ago" or "last changed 1 hour ago" that continually updates on your monitored pages. While this information may seem useful, it often leads to false-positive notifications.

To address this issue and improve your monitoring experience, we recommend applying the "Remove Dates" action to your tracked page. This action detects date-related text and replaces it with a standardized [DATE REMOVED] tag.
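The effect of such a replacement can be illustrated with a simplified Python sketch. The two patterns below (relative phrases like "1 hour ago" and ISO dates like 2024-05-01) are assumptions chosen for the example and cover far fewer formats than the real action.

```python
import re

# Illustrative patterns only; the real action recognizes many more formats.
RELATIVE_DATE = re.compile(
    r"\b(?:an?|\d+)\s+(?:second|minute|hour|day|week|month|year)s?\s+ago\b",
    re.IGNORECASE,
)
ISO_DATE = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")


def remove_dates(text: str) -> str:
    """Replace recognized date expressions with a fixed placeholder."""
    text = RELATIVE_DATE.sub("[DATE REMOVED]", text)
    return ISO_DATE.sub("[DATE REMOVED]", text)


print(remove_dates("Updated 1 month ago"))     # Updated [DATE REMOVED]
print(remove_dates("Published on 2024-05-01")) # Published on [DATE REMOVED]
```

After normalization, "last changed 1 hour ago" and "last changed 2 hours ago" become identical text, so the difference no longer triggers a notification.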

The "Remove Dates" action is designed to handle a wide range of common date formats, including:

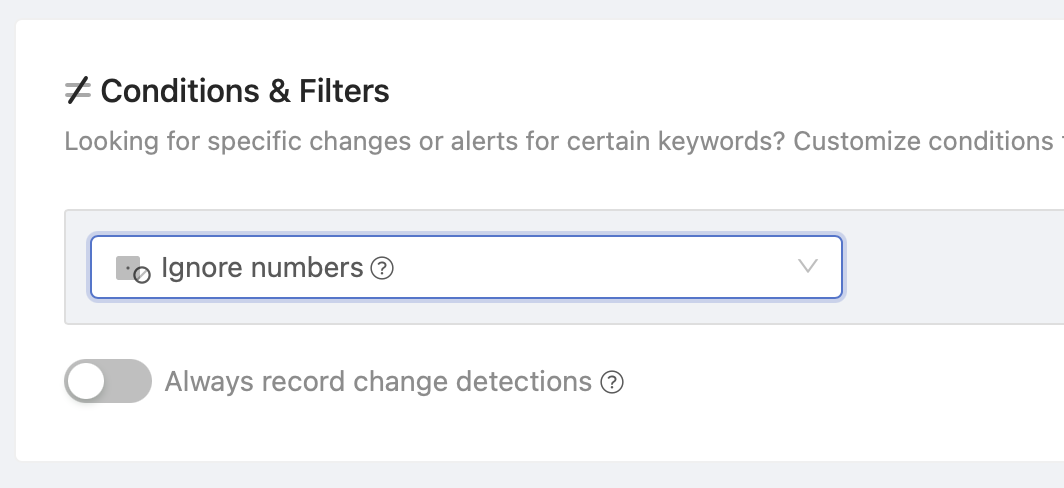

Instead of replacing dates with [DATE REMOVED] placeholders, you can ignore all changes in numbers by adding an "Ignore numbers" filter in the "Conditions/Filters" section. Only use this if you are not interested in numeric changes.

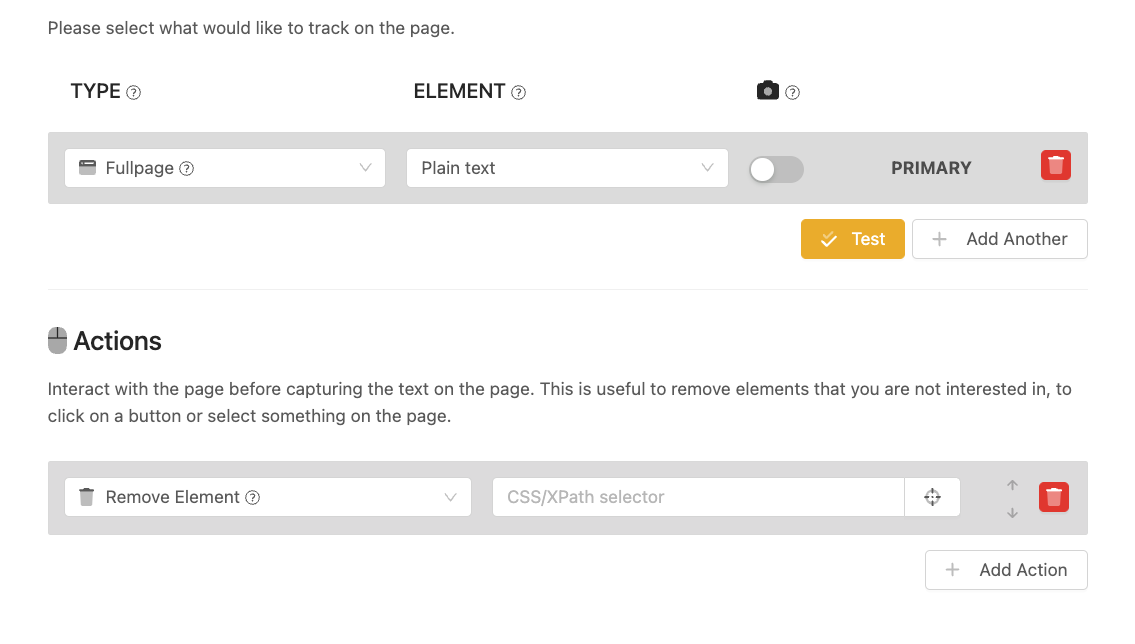

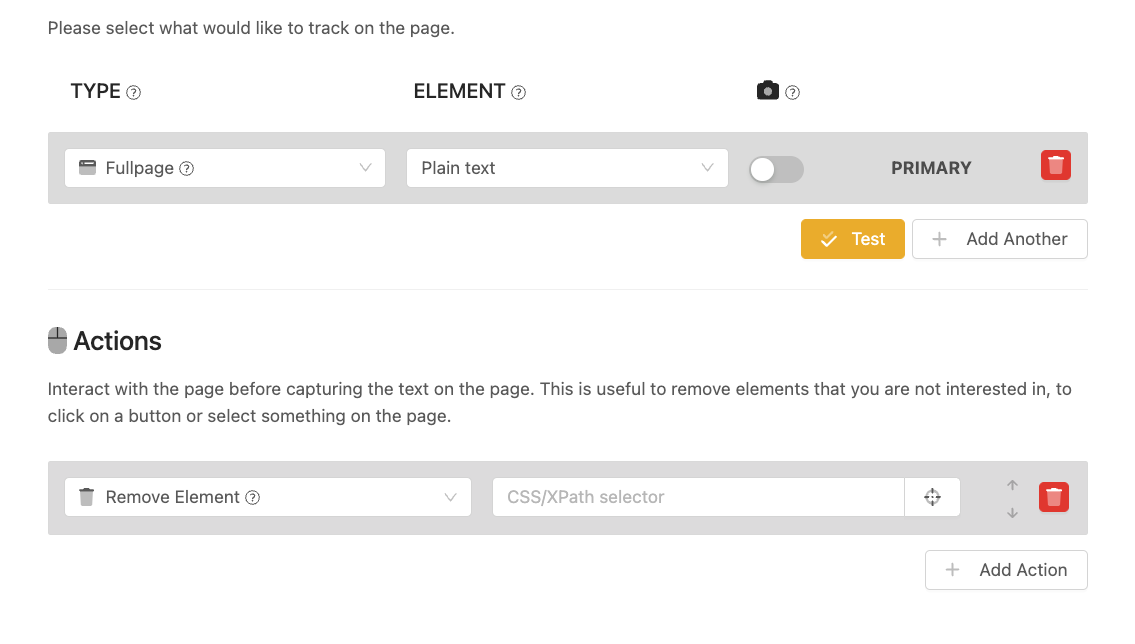

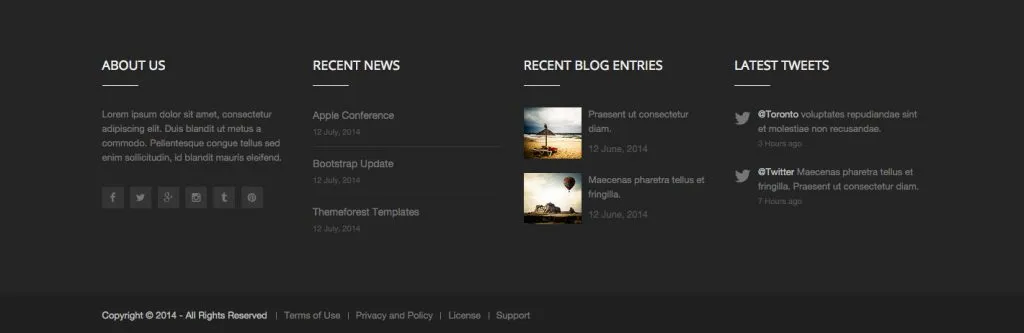

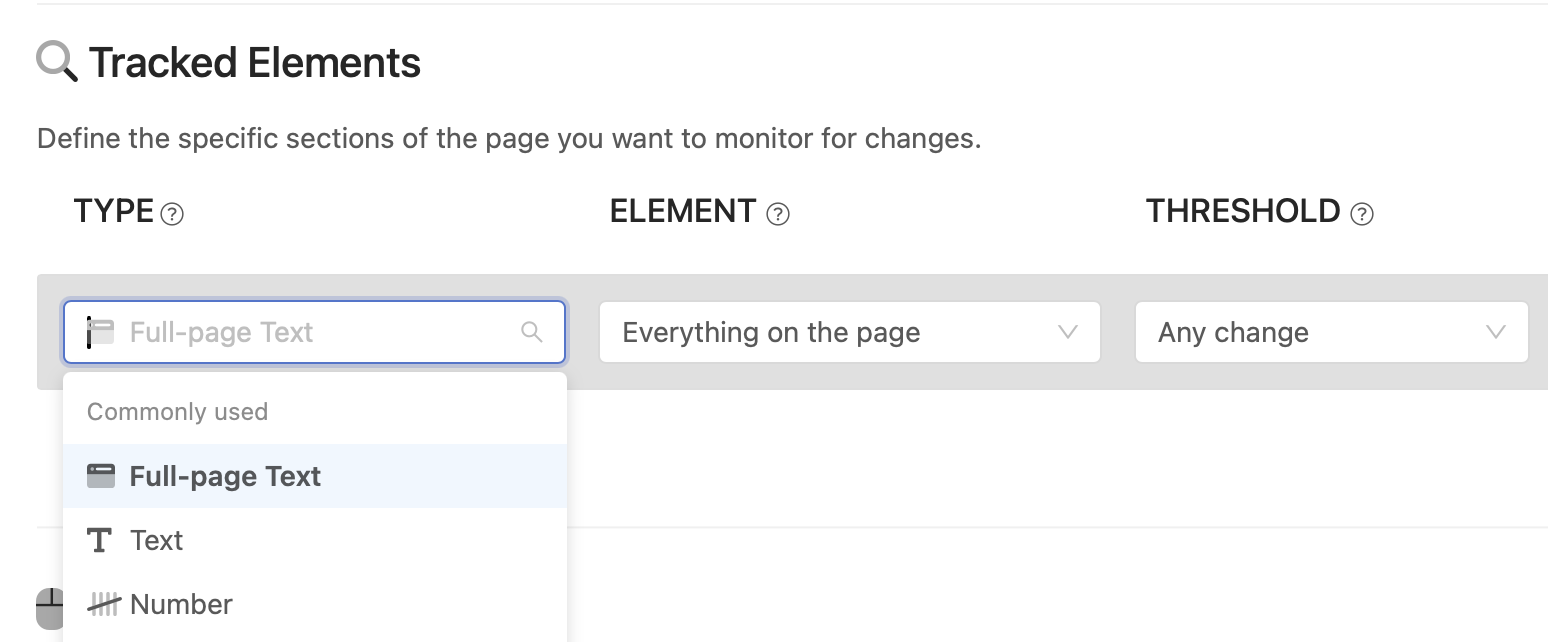

In certain situations, you may wish to exclude or remove a specific section on the page to prevent (false positive) notifications, especially when the content changes frequently. For instance, you might want to exclude a sidebar containing new blog posts or a Twitter feed at the bottom of the page.

When your tracked element type is "Full page", you can choose to track Everything on the page or Content only. If you choose Content only, text in the header, sidebar, and footer will not be tracked.

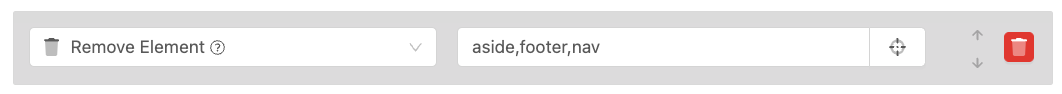

If you would like more control on what is removed, we recommend using the "Remove page element" action to exclude sections that do not interest you. You can either utilize the visual selector to remove the area or add the selector manually. Below you will find a few suggested selectors you can use.

Frequently, there are areas where tracking changes may not be of interest, including:

You can use the following selector (which you can paste into the "CSS/XPath selector") to exclude the mentioned elements: nav,aside,footer,.footer,header
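To see what excluding these elements does to the tracked text, here is a rough Python approximation using only the standard library. It is not how PageCrawl evaluates selectors: it hard-codes the tags and the `.footer` class from the selector above and assumes reasonably well-formed HTML.

```python
from html.parser import HTMLParser

EXCLUDED_TAGS = {"nav", "aside", "footer", "header"}
EXCLUDED_CLASSES = {"footer"}
# Void elements never have a closing tag, so they must not affect nesting depth.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img",
             "input", "link", "meta", "source", "track", "wbr"}


class ContentText(HTMLParser):
    """Collect text that is not inside an excluded element."""

    def __init__(self):
        super().__init__()
        self.skip_depth = 0  # > 0 while inside an excluded element
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        classes = set((dict(attrs).get("class") or "").split())
        if self.skip_depth or tag in EXCLUDED_TAGS or classes & EXCLUDED_CLASSES:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        if not self.skip_depth and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    parser = ContentText()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


page = ("<header>Menu</header><main><p>Price: $10</p>"
        "<div class='footer'>Links</div></main><footer>Copyright</footer>")
print(visible_text(page))  # Price: $10
```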

Unfortunately, not all websites adhere to the content sectioning guidelines. In such cases, you may need to use the visual selector to identify the area or manually input the selector.

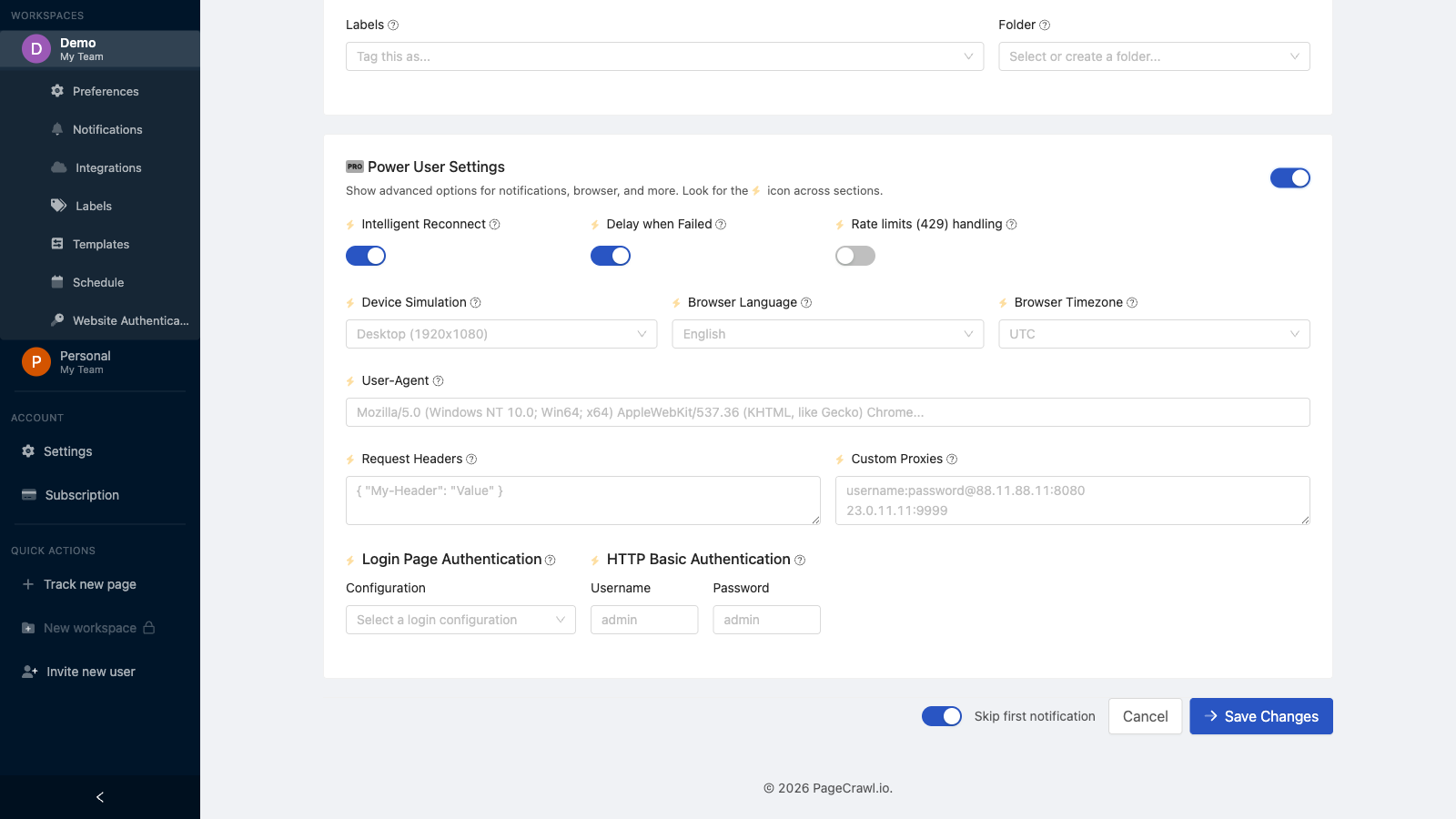

PageCrawl provides built-in proxy locations and supports custom proxy servers for pages that require specific geographic access or have IP-based restrictions.

PageCrawl offers multiple proxy locations across North America, Europe, and the Middle East, plus a residential proxy option. Select a proxy location per page or apply one to multiple pages via Bulk Edit. You can also choose Random to rotate between locations automatically.

Use your own proxy servers when the built-in locations do not work for your use case.

Supported formats:

host:port

username:password@host:port

Configuration options:

| Method | How |
|---|---|
| Single page | Edit the page > Power User settings > Custom Proxy |
| Multiple pages | Select pages > Bulk Edit > Custom Proxies |
| Template | Add proxy settings to a template for reuse |

You can paste multiple proxy servers (one per line). PageCrawl will randomly select one for each check. If a proxy fails, the system automatically retries with a different proxy from the list.
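The selection and retry behavior can be pictured with the following Python sketch. It is a simplified model, not PageCrawl's code: the `fetch` callback stands in for whatever actually loads the page through a proxy, and treating `OSError` as a proxy failure is an assumption.

```python
import random
from typing import Callable


def parse_proxy_list(text: str) -> list[str]:
    """Split a pasted block into one proxy per non-empty line."""
    return [line.strip() for line in text.splitlines() if line.strip()]


def fetch_with_failover(proxies: list[str], fetch: Callable[[str], str]) -> str:
    """Try proxies in random order until one succeeds."""
    candidates = list(proxies)
    random.shuffle(candidates)
    last_error = None
    for proxy in candidates:
        try:
            return fetch(proxy)
        except OSError as error:  # connection refused, timeout, and similar
            last_error = error
    raise RuntimeError("all proxies failed") from last_error


def fake_fetch(proxy: str) -> str:
    """Stand-in for a real page load, failing for one known-bad proxy."""
    if proxy.startswith("bad"):
        raise ConnectionError(f"{proxy} is unreachable")
    return f"ok via {proxy}"


proxies = parse_proxy_list("bad.example:8080\n\nuser:secret@good.example:8080\n")
print(fetch_with_failover(proxies, fake_fetch))  # ok via user:secret@good.example:8080
```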

When a page is blocked (timeout, 403, or 401), PageCrawl automatically switches to Stealth mode in addition to the proxy configuration. This combination resolves most access issues.

For pages that require residential proxies, PageCrawl offers Premium Residential Proxies with pay-as-you-go bandwidth starting at $10/GB. Purchase bandwidth in your account settings and select "Premium Residential" as the proxy location on your monitors. See the residential proxies guide for details on pricing, geo-targeting, and setup.

Most pages work fine without any proxy configuration. You only need a custom proxy if a website is actively blocking bots or restricting access by geographic location. Start without a proxy, and only set one up if you are seeing access errors (403, bot protection blocks, empty pages).

If the built-in proxy locations are not enough for your needs, you can use a third-party proxy provider. Here is what to look for and some popular options.

Understanding bandwidth usage:

Each page check downloads the full page without caching, so bandwidth adds up quickly. An average web page uses 2-3 MB per check. Heavier pages (news sites, e-commerce, image-heavy pages) can use 5-10 MB or more. For example, monitoring 50 pages every 30 minutes at 3 MB each would use roughly 7 GB per day, or around 216 GB per month. Because of this, avoid proxy providers that charge per GB of traffic. Those plans are designed for one-off scraping, not ongoing monitoring.
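The estimate above can be reproduced with a short calculation. The sketch assumes 1 GB = 1,000 MB and a 30-day month, which matches the figures quoted; the function name is illustrative.

```python
def bandwidth_gb(pages: int, interval_minutes: float, mb_per_check: float,
                 days: int = 30) -> tuple[float, float]:
    """Return (GB per day, GB per period) for a monitoring schedule."""
    checks_per_day = pages * (24 * 60 / interval_minutes)
    daily_gb = checks_per_day * mb_per_check / 1000
    return daily_gb, daily_gb * days


daily, monthly = bandwidth_gb(pages=50, interval_minutes=30, mb_per_check=3)
print(f"{daily:.1f} GB/day, {monthly:.0f} GB/month")  # 7.2 GB/day, 216 GB/month
```

Plugging in your own page count, check interval, and average page size gives a quick way to compare per-GB pricing against unlimited-bandwidth plans.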

What to look for:

username:password@host:port authentication.

Datacenter vs. residential proxies:

Datacenter proxies with unlimited bandwidth are the most cost-effective option for monitoring. They work well for most websites. Residential proxies (using real ISP addresses) are only needed for sites with strict bot detection that blocks datacenter IPs. If you need residential proxies, look for providers that offer them with unlimited bandwidth or per-IP pricing rather than per-GB billing.

Popular proxy providers that work with PageCrawl:

| Provider | Type | Pricing Model | Notes |
|---|---|---|---|
| Webshare | Datacenter, Residential | Per proxy, unlimited bandwidth | Free tier available, good for testing. Paid datacenter plans include unlimited bandwidth. |
| IPRoyal | Datacenter, Static residential | Per proxy (datacenter) | Datacenter proxies with unlimited traffic. Static residential proxies available per IP. |
| Proxy-Cheap | Datacenter, Static residential | Per proxy, unlimited bandwidth | Budget-friendly static residential and datacenter proxies with no traffic limits. |
| ProxyRack | Datacenter, Residential | Flat monthly rate | Unlimited bandwidth on most plans. Rotating and geo-targeted options. |

These are independent providers and not affiliated with PageCrawl. Prices and features may change.

Not every provider works for every website. A proxy that works perfectly for one site may get blocked on another. This depends on the website's bot detection, the proxy provider's IP reputation, and the type of proxies used. Always test a provider against your specific pages before committing to a long-term plan. Most providers offer short trial periods or small starter plans for this purpose.

Country-specific access: Some websites restrict content to visitors from a specific country (geo-blocking). Government portals, local news sites, and region-locked services often require an IP address from that country to load correctly. If you are monitoring pages like these, make sure the proxy provider offers proxies in the required country. Check the provider's location list before purchasing, as coverage varies significantly between providers, especially for smaller countries.

Note: Most providers give you a proxy endpoint in the username:password@host:port format. Paste it directly into the Custom Proxy field in PageCrawl. If the provider offers rotating proxies through a single gateway endpoint, you only need to add one line.

Free proxy servers are unreliable, slow, and frequently stop working. They should not be used for monitoring pages where uptime matters. Use the built-in proxy locations, your own paid proxy service, or contact us for residential proxy options.

Some websites use CAPTCHA challenges to block automated access. PageCrawl integrates with 2Captcha, a CAPTCHA-solving service, to handle these protections automatically.

Available on Enterprise and Ultimate plans.

2Captcha handles most common CAPTCHA types including reCAPTCHA v2, reCAPTCHA v3, hCaptcha, and image-based challenges.

CAPTCHA solving is billed by 2Captcha separately from your PageCrawl subscription. Typical costs are $1-3 per 1,000 CAPTCHAs solved. Check 2captcha.com/pricing for current rates.

There may be various reasons why a page fails to open. This guide describes the most common problems and suggests solutions to help you overcome these issues.

A timeout occurs when the page takes too long to respond. This may be a temporary issue with the page, or the page may be loading very slowly. Timeout limits vary depending on your plan:

To avoid timeouts, please consider subscribing to a paid plan or upgrading your plan.

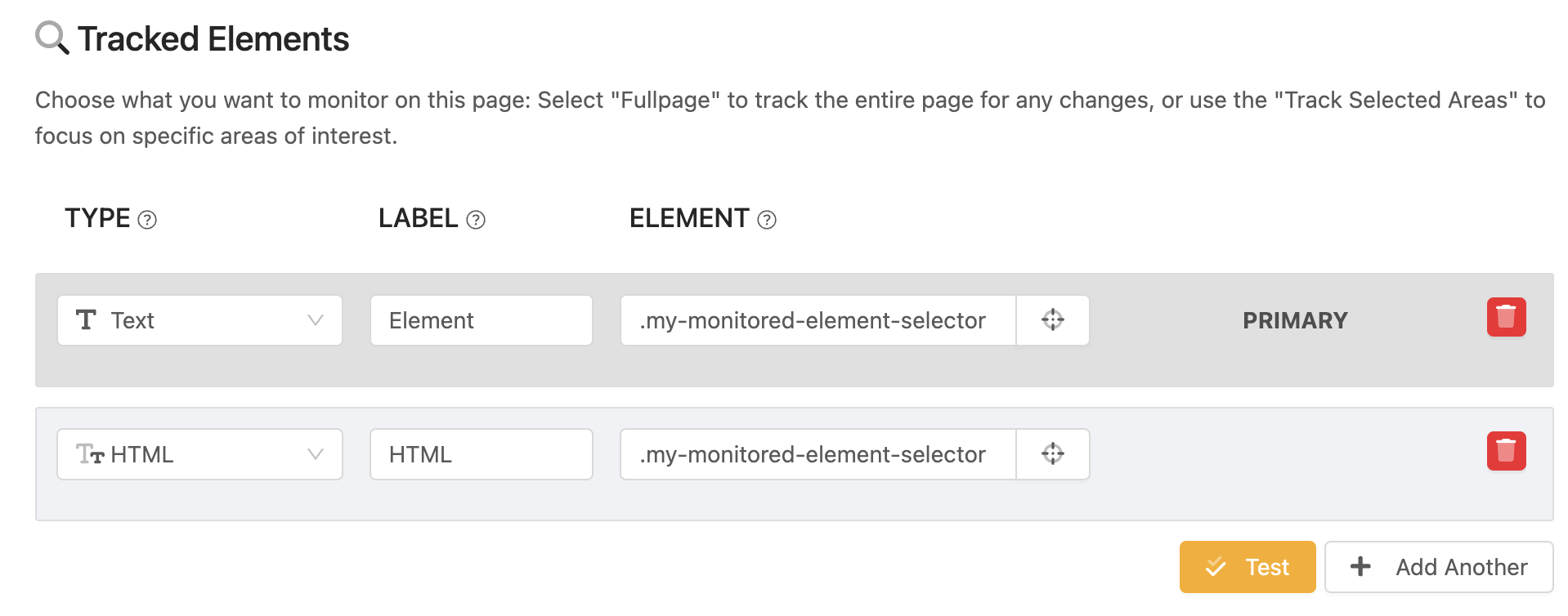

This error is shown if the page has changed significantly and the element matching the configured XPath/CSS selector could not be found. In this case, review the page and update the selector if needed.

Some pages may use site protection features to block scrapers and website tracking tools like PageCrawl.io. Different pages may use different blocking mechanisms, but here are the most common ones:

Access restricted to specific countries: The page may be configured to allow visitors only from a specific country.

Proxy location blocked: The website may block the IP address of the proxy server PageCrawl is using.

This error most often indicates that the PageCrawl.io bot was not allowed to access the website. Use the "Residential proxy pool" to avoid being blocked.

In most cases, this error indicates that the page is no longer available. Check and update the page URL.

Errors 500, 502, 503, and 504 indicate that the website's server is unresponsive, overloaded, under maintenance, or experiencing other server issues. If such an error occurs, our bots will retry the page check later.

The page can't be opened. In most cases, the website is down or is only reachable from a specific country.

Pages may use CAPTCHA to protect the website from bots. To bypass this, you can use a service like 2Captcha, which uses human workers to solve the CAPTCHA for you. PageCrawl.io has an integration with 2Captcha (you must be subscribed to an Enterprise or Ultimate plan): sign up for 2Captcha and configure the API token it generates in PageCrawl.

In some cases, an unexpected error may cause the PageCrawl bot to fail to check the page for changes. If this error does not go away after a while, please contact support to notify us about the problem so we can prioritize the issue.

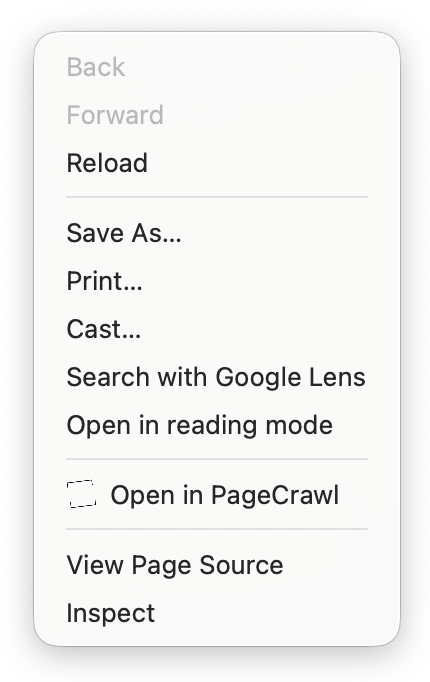

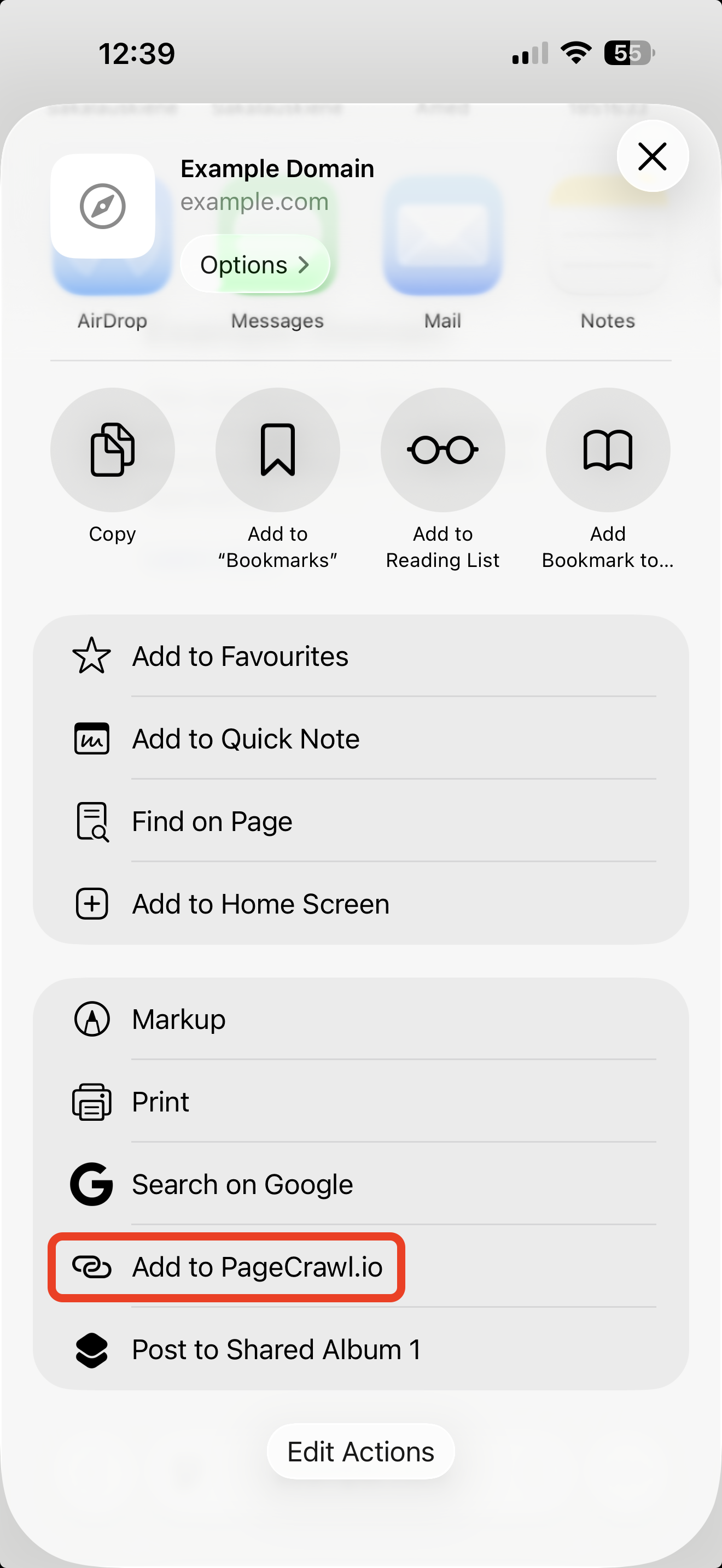

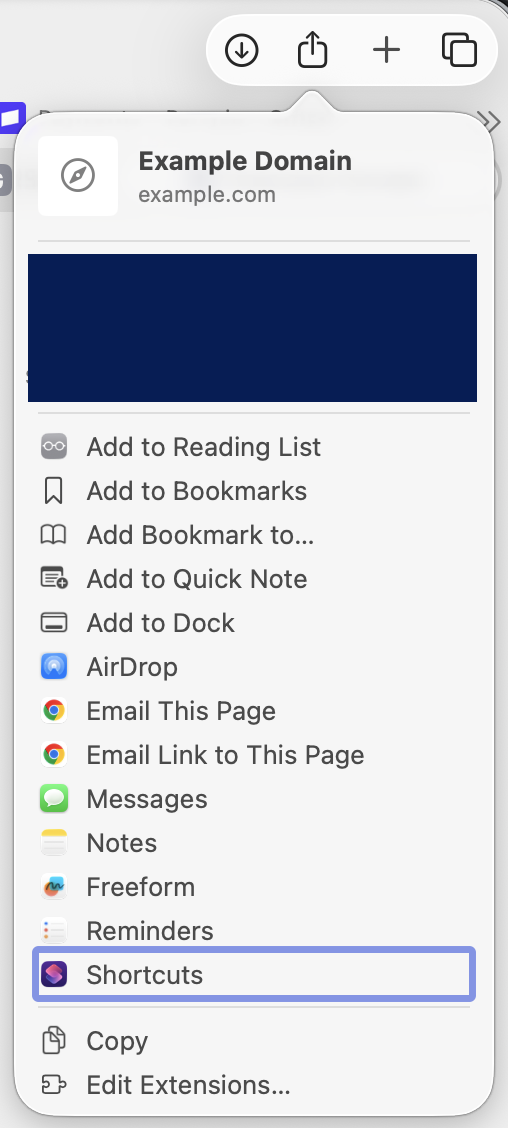

If you encounter a problem with PageCrawl's visual selector and are unable to open the page you are trying to access, there is another option you can try. You can manually copy the selector by opening the desired page in your preferred web browser. This manual method may be more time-consuming, but it can provide a reliable solution if the visual selector is not functioning properly. Additionally, by manually copying the selector, you can have greater control over the elements on the page and the data you want to extract.

This guide will show you how to do it quickly and easily for Chrome, Firefox and Safari browsers.

When it comes to web scraping, finding the right element on a webpage can be a challenge. This is where expression languages like XPath and CSS Selector come in handy. These two powerful tools help you locate elements on a webpage, and choosing between them can be difficult.

CSS Selectors are favored by many web developers as they are easy to learn if you already know CSS syntax. On the other hand, XPath Selectors offer greater power and flexibility, such as the ability to find elements that contain specific text. However, the learning curve for XPath can be steeper.

For those just starting out, CSS Selectors are the recommended choice due to their simplicity and versatility. Most advanced selectors can be written in CSS, making it a good option for web scraping beginners.

When it comes to CSS and XPath Selectors, there are two ways to generate them: relative and absolute.

Relative selectors are preferred in most cases, as they are less prone to break.

Absolute selectors, on the other hand, are useful when tracking a large number of pages, and you are only interested in specific elements. However, with even a slight change in page layout, the selector will break. If an element is added or removed from a page, the absolute XPath will need to be updated to continue tracking the page contents.

Relative selectors tend to be short, while absolute selectors can be lengthy. Here are some examples of relative and absolute selectors for both CSS and XPath:
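To illustrate why relative selectors are more robust, the Python sketch below runs one absolute and one relative XPath against two versions of a tiny page. The markup and both selectors are hypothetical examples, and the standard library's ElementTree supports only a subset of XPath, so the paths are written relative to the root `html` element.

```python
import xml.etree.ElementTree as ET

ORIGINAL = ("<html><body><div><h1>Shop</h1></div>"
            "<div><span id='price'>$10</span></div></body></html>")
# The same page after a banner <div> was inserted at the top.
CHANGED = ("<html><body><div>Banner</div><div><h1>Shop</h1></div>"
           "<div><span id='price'>$12</span></div></body></html>")

ABSOLUTE = "./body/div[2]/span"    # depends on the exact position in the tree
RELATIVE = ".//span[@id='price']"  # depends only on the element's id


def extract(markup: str, selector: str):
    """Return the text of the first matching element, or None if nothing matches."""
    node = ET.fromstring(markup).find(selector)
    return None if node is None else node.text


print(extract(ORIGINAL, ABSOLUTE), extract(ORIGINAL, RELATIVE))  # $10 $10
print(extract(CHANGED, ABSOLUTE), extract(CHANGED, RELATIVE))    # None $12
```

After the layout change, the absolute selector points at the wrong `div` and finds nothing, while the relative selector still locates the price.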

There are multiple browser extensions available that can help you copy CSS or XPath Selectors. Two options that we tried and can recommend include "SelectorsHub" and "SelectorGadget".

If you prefer not to use a browser extension, you can also find CSS or XPath Selectors by inspecting an element. In most cases, you will get an absolute selector, and if the page content changes, you will need to update the selector.

In conclusion, choosing between XPath and CSS Selectors for web scraping comes down to your personal preference and level of experience. Both offer powerful tools for locating elements on a webpage, and with a little practice, you can become an expert in no time!

If you are reading this, you may have experienced the frustration of the language suddenly switching on your monitored page, causing false positive notifications. Unfortunately, the language behavior of a website is determined by the site developers, and there are several approaches they may use. Some websites base their language on the browser or system settings, which is the best option. Others guess the language based on the country information from the IP address, while others use a mixed approach. There are two approaches you can use to prevent the page language from changing.

To prevent language switching from occurring when monitoring a website for changes, there are a few things you can do. One option is to set the browser language to a specific language, such as "Danish", in "Advanced Settings" by editing the tracked page configuration in PageCrawl. However, keep in mind that some bot detection services can detect this, so use this option only if absolutely necessary.

If you are using "Stealth Mode", be aware that setting the browser language may cause issues. Overriding the browser language can be inconsistent with what bot detection services expect, which may trigger blocks.

Another option is to access the website from a fixed IP address by setting Proxy Location to "Fixed IP". This ensures that the same IP is used to check for changes on the page. However, if the proxy location gets blocked, PageCrawl may not be able to bypass the blocks and displays a crawl error.


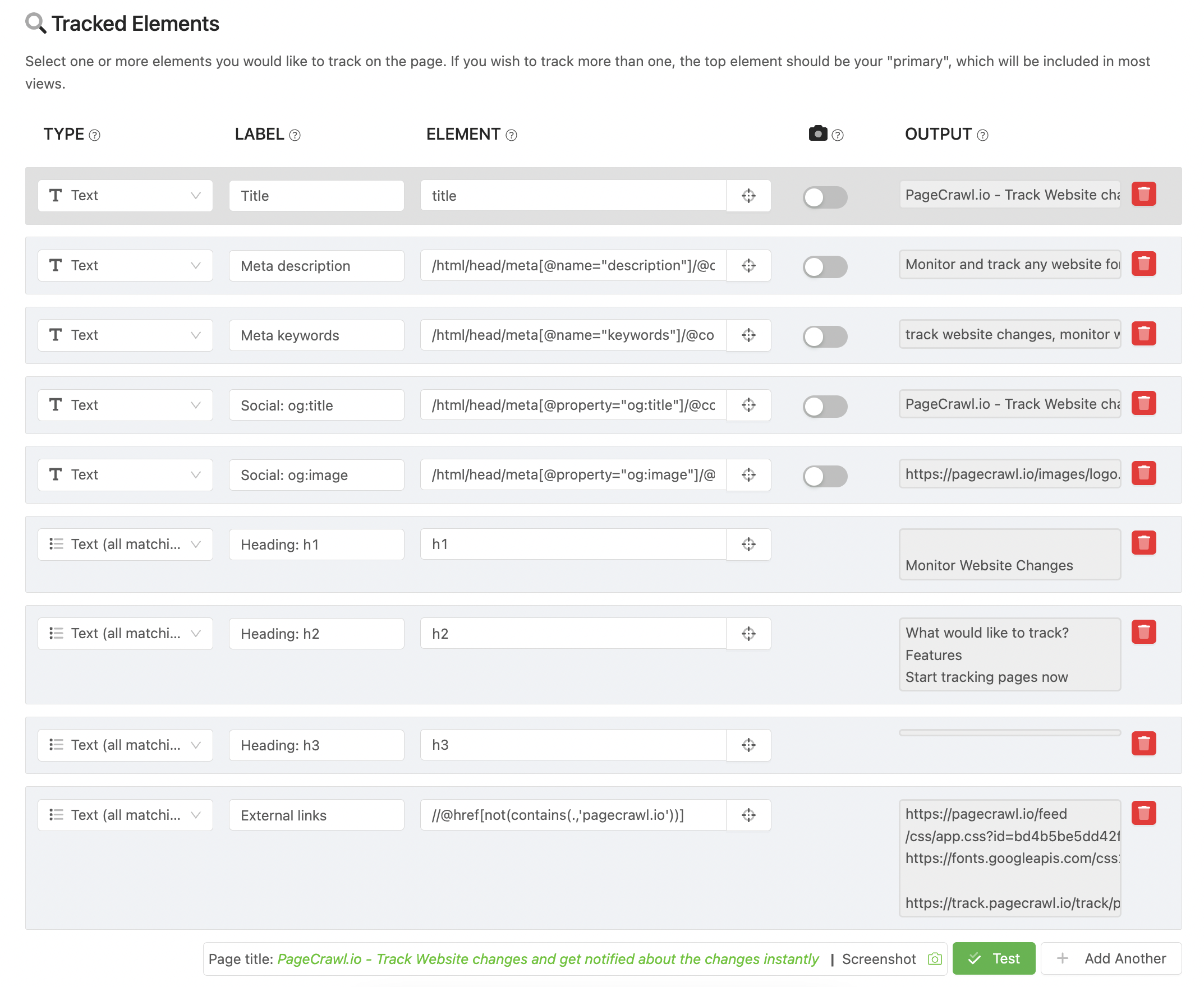

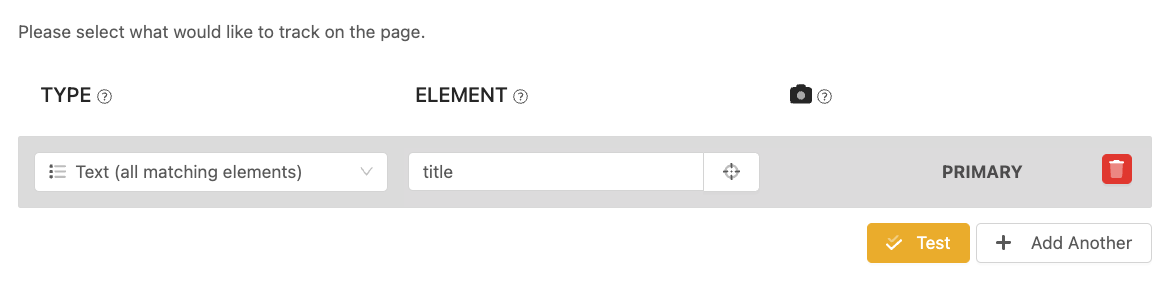

Optimizing your website for search engines requires effective monitoring of SEO tags. PageCrawl makes it easy to track changes to title tags, meta descriptions, canonical URLs, robots directives, Open Graph tags, and headings.

The fastest way to monitor SEO tags is with the built-in SEO Tags tracking mode:

PageCrawl will automatically extract and track:

When any of these fields change, you will see exactly which tag was modified and what the previous and new values are.

If you plan to monitor SEO tags for multiple pages, we recommend creating a Template with the SEO Tags tracking type. This lets you reuse the configuration across many pages without repeating setup.

If you only need to monitor specific SEO tags (rather than all of them), you can create individual tracked elements using CSS or XPath selectors.

Use "Text" for the following tracked elements:

SEO

title

/html/head/meta[@name="description"]/@content

/html/head/meta[@name="keywords"]/@content

/html/head/meta[@name="robots"]/@content

/html/head/meta[@name="viewport"]/@content

Social Media Tags

/html/head/meta[@property="og:title"]/@content

/html/head/meta[@property="og:type"]/@content

/html/head/meta[@property="og:image"]/@content

/html/head/meta[@property="og:url"]/@content

Use "Text (all matches)" for the following tracked elements:

Headings

h1

h2

h3

h4

h5

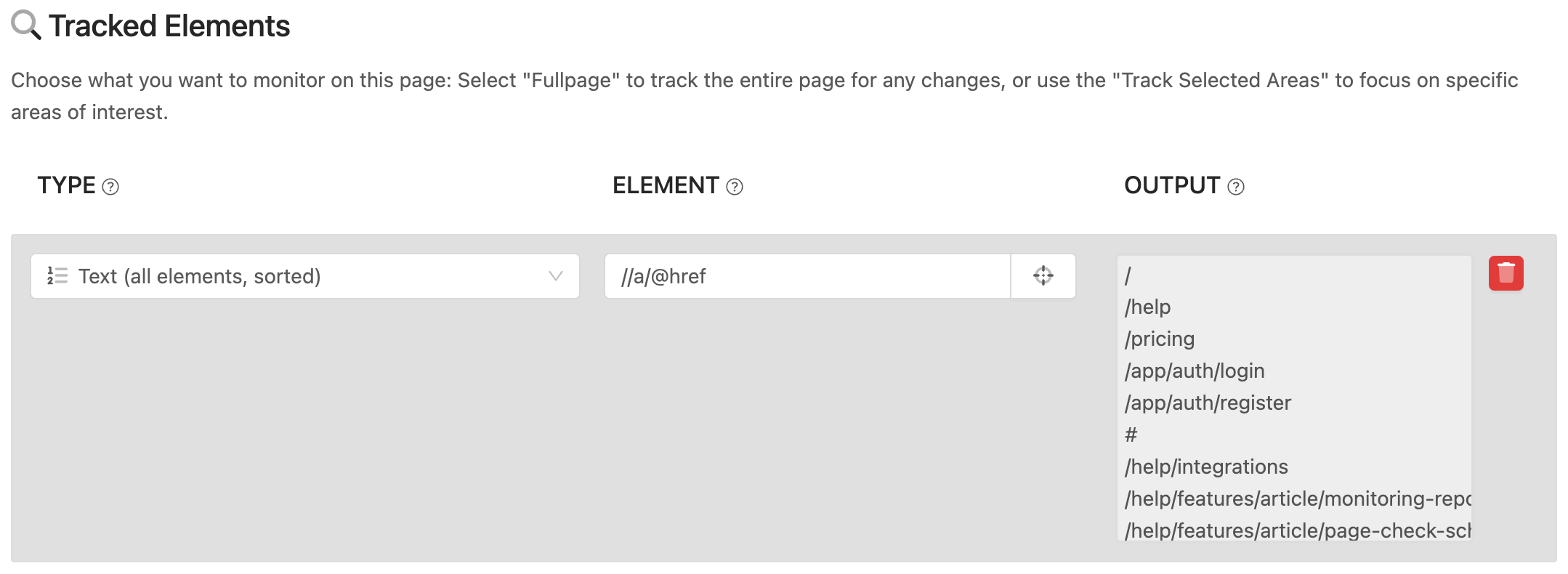

You may also wish to track outgoing links that exist on the page. We suggest using "Text (all matches, sorted)" to capture links to other pages. You may use these selectors to track:

Use the following selector to track all links on a web page:

//a/@href

To track only external links (those not belonging to a specific website), use this selector:

//@href[not(contains(.,'not-this-website.com'))]

Note: You should substitute 'not-this-website.com' with the website URL.

If you want to track links containing specific keywords in their URLs, use this selector as an example:

//a[contains(@href,'/download/oursoftware_')]/@href

To specifically track links leading to PDF documents, you can use this selector:

//a[contains(@href,'.pdf')]/@href

To track links by their anchor text, use this selector:

//a[contains(text(),'Download')]/@href

Note: This selector is case-sensitive. For example, if the link text is actually "download", it will not be found.

If you want to track links with specific CSS classes, use this selector:

//a[contains(@class,'your-class-name')]/@href

Note: You should substitute 'your-class-name' with the class.

To track links with specific attributes (other than href), use this selector and replace "attribute-name" with the name of the attribute you're interested in:

//a[@attribute-name='attribute-value']/@href

Note: You should substitute 'attribute-name' and 'attribute-value' with the relevant attribute name and value.

Over 30% of websites now use bot protection services like Cloudflare, Akamai, and similar tools that block automated access. This means your monitored pages can stop returning data without warning.

PageCrawl provides multiple layers of protection to keep your monitors working, most of which happen automatically.

PageCrawl handles most bot protection automatically. When a check fails, PageCrawl detects the block and adjusts its approach on the next attempt. This includes automatic retries, switching to stealth mode, and rotating through different proxy locations.

For most pages, you do not need to configure anything. The steps below are only needed if automatic handling does not resolve the issue.

PageCrawl will show a warning on the page if it detects a block. You may also notice that the captured content is empty, shows an error code (403, 401), or looks different from what you see when you visit the page yourself.
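The symptoms above can be expressed as a simple check. The following is only a rough sketch of that heuristic, not PageCrawl's actual detection logic; the function name `looks_blocked` and its parameters are hypothetical.

```python
def looks_blocked(status_code: int, body: str) -> bool:
    """Heuristic only: a 401/403 status or an empty body suggests a block."""
    # Error codes mentioned in the text as typical block symptoms.
    if status_code in (401, 403):
        return True
    # Empty captured content is the other symptom described above.
    return body.strip() == ""
```

A page that "looks different" from what you see in your own browser cannot be caught by a check this simple, so comparing the capture against the live page manually is still worthwhile.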

Note: The settings below require Advanced mode. To enable it, click Edit on any page and toggle Advanced at the bottom of the form.

Follow these steps in order. After each step, wait for the check to complete before moving on.

This is the first thing to try and resolves most blocking issues.

If the content now loads correctly, you are done. Stealth mode will be used for all future checks on this page.

If Stealth mode alone does not work, the site may be blocking the specific IP address or region.

Random proxy rotation means each check comes from a different IP address, making IP-based blocking ineffective.

You can also try specific locations (London, New York, San Francisco, Toronto, Frankfurt) if you know the site serves content differently by region.

For sites with the strictest protections, residential proxies are the most effective option. These route requests through real consumer internet connections, making them virtually indistinguishable from regular visitors.

Residential proxy traffic is available as an add-on. You can purchase residential proxy traffic directly from your PageCrawl account.

Note: Residential proxies consume traffic from your purchased balance. Each check uses a small amount of traffic depending on the page size.

If none of the built-in options work, you can use your own proxy server from a third-party provider.

Proxy URL format: http://user:password@host:port

This is useful when you need a proxy from a specific country or provider, or when you already have a proxy subscription. See Custom Proxies for more details.
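Before saving a custom proxy, it can help to sanity-check that the URL has the scheme://user:password@host:port shape mentioned above. The sketch below uses only Python's standard library; `parse_proxy_url` is a hypothetical helper, and the example host and credentials are placeholders rather than real values.

```python
from urllib.parse import urlsplit


def parse_proxy_url(url: str) -> dict:
    """Split a proxy URL into its parts, raising ValueError if one is missing."""
    parts = urlsplit(url)
    # Accessing .port raises ValueError on its own when the port is not numeric.
    if not parts.scheme or not parts.hostname or parts.port is None:
        raise ValueError("expected scheme://user:password@host:port")
    return {
        "scheme": parts.scheme,
        "username": parts.username,
        "password": parts.password,
        "host": parts.hostname,
        "port": parts.port,
    }


# Placeholder values for illustration only.
proxy = parse_proxy_url("http://user:secret@proxy.example.com:8080")
```

A URL without an explicit port is rejected here, which is an assumption of this sketch: the text shows a port in the expected format but does not say whether it is mandatory.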

| Solution | How to Enable | When to Use |
|---|---|---|
| Stealth mode | Edit > Advanced > Engine: Stealth | First thing to try for any blocked page |
| Proxy rotation | Edit > Proxy: Random | When a specific IP is blocked |
| Residential proxy | Edit > Proxy: Residential | For the strictest access controls |
| Custom proxy | Edit > Advanced > Custom Proxy | When you need a specific provider or location |

If you have tried all the steps above and the page is still not loading:

<?xml version="1.0"?>

<catalog>

<book id="bk101">

<author>Gambardella, Matthew</author>

<title>XML Developer's Guide</title>

<genre>Computer</genre>

<price>44.95</price>

<publish_date>2000-10-01</publish_date>

<description>An in-depth look at creating applications with XML.</description>

</book>

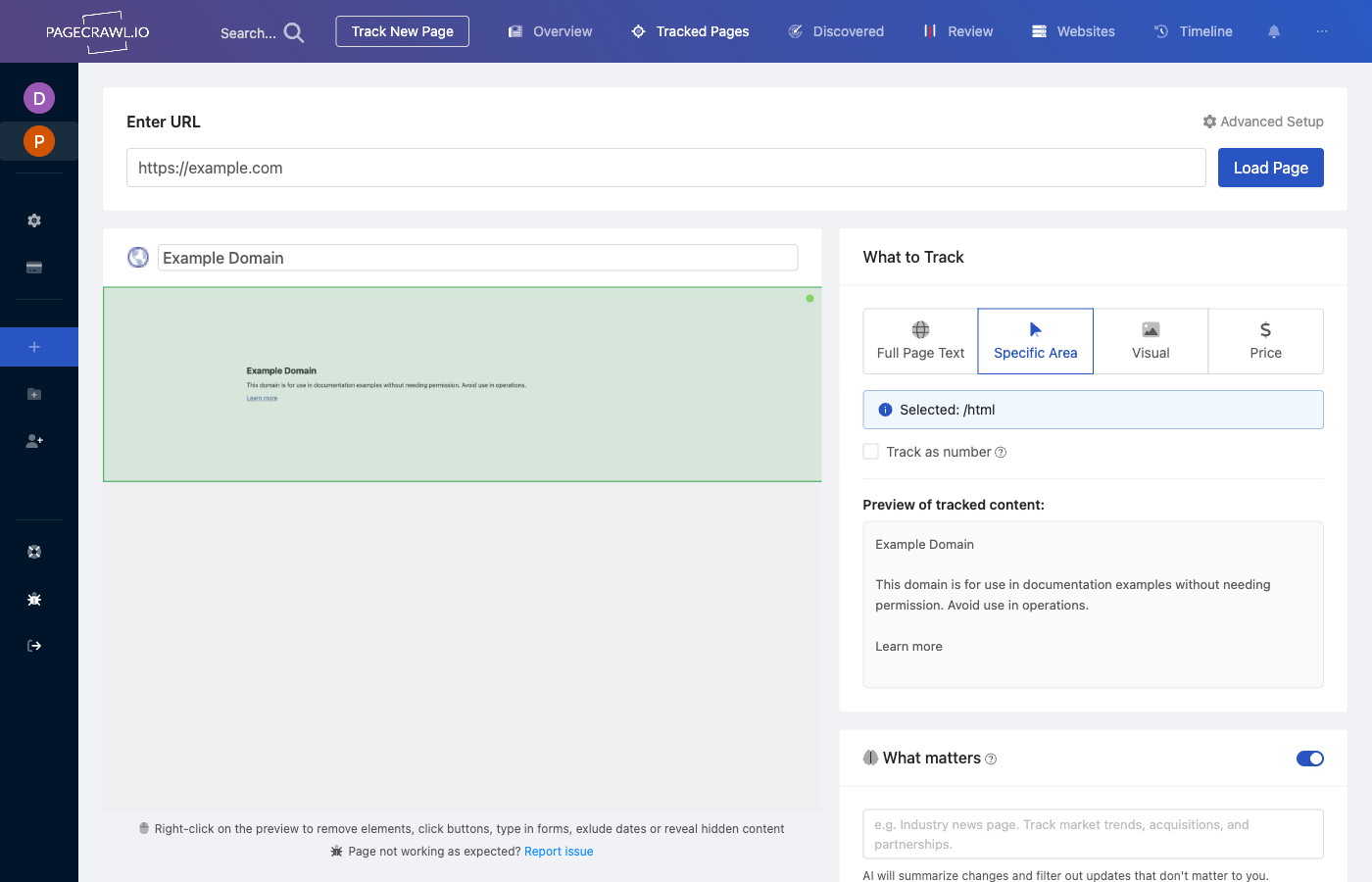

</catalog>pagecrawl.io offers an efficient way to monitor and track changes in XML files. Instead of sifting through the whole XML file for changes, which can be overwhelming due to frequent updates, you can focus on specific things that matter. This helps you avoid getting flooded with unnecessary alerts for minor changes like 'updated at' dates.

This guide will walk you through the process of setting up and utilizing this feature to simplify your tracking experience.

To reduce the number of false positives, you may want to monitor a specific attribute (or multiple attributes) and be notified when it is added, removed, or changed.

To begin tracking changes in XML files, follow these steps:

Access PageCrawl: Log in to your PageCrawl account or sign up if you're new to the platform.

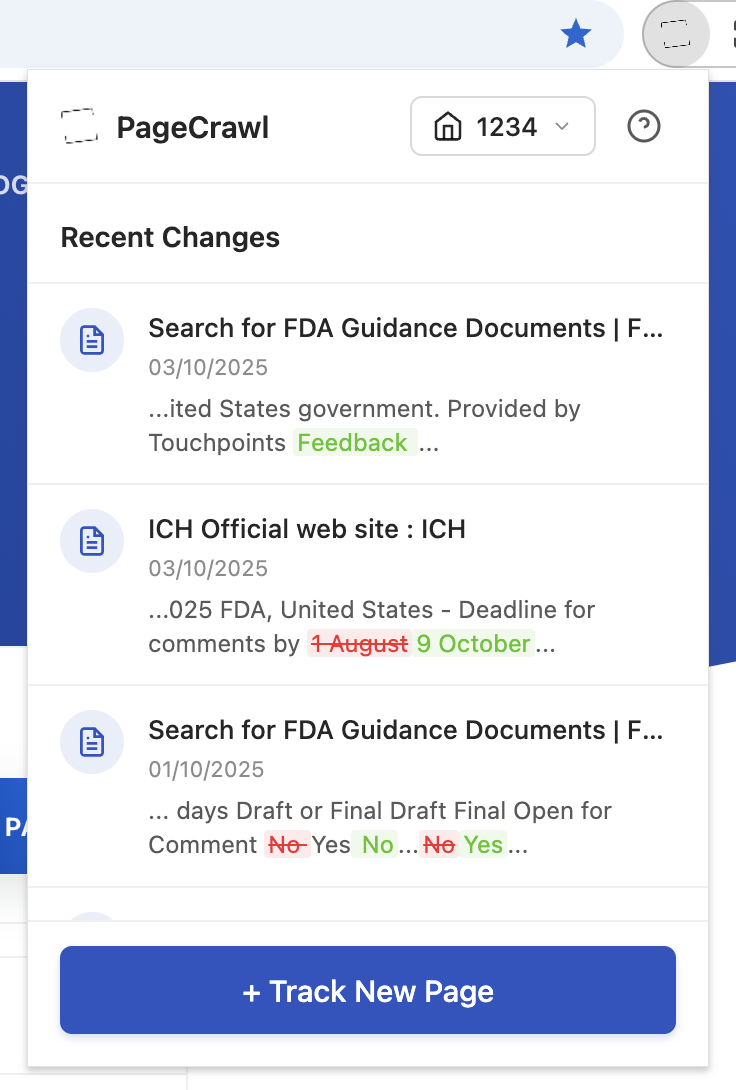

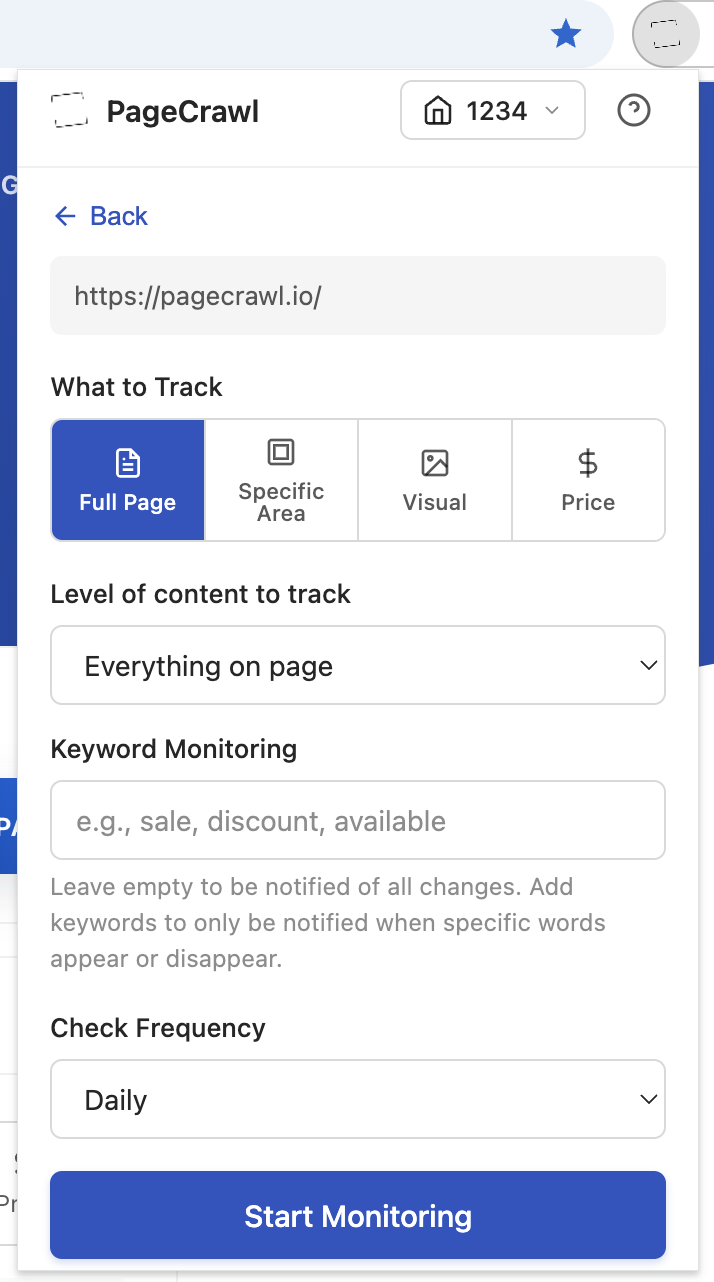

Create a Monitored Page: Once logged in, navigate to the dashboard and click on the "Track New Page" button. This will initiate the setup process for monitoring pages for changes.

Instead of monitoring the entire XML file, you can narrow down your focus to specific attributes that are relevant to you. For example, you might want to track changes in book names within an XML catalog.

Consider the following example XML file structure:

<?xml version="1.0"?>

<catalog>

<book id="bk101">

<title>XML Developer's Guide</title>

<!-- Other book details... -->

</book>

<book id="bk102">

<title>Dummy XML Developer's Guide</title>

<!-- Other book details... -->

</book>

<!-- More book entries... -->

</catalog>

Follow these steps to configure tracking elements for your XML file:

Select Tracked Element: Within the PageCrawl setup interface, choose "Text (all matches)" as the tracked element type.

Specify Element to Track: In this step, you'll specify the exact element within the XML that you want to track. For instance, if you're interested in changes to book titles, you'll set the element as title.

In this case, by focusing on the title element, you'll receive notifications only when a book title changes, or when a title is added or removed, filtering out less significant updates.
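To make the idea concrete, here is a minimal sketch of what tracking every title element amounts to: pull out all titles from two versions of the file and compare them. It uses Python's standard library and is not PageCrawl's implementation; the helper names and the changed title in `NEW` are made up for illustration.

```python
import xml.etree.ElementTree as ET

OLD = """<catalog>
<book id="bk101"><title>XML Developer's Guide</title></book>
<book id="bk102"><title>Dummy XML Developer's Guide</title></book>
</catalog>"""

# Hypothetical later version: the second title was renamed.
NEW = """<catalog>
<book id="bk101"><title>XML Developer's Guide</title></book>
<book id="bk102"><title>Advanced XML Developer's Guide</title></book>
</catalog>"""


def extract_titles(xml_text: str) -> list:
    """Return the text of every <title> element, in document order."""
    root = ET.fromstring(xml_text)
    return [el.text or "" for el in root.iter("title")]


def diff_titles(old: list, new: list) -> dict:
    """Report which titles appeared and which disappeared (duplicates ignored)."""
    old_set, new_set = set(old), set(new)
    return {"added": sorted(new_set - old_set),
            "removed": sorted(old_set - new_set)}


changes = diff_titles(extract_titles(OLD), extract_titles(NEW))
```

Anything outside the title elements, such as an 'updated at' date, never reaches the comparison, which is why this kind of narrowing cuts down on noise.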

If you would like to also keep the full history of what has changed in the XML document but only be notified when a specific attribute changes, you can also add "Full Page" as the Tracked Element and then add a condition to be notified when the monitored attribute changes.

Note: This feature is available on paid plans only.

With pagecrawl.io, you can group your monitors into scheduled briefings that compile every detected change into a single digest, delivered automatically on the cadence each audience expects. Reports turn raw change notifications into something stakeholders actually open and read: a clean, AI-summarized email with the most important items at the top.

Instant alerts on every change flood inboxes until people mute the channel. Scheduled reports solve that without losing the safety net for genuinely urgent items, which still escalate immediately to your channel of choice.

A single workspace often serves several audiences with very different appetites for detail:

Reports let you serve all of these from the same monitor set. Group monitors by tag, folder, or domain, then deliver a tailored briefing to each audience on their preferred schedule. High-priority changes still escalate instantly; everything else lands in the next digest.

| Scenario | Recommended |
|---|---|
| Monitoring a handful of critical pages | Instant notifications |
| Tracking 50+ competitor pages for pricing | Scheduled report (daily or weekly) |
| Legal/compliance pages that rarely change | Scheduled report (weekly or monthly) |
| Stock availability that needs immediate action | Instant notifications with escalation |
| Executive stakeholder updates | Scheduled report with AI summary |
| Onboarding a non-PageCrawl recipient (CEO, board, client) | Scheduled report (public share link) |

You can mix both approaches. Monitors not assigned to any report continue to send instant notifications as usual. Monitors assigned to a report will only appear in digests, unless priority escalation is configured for urgent changes.

Each report has four moving parts:

Each generated digest is stored as a record in the workspace and gets a unique public share link, so anyone with the URL can view it in their browser without a PageCrawl account.

For step-by-step instructions on each option, see the Scheduled Reports setup guide.

The scope determines which monitors feed into the digest. Five options are available:

#competitors, #pricing, #legal. Easiest way to slice cross-cutting topics.

Tag and folder scopes are dynamic: any monitor that picks up the tag or moves into the folder later will start appearing in the next digest, with no report change required.

| Schedule | Description |
|---|---|
| Daily | Receive a digest every day at your chosen hour |
| Weekdays only | Monday through Friday |
| Weekly | On a specific day of the week |
| Monthly | On a specific day of the month |
| On-demand only | Only generated when you manually click "Generate now" |

All times are based on your workspace timezone.

A single report can ship to multiple channels at once. Each channel can override the workspace defaults if you want this report to use a different webhook or email list.

Channels can be enabled or disabled per report. If a channel is disabled or its webhook is missing, that channel is skipped without affecting the others.

Every digest can include an AI-written summary at the top of the report. The summary is generated each time the digest is built, using the latest changes plus your workspace-level focus prompt for context. You choose the style that fits the audience.

Eight summary styles are available:

You can change the style per report at any time. The next digest will reflect the new style.

In addition to the summary, every digest gets a short, content-aware title generated by AI. Examples:

The title appears in three places:

Competitor Intel: 3 high-priority competitor changes this week.

Stakeholders can tell at a glance whether this digest is worth opening, without having to scroll.

Reports batch changes by design, but some changes are urgent enough that waiting for the next digest is unacceptable, like a competitor dropping prices 30% or a page being taken down. Priority escalation handles this.

When you enable escalation on a report:

This means stakeholders subscribed to the digest channel are not woken up at 3am, but the people who need to act on critical changes are paged the moment they happen.

Each report can filter what makes the cut:

Most digests focus on what changed. The "Pages Currently Failing" section flips that and shows what isn't being checked successfully right now: monitors stuck on timeouts, blocked by bot protection, returning server errors, or hitting SSL issues. 404s are not included, since broken pages have their own dedicated section with replacement suggestions.

The list shows the page name, the current status (timeout, blocked, server error, etc.), and how long ago the last attempt happened. It's a single block at the bottom of the digest, off by default for new reports but easy to enable per report.

This is the difference between thinking your monitor is healthy because no email arrived, and knowing it's silently broken.

Every change in the digest carries a thumbs-up / thumbs-down pair, plus a comment field. Recipients (whether they have a PageCrawl account or not) can:

For teams that want a structured workflow, enable review board actions. Each change shows a board selector (To Review / Flagged / Reviewed) so the team can triage changes directly inside the digest without opening the dashboard.

Every digest gets a unique URL that anyone can open in their browser without signing in. The URL is included in every email, and you can copy it from the digest header for pasting into Slack, a Notion doc, or a board deck.

Sharing options:

Links can be rotated (invalidates the old URL) or revoked at any time. Default expiry is 30 days.

Each digest is laid out for print. Open it in your browser, hit print, and you get a clean, paginated PDF for board decks, audit archives, or quarterly reviews.

Beyond print:

The Excel attachment is automatically skipped if the file would be too large for SMTP delivery, so the email itself never bounces.

Recipients live on the report, not the workspace. You can mix:

Each recipient slot can be set as To, Cc, or Bcc. Use Bcc when you want to send to a long list without exposing the recipient list (e.g., a board distribution).

If a report is misconfigured (no recipients, broken webhook), the workspace owner is notified after the first failure. Subsequent failures don't re-notify, so a broken report can't spam the owner.

Marketing — weekly competitor briefing. Scope: tag #competitors. Schedule: weekly, Monday 8am. Recipients: marketing team + CMO. Style: detailed executive summary. Escalation: enabled, threshold 90, channel Slack #competitor-intel.

Sales — daily pricing watch. Scope: tag #pricing. Schedule: daily, weekdays only, 7am. Recipients: VP Sales, RevOps lead. Style: bullets. Importance threshold: 50. Group by domain: on.

Legal — monthly compliance roundup. Scope: folder /vendor-legal. Schedule: monthly, 1st of the month, 9am. Recipients: General Counsel, compliance@. Style: risk assessment. Excel attachment: on.

Product — weekly launch radar. Scope: domains competitor1.com, competitor2.com, competitor3.com. Schedule: weekly, Friday 4pm. Recipients: product team + CEO. Style: action briefing. Priority escalation: on, threshold 80, Slack #product-radar.

Executive — monthly board pack. Scope: all monitors. Schedule: monthly, last Friday. Recipients: board distribution (Bcc). Style: detailed executive summary. Failing pages: on. Public share link: rotated each month.

#enterprise-watch, #regulatory).

When a monitor is assigned to any scheduled report, its instant workspace-level notifications are bypassed. Changes are collected and delivered in the next digest instead.

Exceptions: escalation alerts still fire immediately, and public subscriber notifications are unaffected. If you delete or disable a report, the monitors it covered go back to receiving instant notifications automatically.
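The routing rules above can be summarized in a few lines. This is a sketch of the described behavior only, not PageCrawl's code: `route_change` and its parameters are hypothetical, and it assumes that an escalated change still appears in the digest and that a change escalates when its importance is at or above the threshold.

```python
from typing import List, Optional


def route_change(assigned_to_report: bool,
                 importance: int,
                 escalation_threshold: Optional[int] = None) -> List[str]:
    """Return the delivery paths for one detected change."""
    # Monitors outside any report keep their instant notifications.
    if not assigned_to_report:
        return ["instant"]
    # Monitors inside a report are batched into the next digest...
    paths = ["digest"]
    # ...and urgent changes also fire an immediate escalation alert
    # (assumption: "at or above" the threshold counts as urgent).
    if escalation_threshold is not None and importance >= escalation_threshold:
        paths.append("escalation")
    return paths
```

For example, with escalation enabled at a threshold of 90, a change scored 95 would be delivered through both paths, while a change scored 50 would wait for the digest.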

Standard plans include up to 2 reports. Higher-tier plans include unlimited reports plus on-demand generation, the full eight summary styles, custom AI focus prompts per report, the failing-pages section, and Excel attachments.

For exact limits, see the pricing page.

Control when PageCrawl runs checks on your monitored pages by setting active days, hours, and check frequency. This is configured per workspace.

Available on paid plans.

Set how often each page is checked. Available frequencies depend on your plan:

| Plan | Minimum Interval |
|---|---|
| Free | Every hour |
| Standard | Every 15 minutes |
| Enterprise | Every 5 minutes |
| Ultimate | Every 2 minutes |

Full frequency options: every 2 min, 3 min, 5 min, 15 min, 30 min, 45 min, hourly, every 2 hours, 3 hours, 6 hours, twice daily, daily, every 2 days, every 3 days, weekly, every 2 weeks, and monthly.

Limit checks to specific days and times for an entire workspace:

When outside the scheduled hours or on inactive days, PageCrawl pauses checks for all pages in the workspace. Checks resume automatically when the next active period begins.
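The pause-and-resume behavior can be pictured as a simple window test. The sketch below is illustrative only; `is_active` is a hypothetical helper, the timestamp is assumed to already be in the workspace's local time, and the end hour is treated as exclusive, which the text does not specify.

```python
from datetime import datetime
from typing import Set


def is_active(now: datetime, active_days: Set[int],
              start_hour: int, end_hour: int) -> bool:
    """True when `now` is on an active day and inside [start_hour, end_hour)."""
    # datetime.weekday(): Monday is 0, Sunday is 6.
    return now.weekday() in active_days and start_hour <= now.hour < end_hour


WEEKDAYS = {0, 1, 2, 3, 4}  # Monday through Friday
monday_morning = is_active(datetime(2024, 1, 1, 10, 0), WEEKDAYS, 9, 17)
saturday_morning = is_active(datetime(2024, 1, 6, 10, 0), WEEKDAYS, 9, 17)
```

With a Monday to Friday, 9 to 17 window, the Monday check would run and the Saturday check would be paused until the next active period.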

Instead of receiving individual notifications for each change, you can configure a daily email digest:

The digest summarizes all changes detected since the last digest was sent.

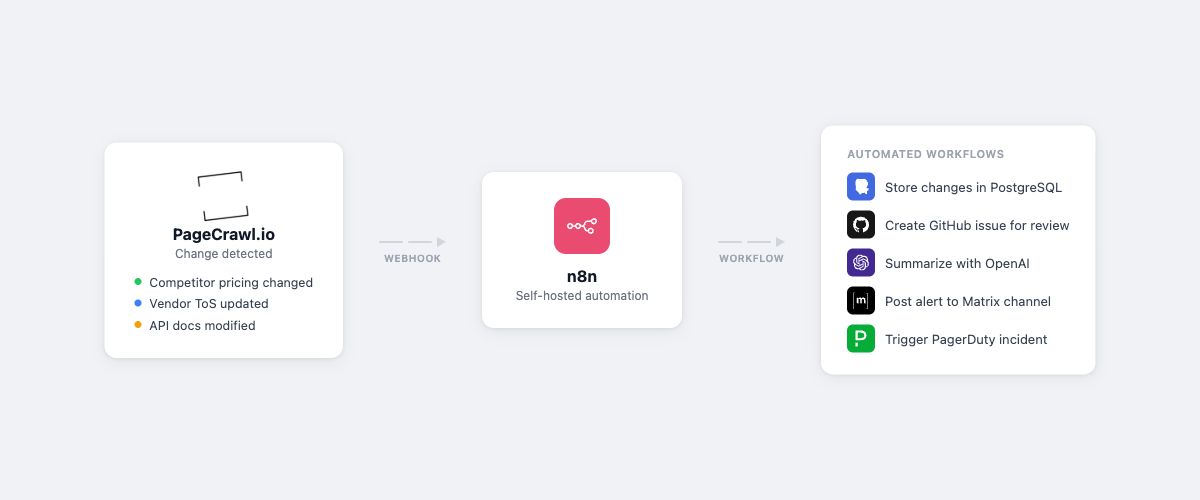

The integration of PageCrawl.io with Zapier takes web monitoring to the next level by automating tasks and connecting your web monitoring data to countless other applications. In this guide, we'll explore how to set up this powerful integration and unlock a world of possibilities.

Zapier is an automation platform that connects your favorite apps and services, allowing them to work together seamlessly. By integrating PageCrawl.io with Zapier, you can:

Here's a step-by-step guide to help you integrate PageCrawl.io with Zapier and enhance your web monitoring capabilities:

If you're not already a PageCrawl.io user, sign up for an account.

Set up the monitoring settings for the web page you're interested in tracking. Customize the elements you want to monitor and your notification preferences.

Visit the Integrations page and click Setup on the Zapier integration. In the modal that opens, click Open on Zapier to set up the Zapier + PageCrawl.io integration.

Define the actions you want to take when a trigger event occurs. This can include sending notifications, updating other apps, or performing custom actions.

Once you're satisfied with the setup, activate your Zap, and it will start automating tasks based on changes detected by PageCrawl.io.

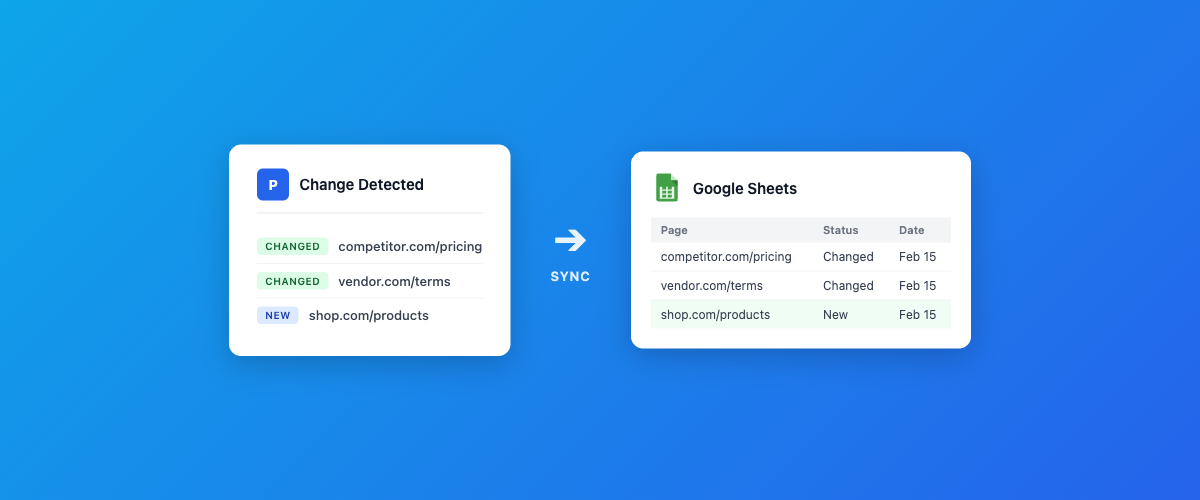

Managing and tracking changes on websites is essential for various purposes, from monitoring competitors to ensuring your web services are running smoothly. PageCrawl.io simplifies this process by allowing you to effortlessly monitor web page changes and integrate the data directly into Google Sheets. In this guide, we'll explore how to set up this powerful integration to store website change history efficiently.

Google Sheets offers a versatile and collaborative platform for storing and analyzing data. By integrating PageCrawl.io with Google Sheets, you can keep all your web page change history in one place for easy access and analysis.

Here's a step-by-step guide to help you integrate PageCrawl.io with Google Sheets and start storing website change history effortlessly:

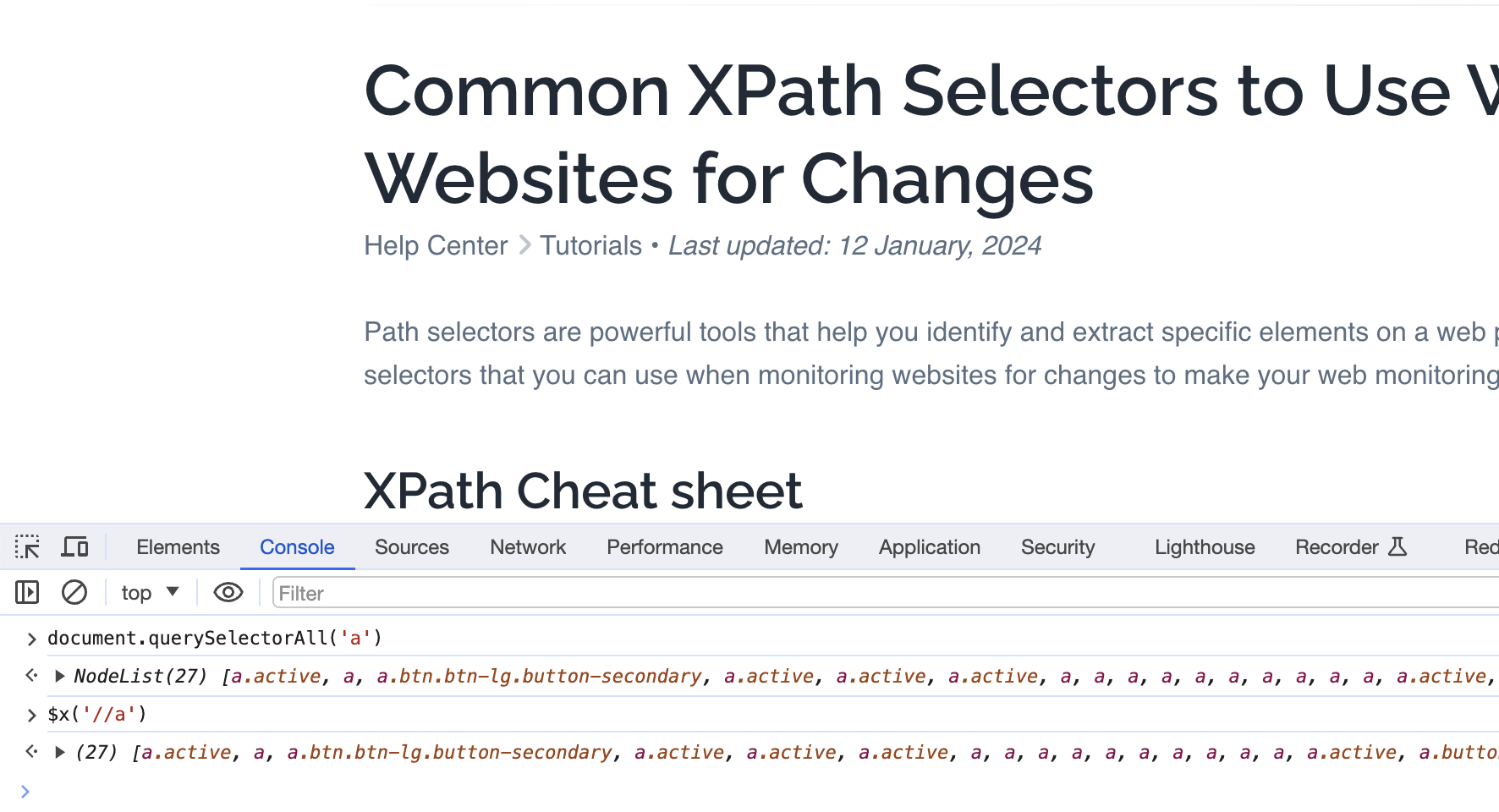

XPath selectors are powerful tools that help you identify and extract specific elements on a web page. In this guide, we'll explore common XPath selectors that you can use when monitoring websites for changes to make your web monitoring efforts more effective.

CSS Selectors are favored by many web developers as they are easy to learn if you already know CSS syntax. On the other hand, XPath Selectors offer greater power and flexibility, such as the ability to find elements that contain specific text. However, the learning curve for XPath can be steeper. If you already know CSS, you should be able to use it for most use cases. If you know neither, we recommend starting with XPath, since it is more flexible.

Here, you'll find a convenient 'cheat sheet' that comprehensively covers the most commonly used XPath selectors for your reference. We suggest taking a quick look through this list before proceeding to the Common XPath Selectors for Web Monitoring section below.

Before we start, you should familiarize yourself with some fundamental concepts to better understand the terminology and functionality. Here are a few key terms:

Attribute: An attribute provides additional information about an HTML element. It is always specified in the start tag of an element and usually comes in name/value pairs like name="value". For example, in <a href="https://example.com">, href is an attribute name and https://example.com is its value.

Element: An HTML element is an individual component of an HTML document or web page. It is written with a start tag, with an optional end tag, and content in between. For example, <p>This is a paragraph</p>; here, <p> is the start tag, </p> is the end tag, and This is a paragraph is the content.

ID: The id attribute is used to specify a unique id for an HTML element. You cannot have more than one element with the same id in an HTML document. It is used for identifying and targeting the element with CSS and JavaScript. For example, <div id="header"> defines a division with a unique id of header.

Class: The class attribute is used for specifying a class name for an HTML element. Unlike the id attribute, the same class can be used on multiple elements. This is useful for applying the same styling or behavior to different elements. For example, <span class="highlight"> assigns the highlight class to a span element, which can be targeted with CSS or JavaScript.

You might wonder where you can try a selector before pasting it into PageCrawl.io. Open your browser console and use the following commands to test your selector.

XPath

$x('//a')

CSS

document.querySelectorAll('a')

//: Selects all matching elements anywhere in the document.

/: Selects from the root element.

element: Selects elements with the specified name.

[@attribute]: Selects elements with the specified attribute.

[@attribute='value']: Selects elements with a specific attribute value.

[@attribute!='value']: Selects elements with an attribute value not equal to 'value'.

[starts-with(@attribute,'prefix')]: Selects elements with an attribute starting with 'prefix'.

[substring(@attribute, string-length(@attribute) - string-length('suffix') + 1) = 'suffix']: Selects elements with an attribute ending with 'suffix'. Note: there is no direct ends-with() function in XPath 1.0, so this workaround is needed.

[contains(@attribute,'substring')]: Selects elements with an attribute containing 'substring'.

[@attribute1='value1' and @attribute2='value2']: Selects elements that meet multiple attribute conditions.

[@attribute1='value1' or @attribute2='value2']: Selects elements that meet at least one of the attribute conditions.

not(expression): Negates a condition.

text(): Selects the text content of an element.

contains(text(),'substring'): Selects elements containing specific text.

starts-with(text(),'prefix'): Selects elements with text starting with 'prefix'.

substring(text(), string-length(text()) - string-length('suffix') + 1) = 'suffix': Selects elements with text ending with 'suffix'. Note: ends-with() is an XPath 2.0 function and is NOT supported in browsers (which only support XPath 1.0). Use this substring() workaround instead.

/parent::element: Selects the parent of the current element.

/child::element: Selects the children of the current element.

/ancestor::element: Selects ancestors of the current element.

/descendant::element: Selects descendants of the current element.

[position()=1]: Selects the first matching element.

[last()]: Selects the last matching element.

[position()>2]: Selects elements after the first two.

*: Selects all elements.

element[*]: Selects elements with at least one child element.

element[@*]: Selects elements with at least one attribute.

element[contains(@attribute,'value')]: Selects elements with attributes containing 'value'.

count(expression): Counts the number of matching elements.

sum(expression): Sums numeric values within matching elements.

concat(string1, string2): Combines two strings.

substring(string, start, length): Extracts a substring.

normalize-space(string): Removes leading/trailing spaces and collapses internal spaces.

Here are some common XPath selectors that you can employ when monitoring websites for changes. Initially, basic XPath selectors will be covered, and we will then proceed to more advanced examples.

XPath allows you to target specific text elements on a webpage, which is useful for tracking changes in content, headlines, or paragraphs. For example:

//h1 // Selects all h1 headers on the page.

//p // Selects all paragraph elements.

//div[@class='content'] // Selects text within div elements with a specific class.

XPath selectors help you monitor links, whether you want to track all links on a page, external links, or links with specific text. For instance:

//a[@href] // Selects all links with an href attribute.

//@href[not(contains(.,'example.com'))] // Selects external links (replace 'example.com' with the target domain).

//a[contains(text(),'Download')] // Selects links with specific anchor text, case-sensitive.

To view more examples with links, visit Tracking links with text tutorial.

To monitor images on a webpage, you can use XPath selectors to identify images by their source (src) attribute or alt text. For example:

//img // Selects all image elements.

//img/@src // Selects the src attribute of all images.

//img[contains(@alt,'logo')] // Selects images with specific alt text.

XPath selectors are particularly useful for extracting data from tables, which are commonly used on websites for displaying structured information. For example:

//table // Selects all tables on the page.

//table//tr // Selects all table rows.

//table//tr/td[2] // Selects the second column (td) in all rows.

You can target elements with specific attributes or attributes containing certain values using XPath selectors. For instance:

//*[@id='specificId'] // Selects elements with a specific ID attribute.

//*[@class='highlight'] // Selects elements with a specific class attribute.

To monitor elements when their class or ID contains a part of text, you can use XPath selectors with the contains() function. For example:

//*[contains(@class, 'partial-text')] // Selects elements with a class containing 'partial-text'.

//*[contains(@id, 'partial-text')] // Selects elements with an ID containing 'partial-text'.

//input[starts-with(@name, 'user_')] // Selects input elements with names starting with 'user_'.

//input[contains(@id, 'search')] // Selects input elements with IDs containing 'search'.

//button[contains(@class, 'btn-')] // Selects buttons with class names containing 'btn-'.

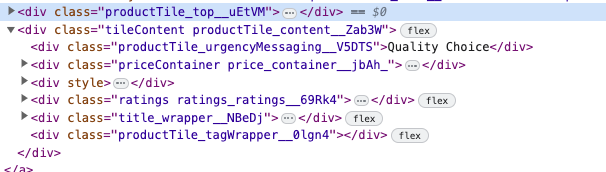

This XPath selector is particularly valuable, especially when dealing with CSS classes that include unpredictable or random text fragments.

For instance, suppose you want to extract the text 'Quality Choice', as shown in the image above. The element's CSS class, productTile_urgencyMessaging__V5DTS, includes a suffix (__V5DTS) that is prone to change with each website update.

To avoid having to update the selector each time the website updates, you can use the XPath contains() function to select the element.

//*[contains(@class, 'productTile_urgencyMessaging')] // Retrieve 'Quality Choice' text

XPath supports logical operators for combining conditions. This is particularly useful for complex selections. For example:

//a[@class='external' or @class='external-link'] // Selects links with class 'external' or 'external-link'.

//div[@class='important' and contains(text(),'Alert')] // Selects divs with class 'important' containing 'Alert'.

You can create complex XPath expressions by combining multiple conditions and functions. This provides immense flexibility in your selections. For example:

//div[@class='content' and (contains(text(),'Important') or contains(text(),'Alert'))]

//table[not(@class='hidden')]/tbody/tr[td[2]='Completed']/td[3]
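The second expression packs several conditions into one line, so it may help to see the same logic written out step by step. The sketch below walks a small, well-formed sample with Python's standard library instead of evaluating the XPath itself; the sample markup and the function name are invented for illustration, and real-world HTML is often not well-formed enough for this parser.

```python
import xml.etree.ElementTree as ET

# Invented sample: one hidden table and one visible table.
SAMPLE = """<div>
<table class="hidden"><tbody>
<tr><td>Task 0</td><td>Completed</td><td>ignored</td></tr>
</tbody></table>
<table><tbody>
<tr><td>Task 1</td><td>Completed</td><td>Alice</td></tr>
<tr><td>Task 2</td><td>Pending</td><td>Bob</td></tr>
</tbody></table>
</div>"""


def third_cells_of_completed_rows(markup: str) -> list:
    """Mimic //table[not(@class='hidden')]/tbody/tr[td[2]='Completed']/td[3]."""
    results = []
    root = ET.fromstring(markup)
    for table in root.iter("table"):
        if table.get("class") == "hidden":        # not(@class='hidden')
            continue
        for tbody in table.findall("tbody"):      # /tbody
            for row in tbody.findall("tr"):       # /tr
                cells = row.findall("td")
                # tr[td[2]='Completed']: XPath positions are 1-based.
                if len(cells) >= 3 and "".join(cells[1].itertext()) == "Completed":
                    results.append("".join(cells[2].itertext()))  # /td[3]
    return results


owners = third_cells_of_completed_rows(SAMPLE)
```

Only the row in the visible table whose second cell reads 'Completed' contributes its third cell, which mirrors what the XPath would select.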

To leverage these advanced XPath selectors effectively for website monitoring, you can integrate them with web monitoring tools such as PageCrawl.io:

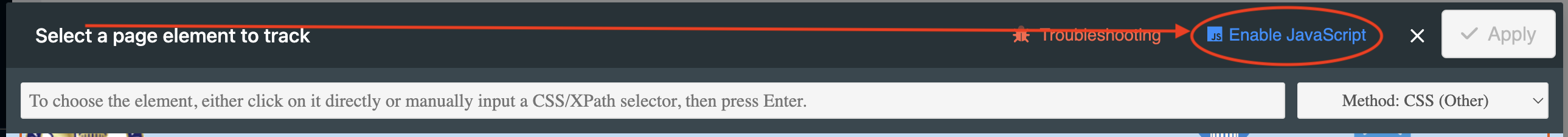

Occasionally, you might encounter challenges when using the Visual Selector tool. This guide outlines some common problems and provides solutions to help you resolve them.

Sometimes a page loads but is missing some or all of its styles or elements.

Solution: To work around this issue, try enabling or disabling JavaScript. If that does not help, you can always copy and paste the selector from your browser window.

In some instances, the Visual Selector tool may have difficulty loading certain pages. Our development team is continually working to enhance its compatibility. You may contact support to report a page that is not working.

Solution: If you encounter this issue, you can try pasting the selector directly from your web browser to work around the problem.

The Visual Selector tool may generate CSS selectors that become obsolete when a website updates. In certain cases, websites intentionally modify CSS selectors or add suffixes to thwart page monitoring tools like PageCrawl.

Solution: For example, a selector like .productTile_urgencyMessaging__V5DTS might include a suffix like __V5DTS that is prone to change. To avoid having to update the selector each time the website changes, you can use the XPath contains() function to match on the stable part of the class name:

//*[contains(@class, 'productTile_urgencyMessaging')]

See the XPath tutorial for common selectors for more information on how to write an XPath selector yourself.
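To see why matching on the stable part of a class name survives a suffix change, here is a small sketch that mimics the contains() check using Python's built-in HTML parser. It is an illustration rather than how PageCrawl evaluates selectors; the class and function names are hypothetical, and the second suffix (__X9KQ2) is made up.

```python
from html.parser import HTMLParser

# Tags that never have a closing tag, so they must not affect nesting depth.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


class ClassFragmentText(HTMLParser):
    """Collect text inside elements whose class attribute contains a fragment."""

    def __init__(self, fragment: str):
        super().__init__()
        self.fragment = fragment
        self.depth = 0      # > 0 while inside a matching element
        self.chunks = []    # text collected from matching elements

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        if self.depth:
            self.depth += 1
        elif self.fragment in (dict(attrs).get("class") or ""):
            self.depth = 1

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self.depth:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth:
            self.chunks.append(data)


def text_where_class_contains(html: str, fragment: str) -> str:
    parser = ClassFragmentText(fragment)
    parser.feed(html)
    parser.close()
    return "".join(parser.chunks).strip()


before = '<div><span class="productTile_urgencyMessaging__V5DTS">Quality Choice</span></div>'
after = '<div><span class="productTile_urgencyMessaging__X9KQ2">Quality Choice</span></div>'
```

Calling `text_where_class_contains` with the fragment 'productTile_urgencyMessaging' finds the text in both versions, whereas an exact class match written against the first version would stop working after the suffix changed.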

We offer four selector generation methods:

By default, you can use the CSS selector method. In some cases, generated CSS may be more effective on certain websites, while generated XPath works better on others. If you have expertise in writing CSS or XPath selectors, you have the flexibility to choose your preferred method and optimize it as necessary.

Looking to learn how to write an XPath selector yourself or explore common XPath selectors? Check out our XPath tutorial for common selectors. As a tip, you can also ask ChatGPT to help you create a CSS/XPath selector.

False positive notifications can be frustrating when monitoring websites. These alerts signal changes that are either irrelevant or nonexistent, leading to wasted time and reduced efficiency.

When using PageCrawl to monitor website changes, the rate of false-positive alerts is typically low if pages are correctly configured. However, some detected changes may not be relevant to your specific monitoring needs. This comprehensive guide will show you how to effectively reduce unnecessary alerts and ensure you only receive notifications for meaningful changes.

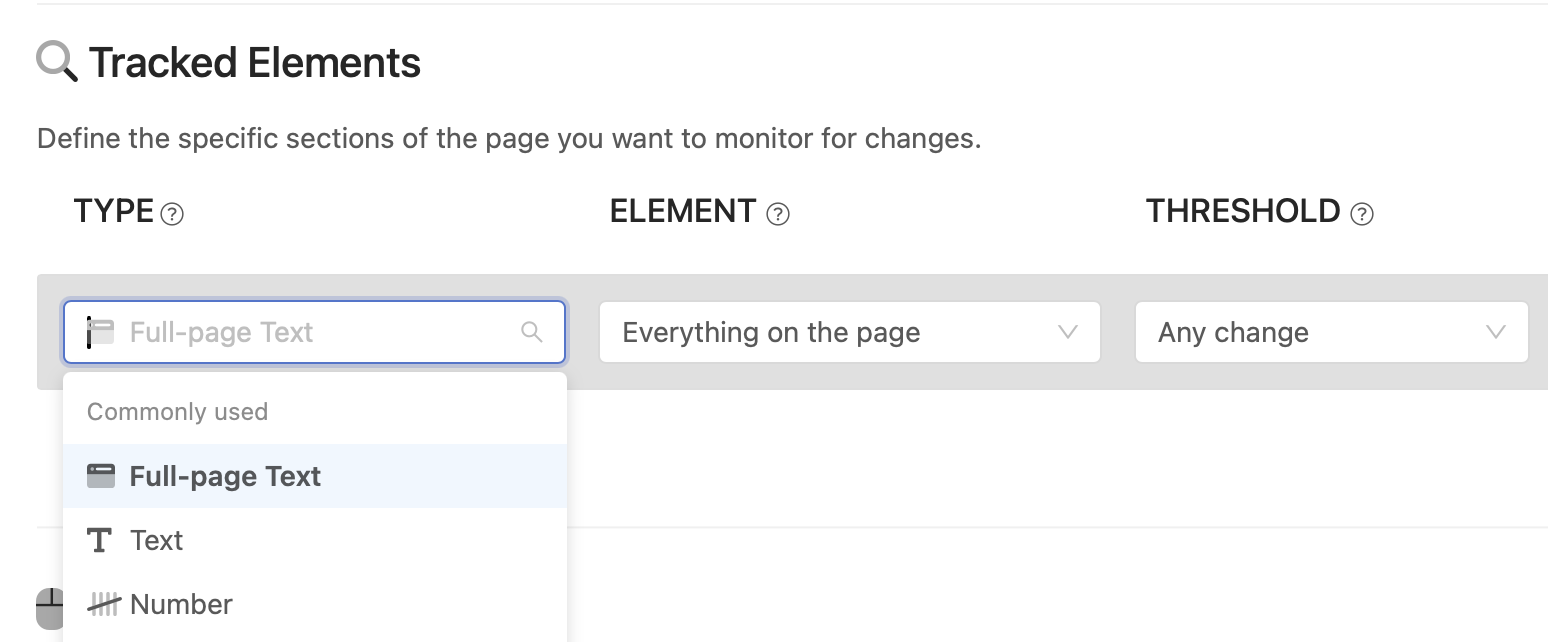

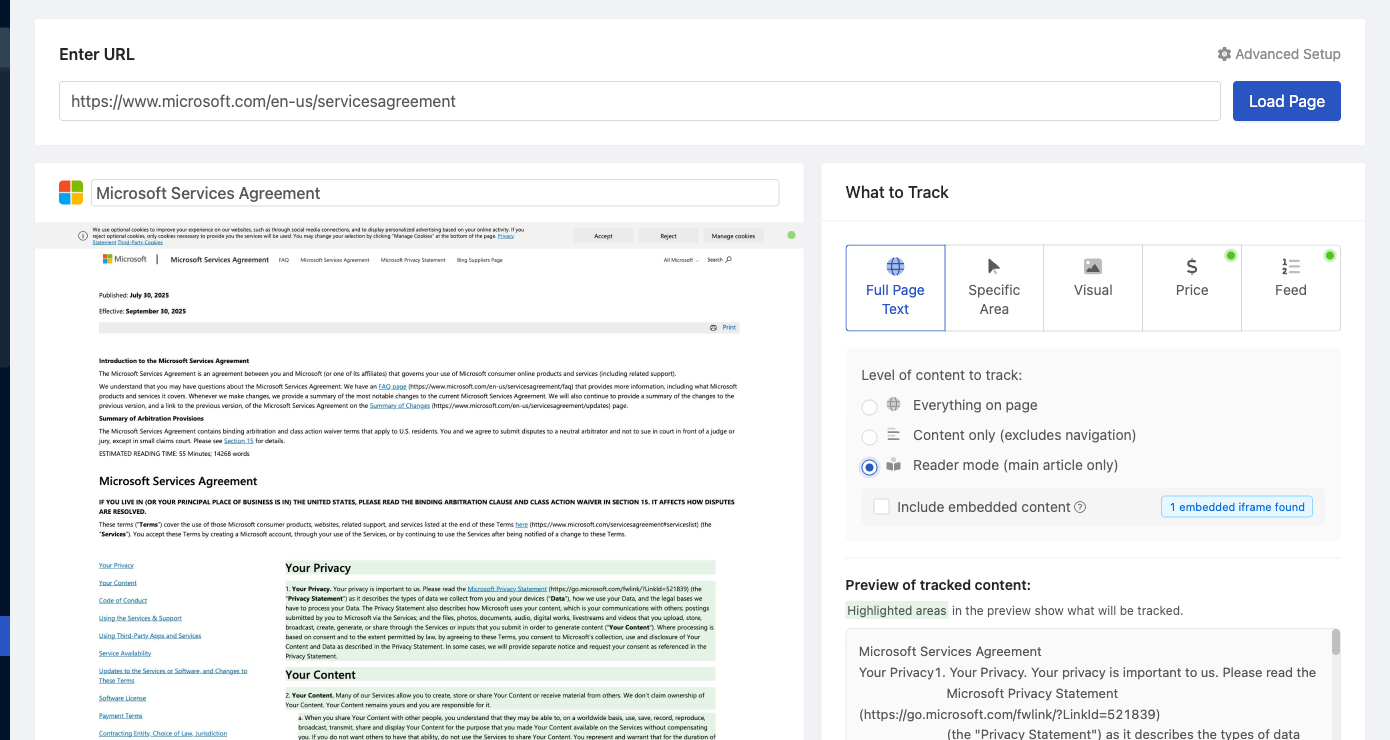

Selecting the wrong type of element to monitor is one of the most common causes of false positives. With multiple monitoring options available, it's easy to get overwhelmed, especially if you're new to website monitoring.

Begin by tracking the text of the full page. This approach works best as a starting point for most monitoring scenarios, particularly when you need to monitor a large number of websites. If you notice frequent false positives, you can always revisit your setup and focus on specific page sections instead.

Monitoring Content Only is the first step to reduce false positives. This option filters out common page elements like headers, navigation menus, sidebars, and footers, focusing only on the main content area of the page. It's an effective way to eliminate noise from less relevant sections while still capturing most important content changes.

Reader mode takes content filtering a step further, similar to the reader mode you may have used on your phone. This mode monitors only the primary article text, using advanced algorithms to identify and extract the core content while filtering out everything else.

Reader mode is more restrictive than "Content Only" and works best for:

However, Reader mode may not work well on:

Note: If you find that important content changes are being missed, consider switching back to "Content Only" for broader coverage.

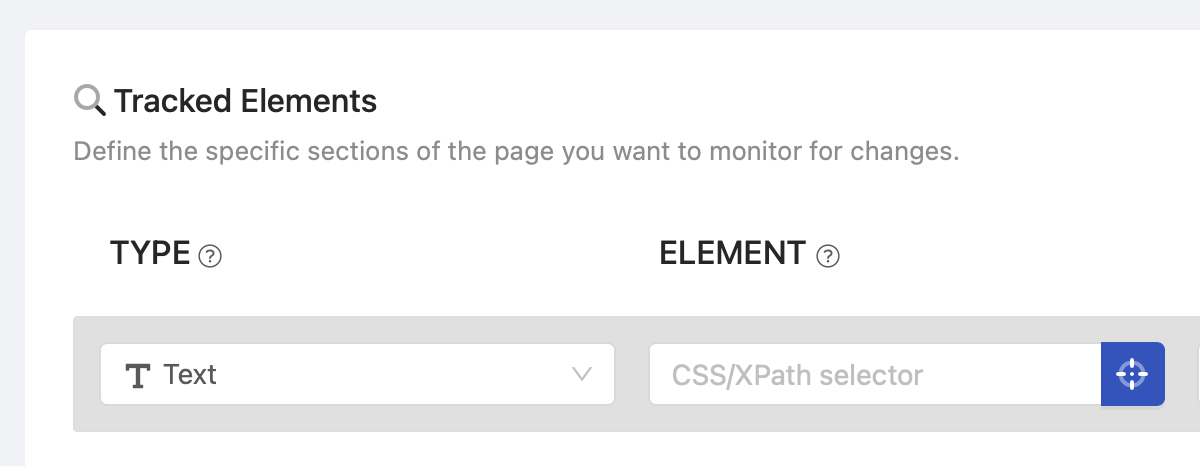

If tracking "Content Only" or "Reader mode" still results in unnecessary notifications, switch to the "Text" tracked element type and use our "Visual Selector" (click on the blue button) to pinpoint the exact area you want to monitor. Be aware that significant page redesigns can cause these selectors to stop working.

Advanced Tips:

Websites frequently undergo minor updates, such as date changes, without substantial alterations to their content. These small updates can create unnecessary alerts that distract from meaningful changes. Here's how to avoid them.

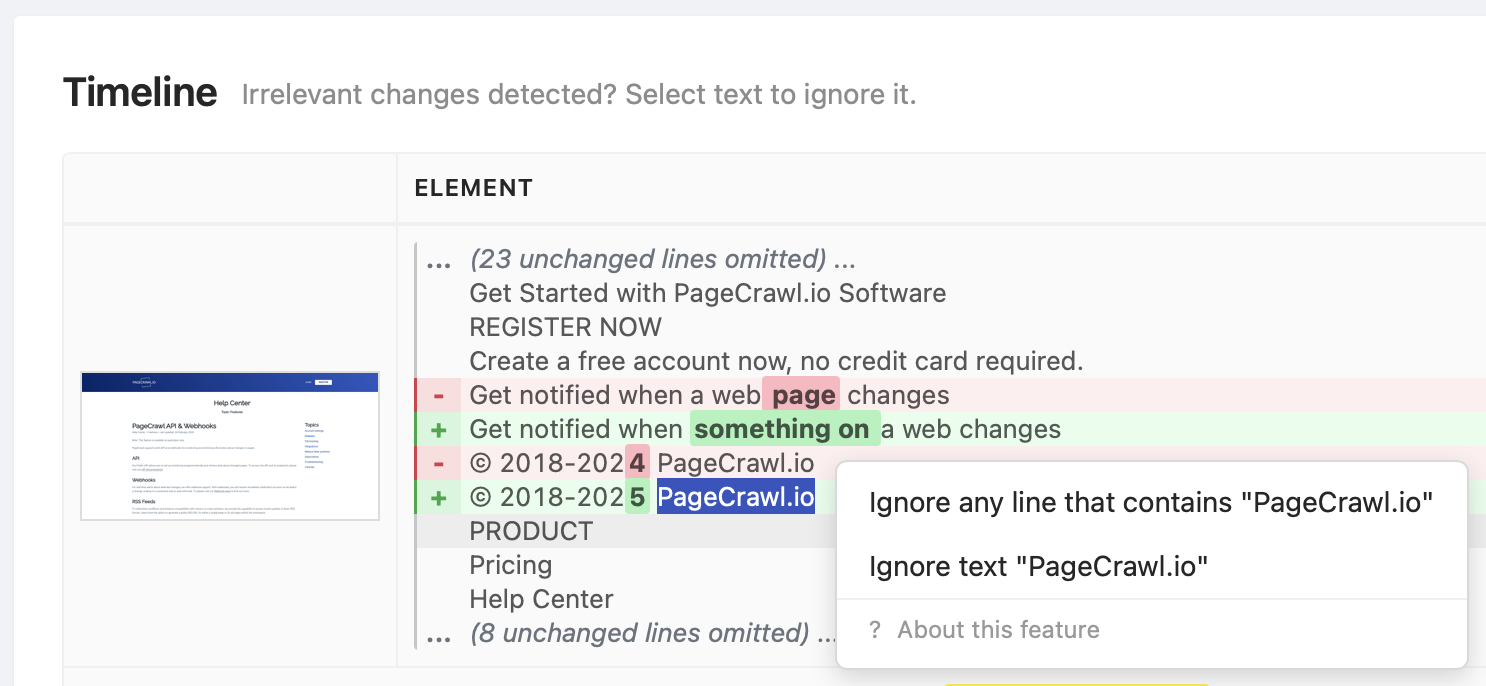

In Timeline, when reviewing detected changes, you can select irrelevant text and ignore any line that contains the selected text. For example, if a page has a section with a latest news headline like "Latest News: Bitcoin has reached a new all-time high," you can select "Latest News" and all lines containing this text will be ignored in future change detections. If you monitor multiple pages on the same website, this will be applied to all pages with the same domain name.

Alternatively, you can add an "Ignore Text" condition or create a global filter (update your team settings) to ignore it across all pages. Use % as a wildcard to indicate that any line containing a %specific word% or sentence should be ignored.
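The effect of a wildcard filter can be sketched roughly as follows. This is only an illustration under assumptions: we assume % matches any run of characters and that a pattern is compared against a whole line. The function names are hypothetical and PageCrawl's actual matching rules may differ.

```python
import re


def wildcard_to_regex(pattern):
    """Turn a %-wildcard pattern into a regex that must match a whole line."""
    parts = [re.escape(part) for part in pattern.split("%")]
    return re.compile(".*".join(parts))


def drop_ignored_lines(text, patterns):
    """Return text without the lines that fully match any pattern."""
    compiled = [wildcard_to_regex(p) for p in patterns]
    kept = [
        line for line in text.splitlines()
        if not any(rx.fullmatch(line.strip()) for rx in compiled)
    ]
    return "\n".join(kept)


old = "Latest News: Bitcoin has reached a new all-time high\nPrice: $20"
new = "Latest News: Markets closed early today\nPrice: $20"
patterns = ["%Latest News%"]

# After filtering, both snapshots are identical, so no change would be reported.
print(drop_ignored_lines(old, patterns) == drop_ignored_lines(new, patterns))
```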

If a specific page area keeps triggering change detections, add a "Remove page element" action and select an area to suppress it completely.

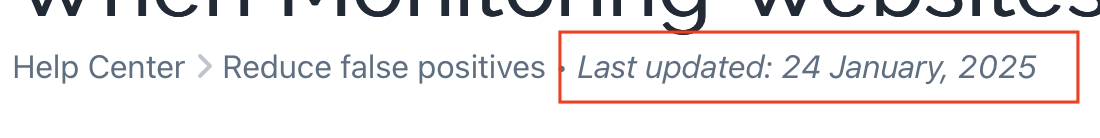

Use the "Remove dates" action to replace dates with placeholders like [DATE REMOVED]. This prevents alerts for irrelevant updates like "updated 3 minutes ago" or publication timestamps such as "Updated at: 2025-02-25" that change frequently even when nothing was updated on the page.
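As a rough sketch of the idea, the snippet below replaces two common date formats with a placeholder before comparison. The two patterns are illustrative assumptions only; the real "Remove dates" action may recognize different or additional formats.

```python
import re

# Two illustrative patterns only; real pages use many more date formats.
ISO_DATE = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")
RELATIVE_TIME = re.compile(
    r"\b\d+\s+(?:second|minute|hour|day|week|month|year)s?\s+ago\b",
    re.IGNORECASE,
)


def remove_dates(text, placeholder="[DATE REMOVED]"):
    """Replace recognized dates so they no longer differ between checks."""
    text = ISO_DATE.sub(placeholder, text)
    return RELATIVE_TIME.sub(placeholder, text)


print(remove_dates("Updated at: 2025-02-25"))
print(remove_dates("updated 3 minutes ago"))
```

Because "updated 3 minutes ago" and "updated 7 minutes ago" both become the same placeholder text, the comparison no longer sees a difference.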

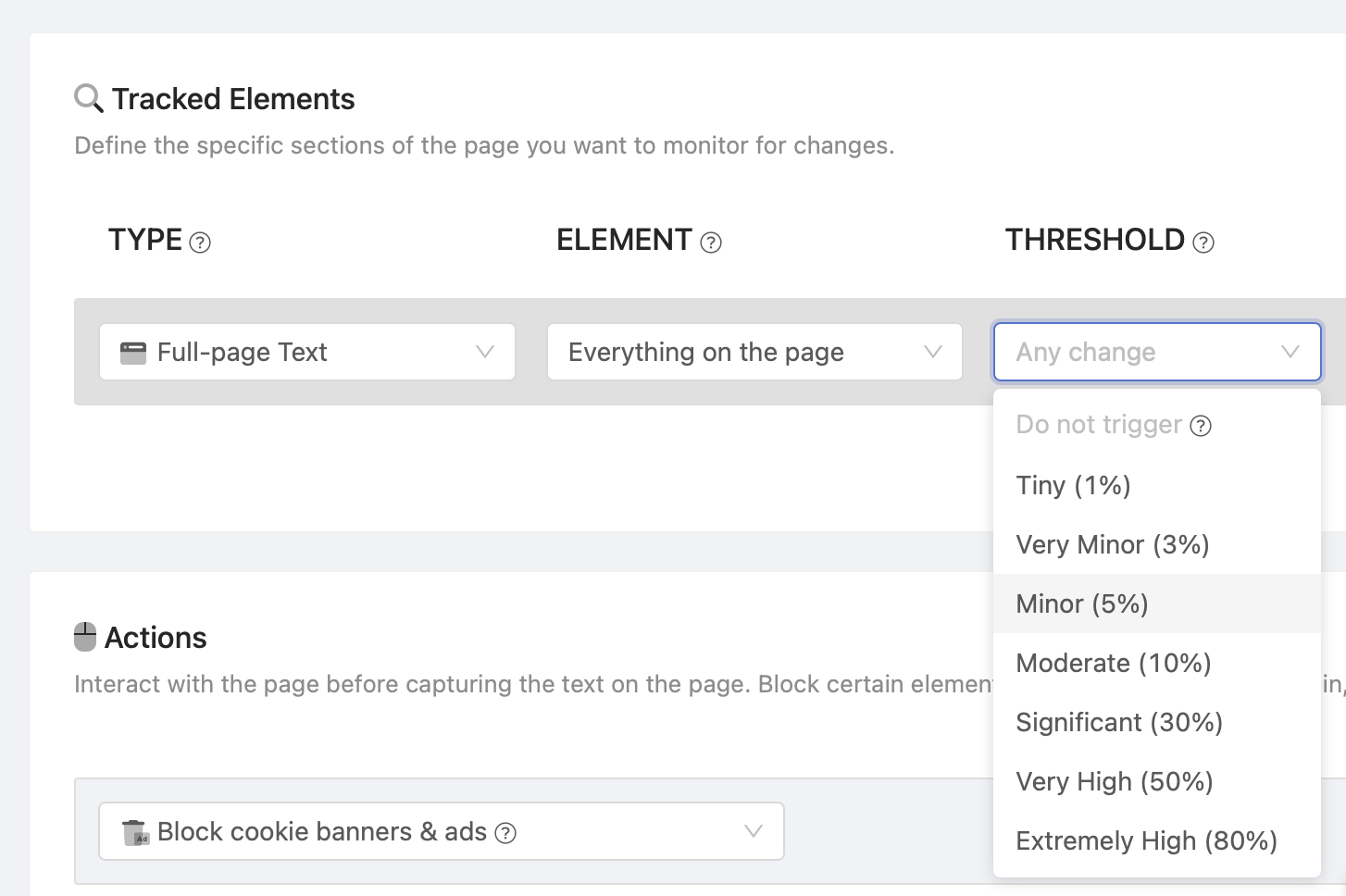

You can configure a threshold to be alerted only when significant changes occur (e.g., when more than 1% of the page content changes). Before setting the threshold, review historic changes in Timeline to avoid setting it too high and missing important updates.
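To get a feel for what a percentage threshold does, here is a minimal sketch that estimates how much of a page changed and compares it to a limit. The similarity measure (Python's difflib) and the function names are assumptions made for illustration; PageCrawl may calculate the changed percentage differently.

```python
import difflib


def changed_percent(old_text, new_text):
    """Approximate share of the text that differs, as a percentage (0-100)."""
    # autojunk=False keeps frequently repeated characters in the comparison.
    matcher = difflib.SequenceMatcher(None, old_text, new_text, autojunk=False)
    return (1.0 - matcher.ratio()) * 100.0


def should_alert(old_text, new_text, threshold_percent=1.0):
    """Alert only when more than threshold_percent of the text changed."""
    return changed_percent(old_text, new_text) > threshold_percent


# Hypothetical page listing 100 items.
old = "\n".join(f"Item {i}: in stock" for i in range(100))
one_item_changed = old.replace("Item 42: in stock", "Item 42: sold out")
all_items_changed = old.replace("in stock", "sold out")

print(should_alert(old, one_item_changed))
print(should_alert(old, all_items_changed))
```

In this example a single changed item stays below the 1% threshold, which shows why reviewing past changes first matters: a small but important edit can be filtered out if the threshold is too high.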

If numeric changes aren't relevant to you, you can add an "Ignore numbers" condition in the "Conditions & Filters" section to prevent number changes from triggering change detections. This is particularly useful for pages with counters, view counts, or other metrics that change frequently.
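Conceptually, ignoring numbers means masking them before the old and new text are compared. The sketch below shows the idea; the number pattern and function names are assumptions, not the exact rules used by the "Ignore numbers" condition.

```python
import re

# Digits, optionally grouped with "." or "," (for example 1,204 or 3.5).
NUMBER = re.compile(r"\d+(?:[.,]\d+)*")


def mask_numbers(text, placeholder="#"):
    return NUMBER.sub(placeholder, text)


def changed_ignoring_numbers(old_text, new_text):
    """Report a change only if the text differs once numbers are masked."""
    return mask_numbers(old_text) != mask_numbers(new_text)


print(changed_ignoring_numbers("1,204 views", "1,310 views"))
print(changed_ignoring_numbers("12 views", "12 comments"))
```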

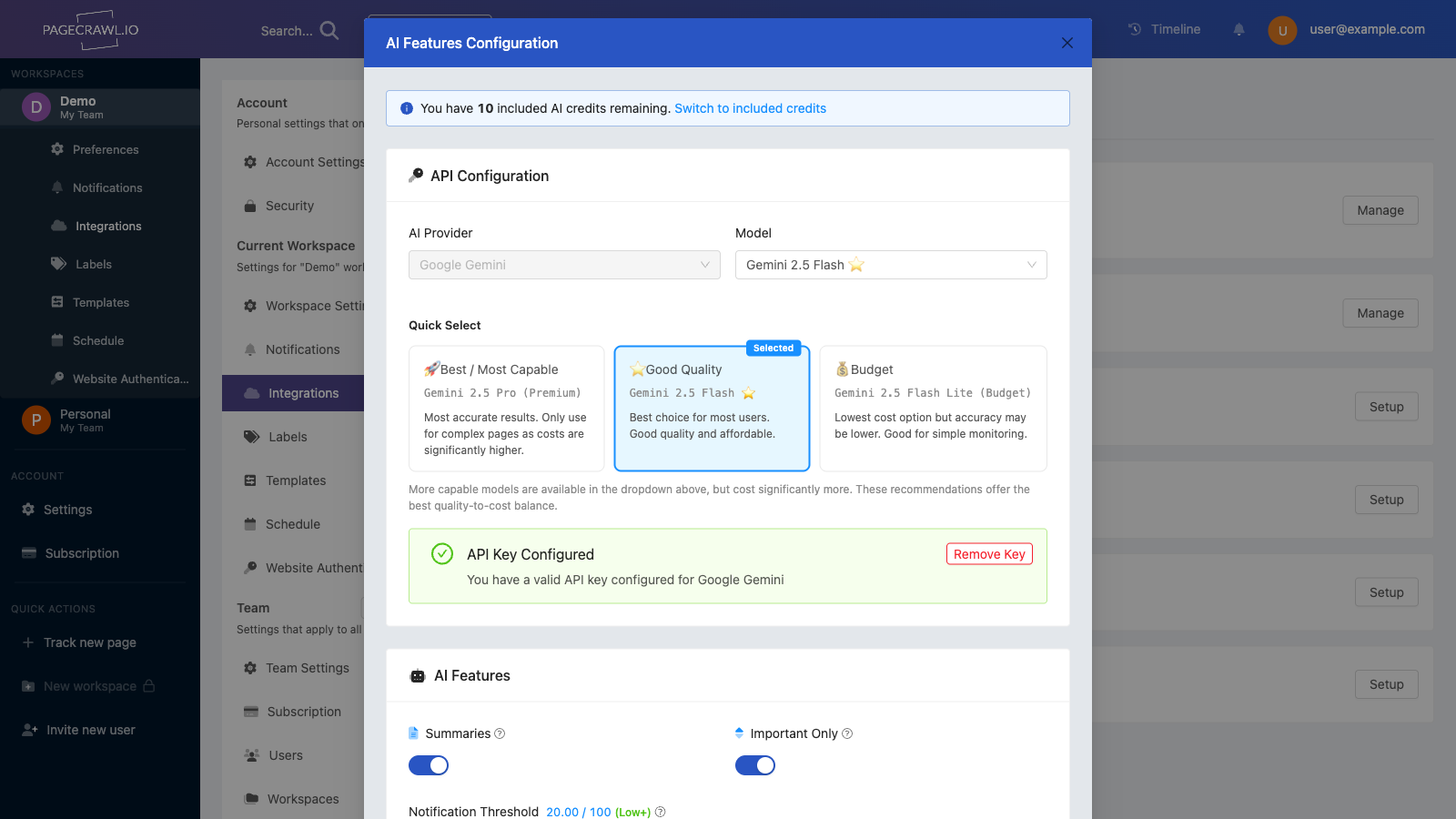

PageCrawl uses AI to analyze every detected change and help you focus on what matters most.

When a change is detected, our AI:

Use the feedback buttons to tell us which changes matter to you:

You can provide feedback:

Dynamic websites load or update parts of their content after the initial page load. For example, prices, stock availability, or user-specific recommendations might load dynamically, leading to unnecessary notifications. Here's how to handle these scenarios.

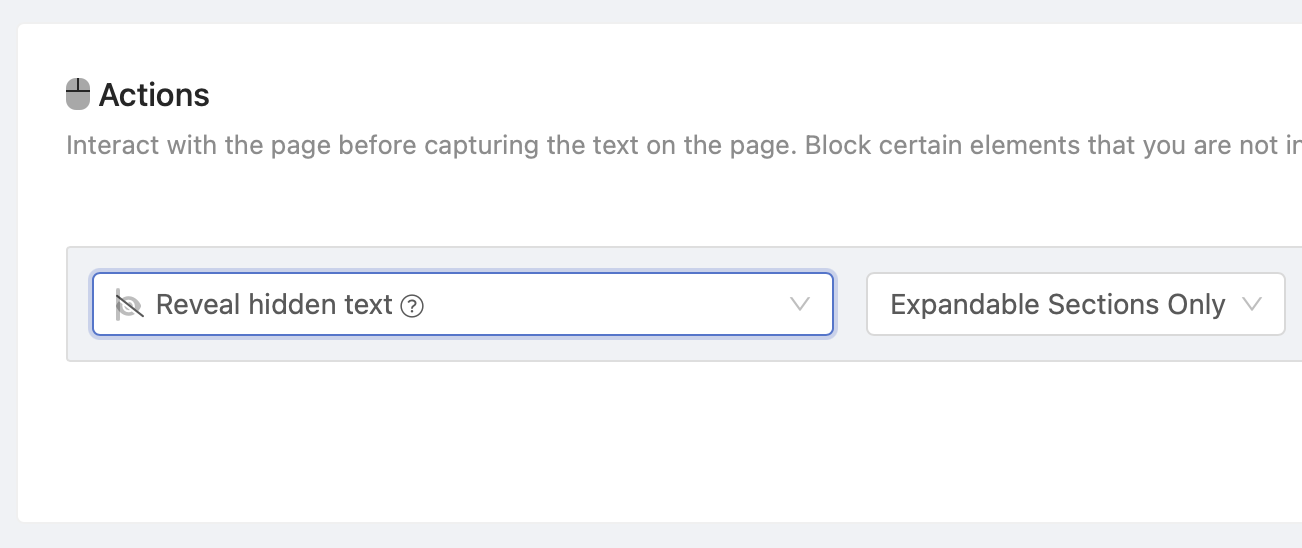

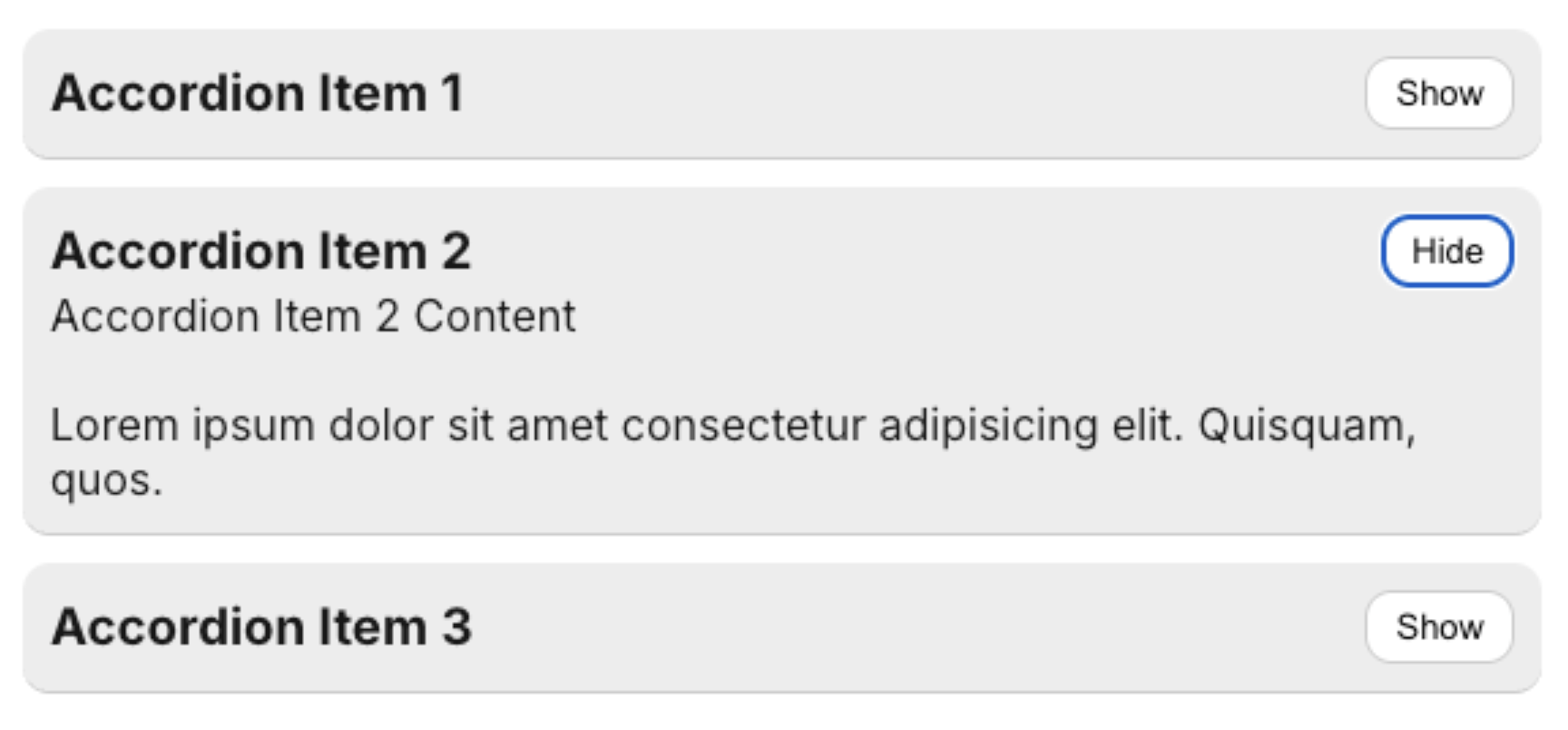

PageCrawl only captures text that is visible when in "Full-page text" mode. This can be problematic if the page contains collapsible sections (accordions, panels, etc.) that are only revealed when clicked.

To address this, add the "Reveal hidden text" action, which will automatically expand any collapsed sections on the page before capturing content.

PageCrawl waits until the page is fully loaded. However, in some situations, certain page elements only appear after additional time or after specific actions are executed (clicking, form submission, redirects, etc.).

You can add wait actions to ensure the page is completely ready before capturing content. Multiple "Wait" actions are available:

Note: Actions will wait up to 15 seconds before continuing (10 seconds for redirect waits). To avoid unnecessarily long wait times, different subscription tiers have varying timeout limits: Free (45 seconds), Standard (90 seconds), Enterprise (180 seconds), Ultimate (180 seconds). If loading takes longer than the timeout limit, the page will result in a timeout error.

Frequently updated areas like footers, headers, and sidebars can result in irrelevant notifications. These sections often include changing elements such as timestamps, menus, or recent updates that are unrelated to the main content.

Add a "Remove page element" action with the selector nav,aside,footer,.footer,header to exclude them. This directly alters the page, and these areas will not be visible in screenshots. You may want to use this approach when using a Tracked Element other than "Full page text."

Alternatively, track only the main content area with the main selector. If no such element exists (e.g., the website is not semantically structured), you will see a "No selector found" error.
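The effect of excluding nav,aside,footer,.footer,header can be sketched with Python's standard HTML parser. This is a simplified illustration that assumes well-formed markup; the class and function names are hypothetical and it does not reflect how PageCrawl removes elements in a real browser.

```python
from html.parser import HTMLParser

VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}
EXCLUDED_TAGS = {"nav", "aside", "footer", "header"}
EXCLUDED_CLASSES = {"footer"}


class MainTextExtractor(HTMLParser):
    """Collect text that is not inside an excluded element."""

    def __init__(self):
        super().__init__()
        self.skip_depth = 0  # greater than 0 while inside an excluded element
        self.chunks = []

    def _is_excluded(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        return tag in EXCLUDED_TAGS or bool(EXCLUDED_CLASSES & set(classes))

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return  # void tags have no closing tag, so they never nest
        if self.skip_depth or self._is_excluded(tag, attrs):
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in VOID_TAGS:
            return
        if self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        if not self.skip_depth and data.strip():
            self.chunks.append(data.strip())


def extract_main_text(html):
    parser = MainTextExtractor()
    parser.feed(html)
    parser.close()
    return parser.chunks


sample = ('<header>Site menu</header><main><p>Price: $20</p></main>'
          '<div class="footer">Updated 12:01</div><footer>Copyright 2025</footer>')
print(extract_main_text(sample))
```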

Occasionally, a monitored page may fail to load properly, leading to blank content or error messages. While PageCrawl detects these situations in most cases, it can still trigger false positives. This often happens when a website doesn't report errors properly, relies on external data sources that fail to load, or when dynamic content is not displayed correctly.

Use the "Mark Check as Failed When" action to flag a page as failed without recording changes. For example:

Additionally, customize the "Report Errors" setting to trigger only after a certain number of consecutive failures (e.g., after 10 consecutive failed checks) to avoid being overwhelmed by temporary issues.
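The idea of reporting only after a run of consecutive failures can be sketched as a simple counter. We assume here that one report is sent at the moment the streak reaches the limit and that any successful check resets the streak; the function name is hypothetical.

```python
def checks_that_report(results, report_after=10):
    """Return the indexes of checks at which an error report would be sent.

    results: iterable of booleans, True for a successful check.
    """
    streak = 0
    reported = []
    for index, ok in enumerate(results):
        streak = 0 if ok else streak + 1
        if streak == report_after:
            reported.append(index)
    return reported


# Three failures, a recovery, then ten failures in a row.
history = [False] * 3 + [True] + [False] * 10
print(checks_that_report(history, report_after=10))
```

The first three failures never trigger a report because the successful check resets the streak before it reaches ten.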

If you check pages frequently, ensure the "Delay when Failed" setting is deactivated (in Advanced preferences) to prevent page failures from reducing the page-checking frequency.

Websites may display varying content based on user sessions, location, or elements that frequently appear and disappear. This can lead to false positive notifications.

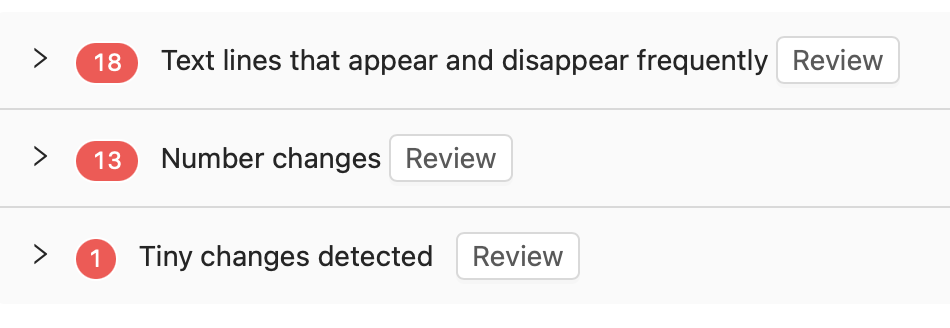

Once sufficient sample data is collected, PageCrawl will automatically suggest filters to reduce false triggers. Look for the "Frequently changing content detected" panel on your monitored page.

You can:

For changes that slip through, use the thumbs down button to mark them as noise.

By default, PageCrawl enables "Block cookie banners and ads" and "Hide website overlays and popups" actions to reduce unnecessary notifications. However, you can disable these settings if not needed.

Cookie banners often appear dynamically after the page loads, altering the content and triggering false positives.

Overlay popups, such as ads or newsletter subscription prompts, may appear sporadically and interfere with accurate monitoring.

These default settings simplify the monitoring process but can be adjusted based on your specific needs.

Sometimes pages use animations to reveal content sections that only appear as you scroll down the page.

By implementing these strategies, you can significantly reduce false positive notifications when monitoring websites with PageCrawl.

Quick wins for reducing false positives:

Remember:

With proper configuration and ongoing fine-tuning, you'll achieve efficient and reliable website change monitoring.

If you're still experiencing issues with false positives after trying these solutions, don't hesitate to contact our support team for personalized assistance with your specific monitoring setup.

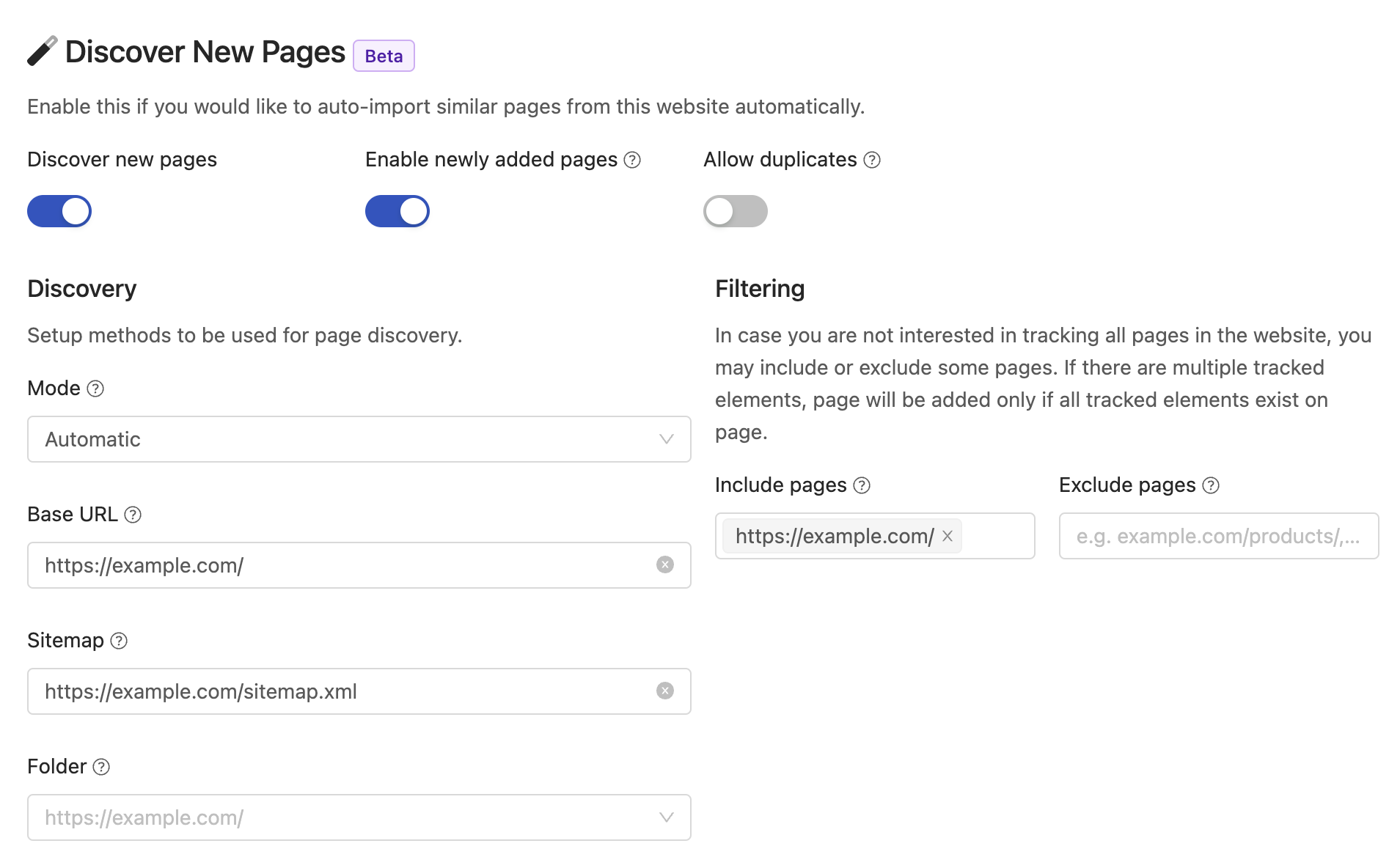

]]>PageCrawl.io is a powerful website changes monitoring tool designed to help you keep track of all the pages within your website effortlessly. One of its standout features is the ability to crawl and automatically discover all pages within a website, much like Google's indexing process. This article will guide you through the process of utilizing PageCrawl.io to effectively track and manage all pages within your website.

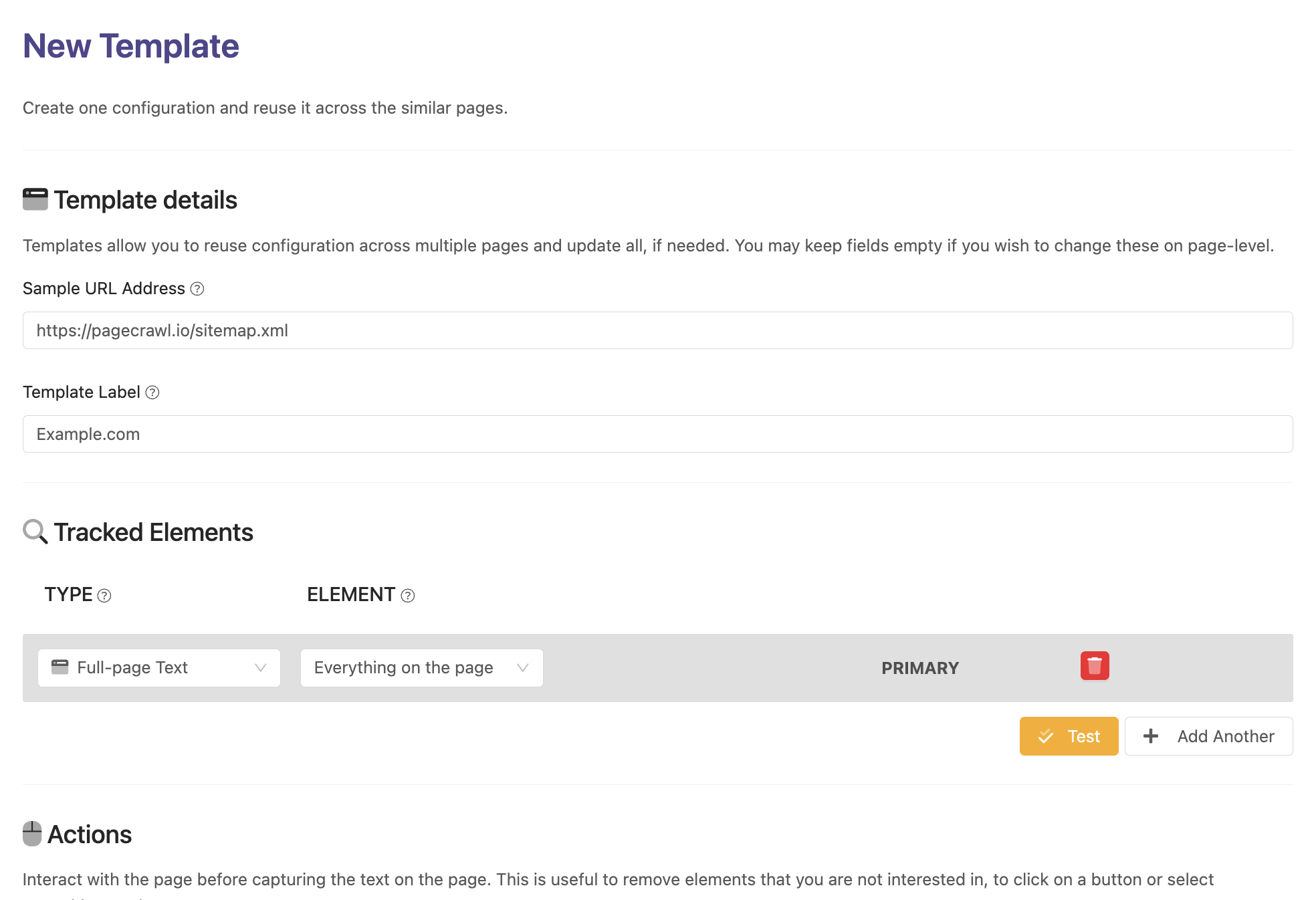

Creating a template within PageCrawl.io is the initial step to enable auto-discovery for tracking all pages within a website.

Create a Template:

Provide Sample URL: The sample URL helps to automatically set up common parameters such as the Base Discovery URL and filters, and to detect sitemaps within the site.

Activate Automatic Page Discovery: Enable this feature to automatically uncover new pages as they're added to the site.

Choose Your Crawling Method:

Sitemap only: Perfect if the tracked site has a sitemap.xml file detailing all pages.

Homepage links only: Start the crawl from your provided URL, discovering pages through links on the homepage.

Follow links 2 levels deep / Follow links 3 levels deep: Opt for an extensive exploration, ensuring maximum page coverage by following links across multiple levels. These options are available on Enterprise/Ultimate plans only.

Automatic (recommended): Uses all available methods for page discovery.
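To show what following links a fixed number of levels deep means, here is a minimal sketch of a depth-limited, breadth-first discovery over an in-memory link map. The link map stands in for fetching real pages, and the function name and sample paths are hypothetical; this is not PageCrawl's crawler.

```python
from collections import deque


def discover(start_url, links, max_depth):
    """Breadth-first discovery up to max_depth link hops from start_url.

    links: dict mapping a URL to the URLs it links to (stand-in for fetching).
    """
    seen = {start_url}
    queue = deque([(start_url, 0)])
    while queue:
        url, depth = queue.popleft()
        if depth == max_depth:
            continue  # do not follow links beyond the depth limit
        for target in links.get(url, []):
            if target not in seen:
                seen.add(target)
                queue.append((target, depth + 1))
    return seen


# Hypothetical site structure.
site = {
    "/": ["/products", "/blog"],
    "/products": ["/products/a"],
    "/products/a": ["/products/a/reviews"],
}

for depth in (1, 2, 3):
    print(depth, sorted(discover("/", site, depth)))
```

With a depth of 1 only pages linked from the start page are found, which corresponds to the homepage-links approach; each extra level reaches pages one more click away.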

Configuration: Fine-tune additional settings like tracked elements to monitor, update frequency, and specific directories for inclusion or exclusion.

Apply and Save: Save your template settings and apply them to the relevant projects within your PageCrawl.io account.

Wait for newly discovered pages to appear in your PageCrawl.io account.

Once your template is in place, PageCrawl.io systematically discovers and indexes all available pages within your website.

Review Discovered Pages: Once page discovery completes, navigate through a detailed list of discovered URLs within the dashboard.

Customized Monitoring: Set up tailored monitoring for specific pages or sections, configuring alerts to notify you of any modifications.

Content Change Insights: Review content changes over time to spot updates, removals, or additions across your monitored pages.

Optimization: Employ the insights gathered to optimize your website, refining user experience, enhancing SEO strategies, and rectifying any issues spotted during the crawl.

PageCrawl.io's automatic page discovery feature simplifies the process of monitoring all pages within a website. By following these steps, you can efficiently manage, monitor, and stay updated on your website's content, ensuring an informed approach to website management.

For further guidance or inquiries, consult PageCrawl.io's support resources or reach out to their customer service team.

Happy tracking!

]]>

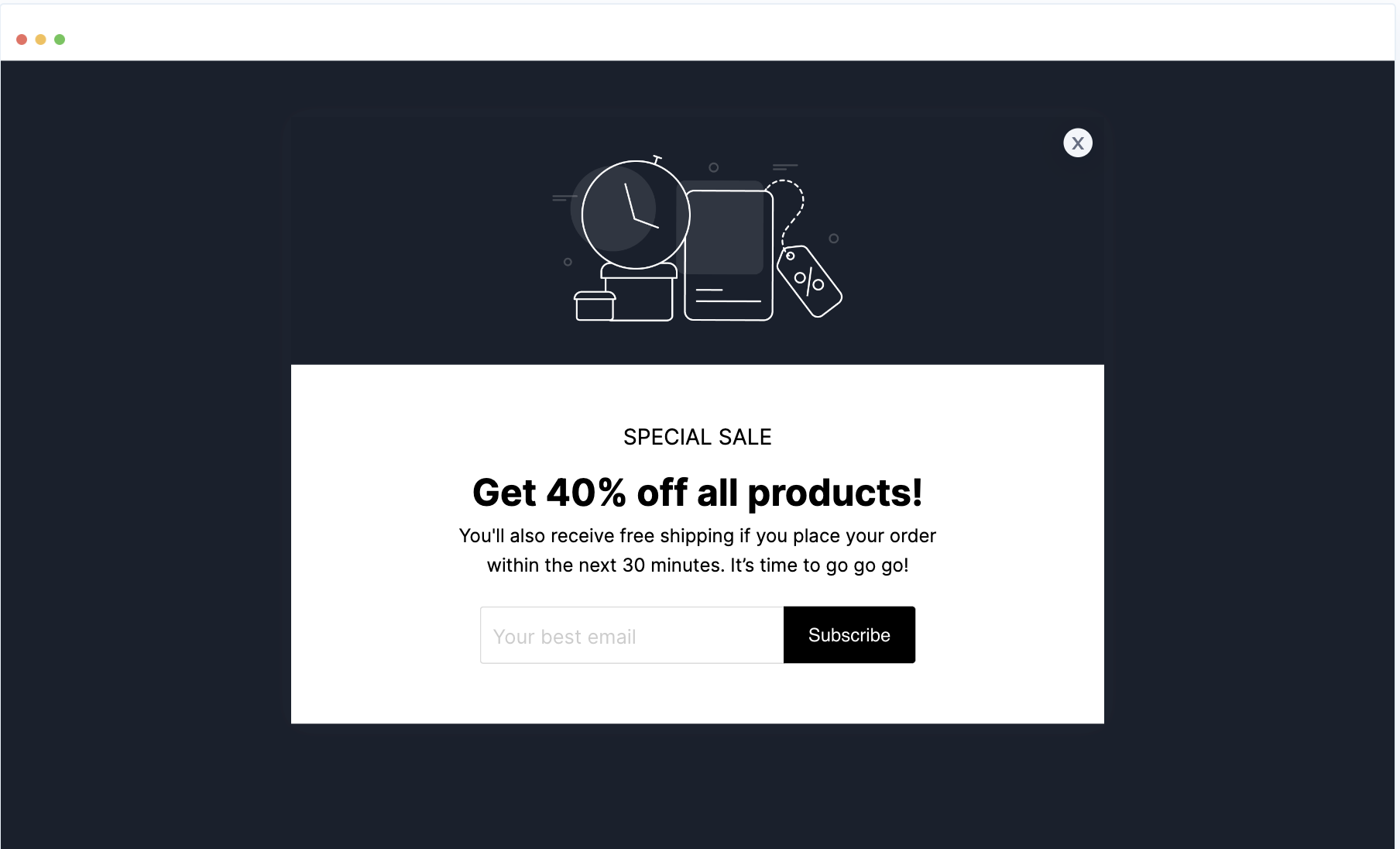

When you visit a website for the first time, you may sometimes encounter an annoying ad or offer that overlays the content. While this is usually not a problem when monitoring websites for changes, it can still sometimes cause false-positive alerts if screenshots capture the content overlaid with the popup. These popups may only appear once, or for specific visitors or geographic locations.

To mitigate false positives, we highly recommend using the "Hide website overlays & popups" action on affected pages. Keep in mind that this may not work on all pages.

If the "Hide website overlays & popups" action did not work, or if all content on the page becomes invisible, you can manually target the overlay with the "Remove page element" action to exclude it.

]]>

When monitoring web pages, you might find it useful to keep a historical HTML record for future reference. However, minor changes, such as dynamic updates to attributes, styles, or tags, can often trigger unnecessary alerts. These changes, while technically present in the HTML, might not affect the visual representation or the substantive content of the page.

Focus on Text Content: By monitoring the text content of a page rather than its HTML structure, you can significantly reduce the number of false alerts. Text content changes are more likely to represent meaningful updates to the page.

Use Multiple Tracked Elements: You can add several tracked elements to a single page. This lets you record HTML changes for reference while only receiving notifications for the elements that matter most.
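To illustrate the first point, the sketch below compares two versions of a page that differ only in an attribute value. The sample markup and helper names are hypothetical, and the text extraction is deliberately simplified (for instance, it does not skip script or style content).

```python
from html.parser import HTMLParser


class TextOnly(HTMLParser):
    """Collect only the text between tags, ignoring tags and attributes."""

    def __init__(self):
        super().__init__()
        self.parts = []

    def handle_data(self, data):
        if data.strip():
            self.parts.append(data.strip())


def visible_text(html):
    parser = TextOnly()
    parser.feed(html)
    parser.close()
    return " ".join(parser.parts)


old_html = '<p class="intro" data-session="a1">Plan price: $8/mo</p>'
new_html = '<p class="intro" data-session="z9">Plan price: $8/mo</p>'

print(old_html != new_html)                              # raw HTML comparison
print(visible_text(old_html) != visible_text(new_html))  # text comparison
```

The raw HTML comparison reports a difference because the attribute changed, while the text comparison does not, which is why text tracking produces fewer alerts.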

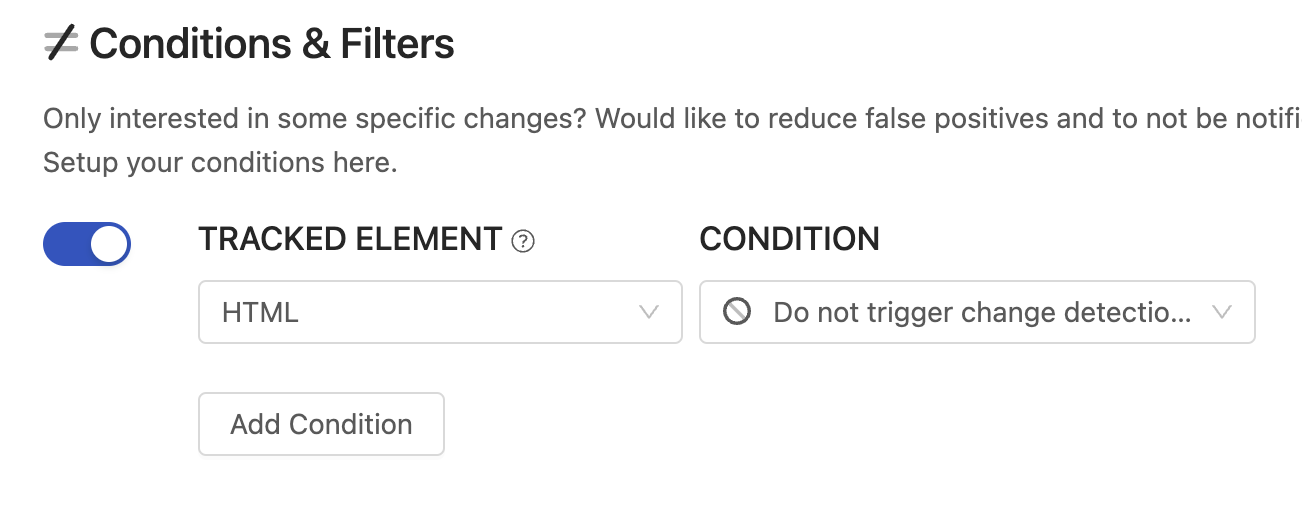

The "Do not trigger" option is a threshold setting on individual tracked elements. It records changes for that element without sending any notifications. Here is how to set it up:

With this configuration, PageCrawl will continue recording HTML changes in the timeline for future reference, but only changes on your other tracked elements (such as Text) will trigger notifications.

By carefully adjusting your monitoring settings, you can ensure that you are alerted only to significant changes that impact the content's meaning or visual presentation. This approach helps maintain the effectiveness of your monitoring efforts without the distraction of frequent, unnecessary notifications.

]]>Yes, we support cryptocurrency payments for Ultimate plans paid annually.

To arrange payment, please contact support at support@pagecrawl.io.

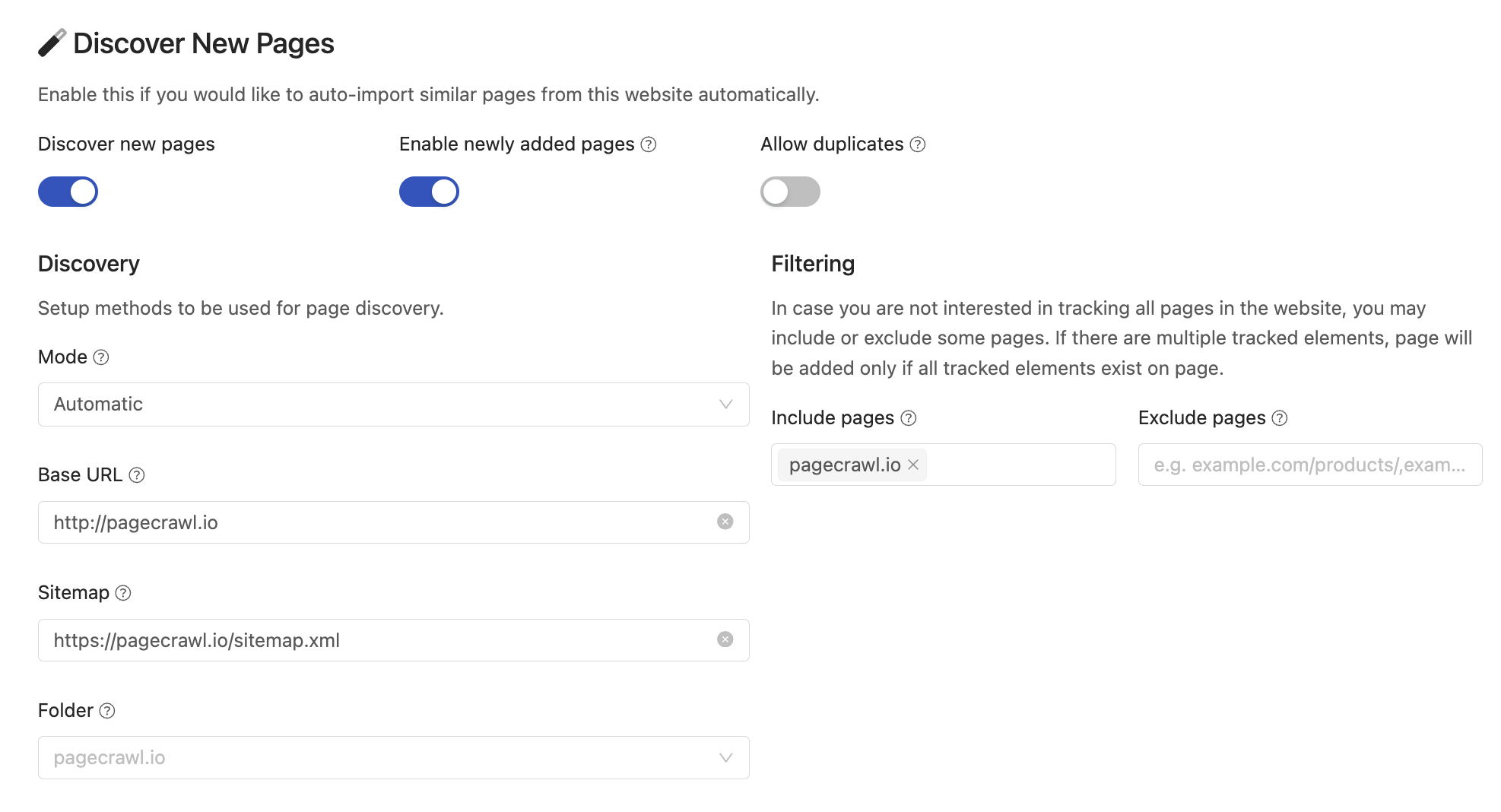

]]>PageCrawl is designed to make website change monitoring and management seamless. The "Discover New Pages" feature takes your change monitoring to the next level by automatically identifying new links, tracking changes, and ensuring your online presence remains up-to-date. In this guide, we'll delve into the capabilities of this feature, including its scanning methods, automated monitoring, and filtering options.

This feature performs automated scans of your website, identifying new links that have been added. This proactive approach keeps you informed about any changes to your website's link structure and updates.

PageCrawl provides multiple scanning methods to suit your needs. The default mode is Automatic (recommended), which combines methods to find new pages using the best approach for each website:

To start monitoring the website and automatically discover all new pages, configure a new Template, which will serve as the basis for monitoring new pages.

You may choose to monitor all pages on the website or only those with a specific structure (e.g., if you only want to track product pages and not other pages).
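Filtering discovered pages by structure can be pictured as matching each URL path against a pattern. The glob-style patterns, the function name, and the example.com URLs below are assumptions for illustration; the filter syntax available in PageCrawl may differ.

```python
from fnmatch import fnmatchcase
from urllib.parse import urlparse


def matches_structure(url, path_patterns):
    """True if the URL path matches at least one glob-style pattern."""
    path = urlparse(url).path
    return any(fnmatchcase(path, pattern) for pattern in path_patterns)


discovered = [
    "https://example.com/products/blue-mug",
    "https://example.com/blog/news",
    "https://example.com/products/red-mug?ref=home",
]

# Keep only product pages.
product_pages = [u for u in discovered if matches_structure(u, ["/products/*"])]
print(product_pages)
```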

File Checksum Monitoring detects when any online file has been modified by comparing its SHA-256 hash. Unlike text-based monitoring, this works with any file type, including zip archives, images, videos, and binary files. When a change is detected, the original file is stored so you can download and compare versions.
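The comparison itself can be sketched with Python's hashlib. This is a minimal illustration of hashing a file and detecting a change; the function names are hypothetical, and downloading and storing files are left out.

```python
import hashlib


def sha256_of_bytes(data):
    return hashlib.sha256(data).hexdigest()


def sha256_of_file(path, chunk_size=65536):
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def file_changed(previous_hash, current_bytes):
    """True if the current content no longer matches the stored hash."""
    return sha256_of_bytes(current_bytes) != previous_hash


stored = sha256_of_bytes(b"report v1")
print(file_changed(stored, b"report v1"))
print(file_changed(stored, b"report v2"))
```

Because the hash is computed over raw bytes, the same check works for archives, images, and other binary files where a text comparison is not possible.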