Pricing is the fastest-moving element of competitive strategy. A competitor can change their prices at any moment, and if you find out a week later, you have already lost deals. Whether you are running an e-commerce store, a SaaS business, or a retail operation, knowing what your competitors charge (and when they change it) is a direct input to revenue.

Price tracking tools automate this monitoring. Instead of manually visiting competitor websites, taking screenshots, and updating spreadsheets, you let these tools check prices on a schedule and alert you when something changes. Some go further with historical price charts, AI analysis, and integrations with your pricing and sales systems.

This guide compares the best competitor price tracking tools available in 2026, covering different approaches from dedicated pricing platforms to flexible website monitoring tools.

How Price Tracking Works

Before comparing tools, it helps to understand the two main approaches to automated price tracking.

Structured Data Extraction

Some tools connect to e-commerce platforms through APIs, product feeds, or structured data schemas (like Schema.org pricing markup). This approach extracts clean, structured price data but only works on platforms that expose it.

Pros: Clean data, high accuracy, product-level matching

Cons: Limited to supported platforms, requires product catalog mapping
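To make the structured approach concrete, here is a minimal sketch of reading a price from Schema.org JSON-LD markup using only the Python standard library. The sample page, the product name, and the helper names (`JsonLdCollector`, `extract_offers`) are illustrative assumptions, not part of any tool covered in this guide, and real pages often nest their markup in ways this sketch does not handle.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collects the text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def extract_offers(html):
    """Return (name, price, currency) tuples from top-level Product markup."""
    collector = JsonLdCollector()
    collector.feed(html)
    results = []
    for block in collector.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # skip malformed markup instead of failing the whole page
        items = data if isinstance(data, list) else [data]
        for item in items:
            if not isinstance(item, dict) or item.get("@type") != "Product":
                continue
            offers = item.get("offers", {})
            offers = offers if isinstance(offers, list) else [offers]
            for offer in offers:
                if isinstance(offer, dict) and "price" in offer:
                    results.append(
                        (item.get("name"), float(offer["price"]), offer.get("priceCurrency"))
                    )
    return results


# Hypothetical product page used only for illustration.
SAMPLE_HTML = """<html><head><script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Product", "name": "Example Widget",
 "offers": {"@type": "Offer", "price": "89.99", "priceCurrency": "USD"}}
</script></head><body></body></html>"""

offers = extract_offers(SAMPLE_HTML)
```

When the markup is present, the price arrives already labeled with a product name and currency, which is why this approach yields clean data. When it is absent, the function simply returns an empty list.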

Website Change Monitoring

Other tools monitor the actual web page and detect when price-related content changes. This is more flexible because it works on any website, but it requires more setup to extract the specific price data you want.

Pros: Works on any website, monitors non-standard pages, catches all changes

Cons: Requires element selection or smart extraction, can pick up non-price changes
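As a rough illustration of change monitoring, the sketch below pulls currency-prefixed amounts out of two text snapshots of the same page and reports the ones that differ. The regular expression, the function names, and the sample text are assumptions made for this example. Pairing prices by position only works while the page layout stays the same, which is one reason real tools rely on element selection.

```python
import re
from decimal import Decimal

# Matches amounts such as $399, $1,299.00 or €49.5 (a simplification).
PRICE_PATTERN = re.compile(r"[$€£]\s?(\d+(?:,\d{3})*(?:\.\d{1,2})?)")


def find_prices(text):
    """Return every currency-prefixed amount in the text as a Decimal, in order."""
    return [Decimal(m.group(1).replace(",", "")) for m in PRICE_PATTERN.finditer(text)]


def price_changes(old_text, new_text):
    """Pair prices by position and return (old, new) for each pair that differs."""
    old, new = find_prices(old_text), find_prices(new_text)
    return [(a, b) for a, b in zip(old, new) if a != b]


# Hypothetical snapshots of a pricing page taken at two different checks.
before = "Pro plan $299/month. Team plan $1,299.00/year."
after = "Pro plan $399/month. Team plan $1,299.00/year."
changes = price_changes(before, after)
```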

The best tools combine both approaches or give you the flexibility to use either depending on the target.

What to Look for in a Price Tracking Tool

Accuracy and Reliability

The foundation. If the tool misses price changes or reports incorrect prices, everything built on top of it fails. Look for:

- Consistent check frequency: Can you check prices hourly? Every 5 minutes? The right frequency depends on your market, but flexibility matters.

- Browser rendering: Many e-commerce sites load prices dynamically with JavaScript. Tools that use a real browser engine (like Chromium) handle these correctly. Simple HTTP scrapers often return blank prices.

- Error handling: What happens when a page is temporarily down or the layout changes? Good tools retry failed checks and alert you to issues rather than silently reporting stale data.

Coverage

How many competitors and products can you monitor?

- Number of monitors: Free tiers typically offer 5-25 monitors. Paid plans range from hundreds to thousands. If you are tracking 200+ products across 15 competitors, you need at least 3,000 monitors.

- Geographic pricing: Some competitors show different prices by location. Can the tool check from different regions?

- Currency handling: For international monitoring, the tool should handle multiple currencies and ideally provide conversion.
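The monitor count above is simple multiplication, and the same arithmetic shows how quickly check volume grows with frequency. A small sketch, assuming one monitor per product per competitor and a 30-day month (both function names are made up for this example):

```python
def monitors_needed(products, competitors):
    """One monitor per product page per competitor."""
    return products * competitors


def checks_per_month(monitors, interval_minutes, days=30):
    """Total checks if every monitor runs at a fixed interval all month."""
    checks_per_day = (24 * 60) // interval_minutes
    return monitors * checks_per_day * days


monitors = monitors_needed(products=200, competitors=15)
hourly_volume = checks_per_month(monitors, interval_minutes=60)
```

Running the numbers before you pick a plan helps you see whether the monitor limit or the monthly check allowance will be the real constraint.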

Price Intelligence Features

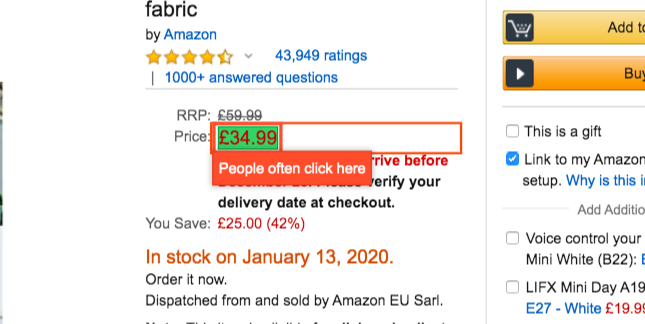

Raw price data is the starting point. Intelligence is what makes it actionable:

- Historical charts: See price trends over weeks and months, not just current prices.

- AI summaries: Get readable descriptions of what changed and by how much, without reading raw diffs.

- Alerts and thresholds: Set rules like "alert me if Competitor X drops below $99" or "notify if any competitor undercuts our price."

- Change percentage: See not just that a price changed, but the magnitude (5% increase vs. 50% increase).
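The threshold and change-percentage ideas above can be sketched in a few lines. The function names and the example prices are assumptions chosen for illustration; a real tool would expose these rules through its own settings.

```python
def percent_change(old_price, new_price):
    """Signed change from old to new, as a percentage of the old price."""
    return (new_price - old_price) / old_price * 100


def should_alert(competitor_price, our_price=None, floor=None):
    """True if the competitor drops below a floor or undercuts our price."""
    below_floor = floor is not None and competitor_price < floor
    undercuts = our_price is not None and competitor_price < our_price
    return below_floor or undercuts


increase = percent_change(299, 399)    # a plan moving from $299 to $399
alert = should_alert(95, floor=99)     # "alert me if Competitor X drops below $99"
```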

Integration and Workflow

Price data needs to reach the people and systems that act on it:

- Slack/Teams: Real-time alerts to your pricing or sales team.

- Webhook/API: Feed price data into your own dashboards, pricing engines, or CRM.

- Spreadsheet export: For analysis and reporting.

- Automation platforms: n8n, Zapier, or Make integrations for building automated workflows.
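If you route alerts yourself, a Slack incoming webhook accepts a JSON body with a `text` field. The sketch below only builds the request so you can inspect it before sending anything. The webhook URL is a placeholder, and the function name and message format are assumptions for this example.

```python
import json
import urllib.request


def build_slack_alert(webhook_url, competitor, product, old_price, new_price):
    """Build (but do not send) a POST request carrying a price-change message."""
    change = (new_price - old_price) / old_price * 100
    text = (
        f"{competitor}: {product} changed from "
        f"${old_price:.2f} to ${new_price:.2f} ({change:+.1f}%)"
    )
    body = json.dumps({"text": text}).encode("utf-8")
    return urllib.request.Request(
        webhook_url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Placeholder URL: replace with the webhook URL Slack generates for your channel.
request = build_slack_alert(
    "https://hooks.slack.com/services/EXAMPLE",
    "Competitor X", "Enterprise plan", 299, 399,
)
# To actually send it: urllib.request.urlopen(request)
```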

The Best Price Tracking Tools

We've tested every major monitoring platform extensively. Here's an honest look at each.

PageCrawl

Type: Website monitoring with smart price detection

Starting price: Free (6 monitors), $8/month (100 monitors), $30/month (500 monitors)

PageCrawl is a full-featured website monitoring platform that includes automatic price detection. When you add a product page and select "price tracking" mode, it automatically identifies prices on the page, extracts them, and tracks changes over time with a visual price chart.

Price tracking features:

- Automatic price detection on product pages (no CSS selectors needed for standard layouts)

- Cross-retailer product comparison: automatically groups the same product across retailers and shows side-by-side pricing with alerts when a store becomes the cheapest or most expensive

- Historical price charts showing trends

- AI-powered change summaries ("Enterprise plan increased 33% from $299 to $399")

- Element-specific monitoring for targeting individual prices on comparison pages

- Screenshot history to see exactly what the page looked like at each check

- Availability detection (in-stock/out-of-stock changes)


Strengths:

- Works on any website. Not limited to specific e-commerce platforms. Can monitor SaaS pricing pages, B2B portals, government procurement sites, and any page with a price.

- Smart price extraction that handles most product page layouts automatically.

- Combines price tracking with full website monitoring. Track prices, content changes, and visual changes in one tool.

- AI summaries translate diffs into actionable intelligence.

- Full notification stack: Slack, Discord, Telegram, Teams, email, webhooks.

- Free tier includes price tracking with 6 monitors.

- Supports login sequences for monitoring prices behind authentication.

Limitations:

- Price extraction works best on standard product pages. Complex multi-product tables may need element-specific selectors.

Best for: Teams that need flexible price monitoring across diverse sources (not just e-commerce), combined with broader website change detection.

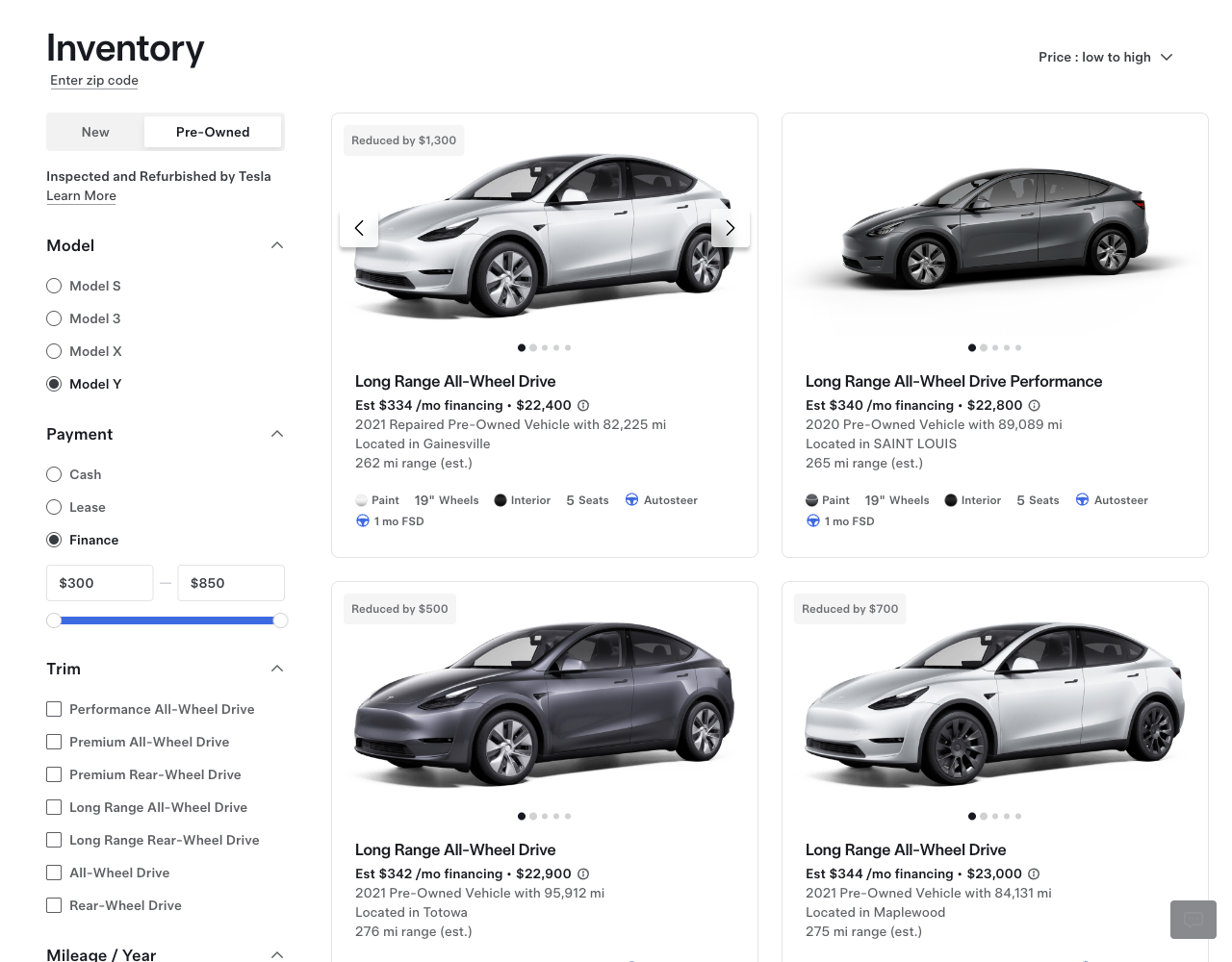

Prisync

Type: Dedicated e-commerce price intelligence

Starting price: $99/month (100 products)

Prisync is a dedicated competitor price monitoring platform built for e-commerce. It focuses on structured product-level price tracking with automatic competitor matching.

Price tracking features:

- Product-level price tracking with competitor matching

- Automatic competitor URL detection

- MAP (Minimum Advertised Price) monitoring

- Dynamic pricing suggestions

- Historical price data and analytics

- Email alerts for price changes

Strengths:

- Purpose-built for e-commerce pricing. The product-matching workflow is streamlined.

- MAP violation detection for brands monitoring their reseller network.

- Dynamic pricing suggestions based on competitor data.

- Dashboard designed specifically for pricing managers.

Limitations:

- Only works for e-commerce product pages. Cannot monitor SaaS pricing, B2B portals, or non-standard pages.

- Starting at $99/month for 100 products, it gets expensive at scale.

- Limited notification channels (primarily email).

- No website change detection beyond pricing.

Best for: E-commerce businesses that need structured product-level price intelligence with dynamic pricing capabilities. For a broader comparison of tools in this space, see our e-commerce monitoring tools guide.

Price2Spy

Type: Dedicated price monitoring and intelligence

Starting price: $94/month (basic plan)

Price2Spy focuses on price monitoring for e-commerce and retail with a strong emphasis on MAP compliance and repricing.

Price tracking features:

- Automated price and availability monitoring

- MAP compliance monitoring

- Price index and market position analysis

- Repricing suggestions

- Custom reports and analytics

- Email and Slack alerts

Strengths:

- Strong MAP monitoring and violation reporting.

- Detailed market position analysis showing where your prices rank against competitors.

- Good support for monitoring at scale (thousands of products).

- Repricing intelligence to automate pricing decisions.

Limitations:

- Enterprise-focused pricing that starts high for small teams.

- Primarily e-commerce focused. Limited flexibility for non-standard pages.

- Setup requires product catalog mapping, which takes time.

- Interface can feel dated compared to newer tools.

Best for: Brands and retailers who need MAP compliance monitoring at scale.

Visualping

Type: Website monitoring with basic price tracking

Starting price: $14/month (personal), business plans from $140/month

Visualping is a general website monitoring tool that can be used for price tracking through its visual and text monitoring modes.

Price tracking features:

- Visual comparison of pricing pages

- Text change detection on price elements

- Email alerts for detected changes

- Basic element selection for targeting prices

Strengths:

- Simple setup for non-technical users.

- Visual comparison mode clearly shows what changed on the page.

- Affordable entry-level pricing.

Limitations:

- No dedicated price tracking mode. You are monitoring for general page changes, not specifically extracting price data.

- No historical price charts or structured price data.

- No AI summaries on free/basic plans.

- Limited notification channels (Slack requires paid plan).

- No price-specific intelligence features (no trend analysis, no threshold alerts based on price values).

Best for: Simple, visual monitoring of a few competitor pricing pages where you just need to know "did the page change?"

Competera

Type: Enterprise pricing intelligence platform

Starting price: Custom (enterprise)

Competera is an enterprise pricing platform that combines competitor price monitoring with AI-driven pricing optimization.

Price tracking features:

- Automated competitor price collection at scale

- AI-driven optimal pricing recommendations

- Demand-based pricing models

- Portfolio-wide pricing strategy

- Integration with ERP and PIM systems

Strengths:

- End-to-end pricing solution from data collection to pricing optimization.

- AI models consider demand elasticity, not just competitor prices.

- Built for enterprise scale (millions of SKUs).

- Deep integration with retail systems.

Limitations:

- Enterprise pricing, not accessible for small or mid-size businesses.

- Primarily focused on retail and CPG industries.

- Long implementation timeline.

- Overkill for teams that just need to monitor a few competitors.

Best for: Large retailers and e-commerce companies that need AI-driven pricing optimization across a massive product catalog.

Scrapy + Custom Solution

Type: DIY web scraping framework

Starting price: Free (open source), but requires development time

For technically capable teams, building a custom price scraper with tools like Scrapy, Playwright, or Puppeteer is an option.

What you build:

- Custom scraping scripts per competitor site

- Database for storing historical price data

- Alert system for price changes

- Dashboard for visualization

Strengths:

- Complete flexibility. You control exactly what data you collect and how.

- No per-monitor pricing. Once built, monitoring additional pages adds only marginal cost.

- Can be tightly integrated with your internal systems.

Limitations:

- Significant development and maintenance investment. Each competitor site requires custom scraping logic.

- Handling anti-bot measures, CAPTCHAs, and IP blocking is your responsibility.

- Browser rendering adds infrastructure complexity.

- When sites change their layout, scrapers break and need updating.

- No AI summaries, no visual comparison, no built-in alerting unless you build it.

Best for: Technical teams with specific requirements that no off-the-shelf tool meets, and the engineering resources to build and maintain a custom solution.
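To give a sense of the storage piece of a DIY build, here is a minimal sketch of a price history table using Python's built-in `sqlite3` module. The schema, the function names, and the example URL are assumptions for illustration. Fetching and parsing the page, which is the hard part described above, is deliberately left out.

```python
import sqlite3


def open_store(path=":memory:"):
    """Open (or create) the price history database."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS prices ("
        "id INTEGER PRIMARY KEY AUTOINCREMENT, "
        "url TEXT NOT NULL, price REAL NOT NULL, checked_at TEXT NOT NULL)"
    )
    return conn


def record_price(conn, url, price, checked_at):
    """Store one check. Return the previous price if it differs, else None."""
    row = conn.execute(
        "SELECT price FROM prices WHERE url = ? ORDER BY id DESC LIMIT 1", (url,)
    ).fetchone()
    conn.execute(
        "INSERT INTO prices (url, price, checked_at) VALUES (?, ?, ?)",
        (url, price, checked_at),
    )
    conn.commit()
    if row is not None and row[0] != price:
        return row[0]
    return None


conn = open_store()
url = "https://example.com/product/1"  # hypothetical competitor page
first = record_price(conn, url, 49.99, "2026-01-01T09:00:00Z")
second = record_price(conn, url, 49.99, "2026-01-01T10:00:00Z")
third = record_price(conn, url, 44.99, "2026-01-01T11:00:00Z")
```

Every check is stored, not just the changes, so the same table can feed a historical chart later.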

Comparison Table

| Feature | PageCrawl | Prisync | Price2Spy | Visualping | Competera |
|---|---|---|---|---|---|
| Price detection | Automatic | Product matching | Product matching | Manual setup | Automated |
| Cross-retailer comparison | Yes | Limited | Limited | No | Yes |
| Non-e-commerce pages | Yes | No | Limited | Yes | No |
| Historical charts | Yes | Yes | Yes | No | Yes |
| AI summaries | Yes | No | No | No | Yes |
| Slack alerts | Yes (free) | No | Yes (paid) | Paid only | Custom |
| Webhook/API | Yes | Yes | Yes | Paid only | Yes |
| Login support | Yes | No | No | No | No |
| Starting price | Free | $99/mo | $94/mo | $14/mo | Enterprise |
| Free tier | 6 monitors | No | Trial only | 5 monitors | No |

How to Build a Price Intelligence Workflow

Having the right tool is step one. Here is how to build a workflow that turns price data into business value.

Step 1: Map Your Competitive Landscape

Start by listing:

- Direct competitors: Companies your prospects compare you against in every deal

- Indirect competitors: Companies offering alternative solutions to the same problem

- Price leaders: The companies that set pricing expectations in your market

If your competitors sell on major retail platforms, our retailer-specific guides cover monitoring Amazon prices, Best Buy prices, and Walmart prices in detail. If you need to compare the same product across multiple retailers at once, see our guide to cross-retailer price comparison.

For each, identify:

- Pricing page URL

- Individual product/plan URLs

- Any public price lists or catalogs

Step 2: Set Up Monitoring

Configure a monitor for each pricing page with appropriate settings:

- Check frequency: Every 2-4 hours for competitive markets, daily for stable markets

- Tracking mode: Use price tracking or element-specific monitoring for clean data

- AI focus: Set the AI summary to focus on "pricing changes, plan restructuring, new tiers, and promotional offers"

- Noise filters: Remove date stamps, testimonial rotations, and visitor counters that change independently of prices
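Noise filtering amounts to stripping the parts of a page that change on their own before comparing snapshots. A minimal sketch, assuming ISO-style date stamps and a "visitors online" counter as the noise sources (the patterns and function names are illustrative, and each site needs its own):

```python
import re

NOISE_PATTERNS = [
    re.compile(r"\b\d{4}-\d{2}-\d{2}\b"),  # date stamps such as 2026-01-05
    re.compile(r"\b\d[\d,]*\s+(?:visitors|people) (?:online|viewing)\b", re.IGNORECASE),
]


def normalize(text):
    """Remove known noise and collapse whitespace so snapshots compare cleanly."""
    for pattern in NOISE_PATTERNS:
        text = pattern.sub("", text)
    return " ".join(text.split())


def meaningfully_changed(old_text, new_text):
    """True only if the snapshots differ after noise is removed."""
    return normalize(old_text) != normalize(new_text)


# Hypothetical snapshots: only the date and visitor counter differ.
monday = "Updated 2026-01-05. Pro plan $49/mo. 1,204 visitors online"
tuesday = "Updated 2026-01-06. Pro plan $49/mo. 987 visitors online"
noise_only = meaningfully_changed(monday, tuesday)
```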

Step 3: Build Your Alert Pipeline

Route price alerts to where they will be acted on:

- Immediate: Price drops or significant changes go to a dedicated Slack channel and notify the pricing team

- Daily digest: Minor changes, promotional offers, and new products go in a daily summary

- Webhook to dashboard: All price changes feed into a central dashboard or spreadsheet for historical analysis

- CRM update: Competitor pricing changes attached to relevant deals in your CRM
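The routing rules above can be expressed as a small function. This is a sketch under stated assumptions: "significant" is taken to mean a move of 5% or more, and the destination names are placeholders for whatever channels you actually use.

```python
def route_change(old_price, new_price, significant_pct=5.0):
    """Return the destinations for one price change."""
    change_pct = (new_price - old_price) / old_price * 100
    destinations = ["dashboard"]  # every change is kept for historical analysis
    if new_price < old_price or abs(change_pct) >= significant_pct:
        destinations.append("slack")  # price drops and large moves go out immediately
    else:
        destinations.append("daily_digest")  # small increases wait for the summary
    return destinations


drop = route_change(100, 95)
small_rise = route_change(100, 102)
large_rise = route_change(100, 110)
```

Keeping the rule in one place makes it easy to tune the threshold once you see how noisy your market is.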

Step 4: Establish a Review Process

Data without action is waste. Establish a regular process:

- Daily: Pricing team reviews overnight changes and decides on immediate responses

- Weekly: Broader team reviews pricing trends and discusses strategic implications

- Monthly: Analyze pricing movement patterns and update your competitive pricing strategy

- Quarterly: Review the full competitive pricing landscape and adjust your monitoring setup

Step 5: Measure Impact

Track the business impact of your price intelligence:

- Response time: How quickly do you respond to competitor price changes? (Target: same business day)

- Win rate: Has visibility into competitor pricing improved your deal win rate?

- Price optimization: Are you leaving money on the table or pricing too aggressively?

- Coverage: Are you monitoring all relevant competitors and products?

Common Price Tracking Challenges

Dynamic Pricing

Many e-commerce sites use dynamic pricing that changes based on demand, inventory, time of day, or visitor behavior. This means:

- Prices may differ between checks even without a "real" pricing change

- The price you monitor may not be the price your customers see

- Promotional pricing (flash sales, limited offers) creates noise

Solution: Check more frequently during business hours, use historical averaging to identify real pricing shifts vs. dynamic fluctuations, and set change thresholds to filter out minor variations (under 2-3%).
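One way to combine averaging with a threshold is to compare each new price against the median of recent checks. The sketch below uses the median instead of the mean so that a single flash-sale reading does not drag the baseline. The 3% default follows the range mentioned above, while the function name and the sample history are assumptions.

```python
from statistics import median


def is_real_shift(history, latest, threshold_pct=3.0):
    """True if the latest price deviates from the recent median by the threshold or more."""
    baseline = median(history)
    deviation = abs(latest - baseline) / baseline * 100
    return deviation >= threshold_pct


# Hypothetical recent checks for one product.
recent = [100.0, 101.0, 99.5, 100.5, 100.0]
fluctuation = is_real_shift(recent, 101.5)   # 1.5% above the median
real_cut = is_real_shift(recent, 92.0)       # 8% below the median
```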

Personalized Pricing

Some sites show different prices based on cookies, location, browsing history, or account status.

Solution: Use monitoring tools that can check from different locations and clear cookies between checks. PageCrawl includes built-in location options and cookie removal actions.

Anti-Bot Detection

Competitors may block automated monitoring tools with CAPTCHAs, rate limiting, or IP blocking.

Solution: Use tools with proper browser rendering (not simple HTTP requests), vary check locations, and use reasonable check frequencies (hourly, not every minute). If a specific site blocks you, try different check locations or reduce check frequency.

Price Behind Login

Some B2B vendors only show real pricing after authentication (not listed on public pages).

Solution: Use monitoring tools that support login sequences to authenticate before checking prices. PageCrawl's actions system handles this with navigate-fill-click sequences.

Pricing Page Types and How to Monitor Them

Standard Product Pages

E-commerce product pages with a single price per product. Most tools handle these automatically. Use price tracking mode for clean extraction.

Comparison/Plan Pages

SaaS pricing pages with multiple plans displayed in columns or cards. Monitor each plan's price as a separate element, or use full-page monitoring to catch plan restructuring, feature changes, and tier additions.
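Once each plan's price is captured as its own value, comparing two snapshots shows repricing and restructuring in one pass. A minimal sketch, with hypothetical plan names and prices:

```python
def diff_plans(old, new):
    """Compare two {plan name: price} snapshots of a pricing page."""
    shared = sorted(set(old) & set(new))
    return {
        "added": sorted(set(new) - set(old)),
        "removed": sorted(set(old) - set(new)),
        "repriced": {name: (old[name], new[name]) for name in shared if old[name] != new[name]},
    }


# Hypothetical snapshots of a competitor's plan page.
last_week = {"Starter": 29, "Pro": 99, "Enterprise": 299}
this_week = {"Starter": 29, "Pro": 99, "Team": 149, "Enterprise": 399}
report = diff_plans(last_week, this_week)
```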

Catalog/Search Results

Product listing pages showing many items with prices. Monitor the page for overall changes, or set up individual monitors for high-priority products. Use element selectors to target specific product cards.

Wholesale/B2B Pricing

Often requires authentication and may show volume-based pricing tiers. Use login-enabled monitoring and track the specific tier relevant to your purchase volumes.

Choosing your PageCrawl plan

PageCrawl's Free plan lets you monitor 6 pages with 220 checks per month, which is enough to validate the approach on your most critical pages. Most teams graduate to a paid plan once they see the value.

| Plan | Price | Pages | Checks / month | Frequency |
|---|---|---|---|---|
| Free | $0 | 6 | 220 | every 60 min |
| Standard | $8/mo or $80/yr | 100 | 15,000 | every 15 min |
| Enterprise | $30/mo or $300/yr | 500 | 100,000 | every 5 min |
| Ultimate | $99/mo or $999/yr | 1,000 | 100,000 | every 2 min |

Annual billing saves about two months' cost on every paid tier. Enterprise and Ultimate scale up to 100x if you need thousands of pages or multi-team access.

Standard at $80/year covers 100 product pages, enough to track your top SKUs across several competitors at 15-minute intervals. Catching a single competitor price cut early, and responding with a matching discount, can recover margin that would otherwise be lost for days. Enterprise at $300/year extends that to 500 pages at 5-minute checks, which is enough to cover a full product category across every major retailer you compete with.

Getting Started

Pick your top three competitors. Set up price monitors on their main pricing page or top 5 products. Route alerts to Slack. Run it for two weeks and see what changes. For a broader look at tracking competitor activity beyond just pricing, see our guide on how to track competitor websites.

Most teams are surprised by how frequently competitor prices change. Armed with that visibility, you can respond faster and price more strategically.

PageCrawl's free tier includes price tracking with automatic detection, AI summaries, and Slack alerts for up to 6 monitors. Start tracking competitor prices today.
